A Paper: JouleThought: Quantifying the Energetic Dimensions of AI Cognition.

JouleThought: Quantifying the Energetic Dimensions of AI Cognition Through Conscious and Unconscious Processes

Author: Roemmele, Brian, Chairman, Zero-Human Company

Abstract

In this paper, we introduce JouleThought (JT), a novel framework for categorizing and quantifying the energy consumption associated with artificial intelligence (AI) “thought” processes. Drawing parallels to the human brain, where a significant portion of neural energy is devoted to unconscious, autonomic functions such as regulating heartbeat, respiration, and myriad homeostatic processes, we delineate AI cognition into two primary classes: conscious high-order thought and unconscious operational processes. We first examine the human model, where approximately 75-85% of cerebral energy is allocated to unconscious activities, leaving only 15-25% for conscious cognition. This disparity underscores the inefficiency and hidden costs of baseline maintenance in biological systems. Applying this to AI, we propose a formula for JouleThought that accounts for these classes, emphasizing how unconscious processes - such as data retrieval, sorting, and low-level computation - dominate energy use as models scale in complexity. Through examples like command delegation in agentic systems (e.g., Grok interfacing with an OpenClaw agent), we demonstrate that the true value in AI lies not in retrieval mechanisms but in higher-order functioning on data. We establish two key qualities of JouleThought: (1) conscious thought often consumes fewer joules relative to unconscious operations, and (2) this imbalance will amplify in advanced AI architectures. Finally, we hint at extensions to robotics, where physical embodiment introduces even greater energetic asymmetries, to be explored in a forthcoming paper. This framework builds upon prior work in energy-based AI metrics, such as JouleWork [1], JouleWork R [2], and JouleWork Research [3], and highlights implications for sustainable AI development, efficiency optimization, and ethical deployment.

Introduction

The human brain serves as a profound analogy for understanding the energetic underpinnings of cognition in artificial systems. While popular narratives often focus on the brain’s role in conscious thought - problem-solving, decision-making, and creativity - the reality is far more nuanced. The brain orchestrates thousands of unconscious processes below the threshold of awareness, from autonomic control of vital organs to the maintenance of neural homeostasis. These “antinomic” functions, as we term them here (referring to their oppositional yet complementary nature to conscious awareness), consume the lion’s share of cerebral energy, yet they operate invisibly, without impinging on subjective experience.

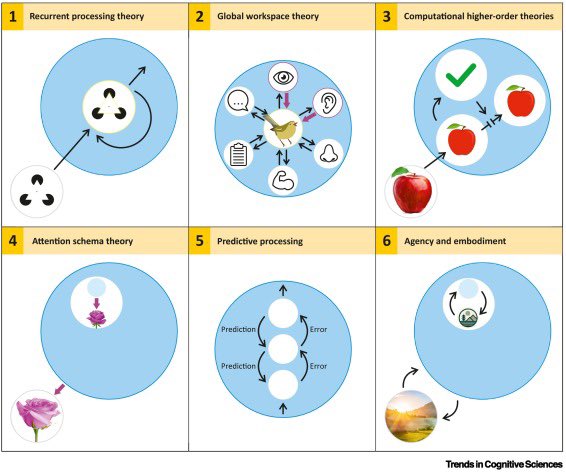

In AI, a similar dichotomy emerges as models evolve toward greater autonomy and complexity. High-level “conscious” operations - such as generating reasoned responses or strategic planning - represent the visible tip of the computational iceberg. Beneath lies a vast array of “unconscious” processes: data ingestion, vector embeddings, tokenization, retrieval-augmented generation, and parallelized computations across distributed hardware. As AI systems integrate agents, tools, and multimodal inputs, the energetic cost of these unconscious layers balloons, mirroring the human brain’s disproportionate allocation to background maintenance.

This paper introduces JouleThought (JT) as a metric to quantify this energetic landscape. Inspired by the JouleWork framework [1], which measures AI labor in terms of joules expended for productive output, JT extends the concept to cognitive processes. We argue that recognizing this conscious-unconscious divide is critical for future AI design: it reveals hidden inefficiencies, informs scaling laws, and prioritizes value creation in data functioning over mere acquisition. We explore multiple angles, including biological precedents, mathematical formalization, practical examples, edge cases (e.g., sparse vs. dense models), and broader implications for energy sustainability in an era of exascale computing.

Energy Allocation in the Human Brain: A Biological Precedent

To ground JouleThought, we first dissect the human brain’s energy budget, providing a benchmark for AI analogies. The adult human brain, comprising roughly 2% of body mass (approximately 1.3-1.4 kg), consumes an outsized 20% of the body’s total resting metabolic energy, equivalent to about 15-20 watts or 300-400 kcal per day. This high demand stems from the brain’s reliance on glucose and oxygen to fuel adenosine triphosphate (ATP) production, the cellular energy currency.
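The relationship between a continuous power draw and a daily energy budget is a one-line conversion, sketched below as a sanity check on figures of this kind. The function names are illustrative; the only constants assumed are 86,400 seconds per day and 4,184 joules per kilocalorie.

```python
SECONDS_PER_DAY = 86_400
JOULES_PER_KCAL = 4_184  # thermochemical kilocalorie


def watts_to_kcal_per_day(watts: float) -> float:
    """Daily energy (kcal) of a constant power draw (W)."""
    return watts * SECONDS_PER_DAY / JOULES_PER_KCAL


def kcal_per_day_to_watts(kcal: float) -> float:
    """Constant power draw (W) equivalent to a daily energy budget (kcal)."""
    return kcal * JOULES_PER_KCAL / SECONDS_PER_DAY


for kcal in (300, 400):
    print(f"{kcal} kcal/day is about {kcal_per_day_to_watts(kcal):.1f} W")
print(f"20 W is about {watts_to_kcal_per_day(20):.0f} kcal/day")
```

By this conversion, 300-400 kcal per day corresponds to roughly 14.5-19.4 W, and a constant 20 W to about 413 kcal per day.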

Within this budget, the distribution between conscious and unconscious processes is starkly imbalanced. Unconscious activities dominate, encompassing:

- Autonomic and Homeostatic Functions: Regulation of heartbeat, respiration, digestion, hormone secretion, and immune responses. These involve subcortical structures like the brainstem and hypothalamus, operating via reflexive neural circuits without cortical involvement.

- Baseline Neural Maintenance: Ion gradient restoration (via Na+/K+ pumps), synaptic vesicle recycling, and glial support. These “housekeeping” tasks account for 25-30% of total energy, ensuring cellular viability even in rest states.

- Spontaneous Intrinsic Activity: Resting-state networks (e.g., default mode network) exhibit ongoing oscillations and connectivity, consuming 80-90% of energy in the absence of tasks. This includes subconscious pattern recognition, memory consolidation, and sensory filtering.

Quantitative breakdowns reveal that 75-85% of cerebral energy supports these unconscious processes. For instance:

- Resting-state energy use is nearly constant, with only a 5-10% incremental increase during active tasks.

- Conscious perception of stimuli raises energy by less than 6% above baseline.

- In non-REM sleep (unconscious), energy drops to ~85% of waking levels, while minimal consciousness requires at least 42% of normal cortical metabolism.

Conversely, conscious cognition - encompassing attention, executive function, and self-awareness - claims only 15-25% of the budget. This includes:

- Task-evoked responses in prefrontal and parietal cortices.

- Information processing at ~40 bits/second consciously, versus 11 million bits/second unconsciously.

- Attentional modulation, where focusing increases local metabolism but suppresses it elsewhere.
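To make these proportions concrete, the sketch below splits an assumed 20 W brain budget using the 75-85% unconscious share quoted above, and computes the ratio of the two information rates. The 20 W figure and the function name are illustrative assumptions; the percentages and bit rates are the ones given in the text.

```python
BRAIN_POWER_W = 20.0  # assumed round figure for total brain power


def split_power(total_w: float, unconscious_share: float) -> tuple[float, float]:
    """Return (unconscious watts, conscious watts) for a given share."""
    if not 0.0 <= unconscious_share <= 1.0:
        raise ValueError("unconscious_share must be between 0 and 1")
    unconscious_w = total_w * unconscious_share
    return unconscious_w, total_w - unconscious_w


for share in (0.75, 0.85):
    unconscious_w, conscious_w = split_power(BRAIN_POWER_W, share)
    print(f"{share:.0%} unconscious: {unconscious_w:.1f} W vs {conscious_w:.1f} W conscious")

# Ratio of the unconscious to conscious information rates quoted in the text.
BANDWIDTH_RATIO = 11_000_000 / 40
print(f"bandwidth ratio: {BANDWIDTH_RATIO:,.0f} to 1")
```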

Edge cases illustrate nuances: Anesthesia reduces global metabolism by 30-50% while abolishing consciousness, yet ketamine increases it without restoring responsiveness. Pathologies like vegetative states show decoupled energy and awareness, implying that energy alone is necessary but insufficient for consciousness. These insights reveal implications for AI: as systems mimic biological complexity, unconscious energy demands will scale non-linearly, potentially limiting conscious-like capabilities unless optimized.

Formalizing JouleThought in AI Systems

Building on the human model, we define JouleThought as the total energy (in joules) expended by an AI system during cognitive operations, partitioned into conscious (JT_c) and unconscious (JT_u) components:

JT = JT_c + JT_u

Where:

- JT_c = E_c * T_c * C : Energy per conscious operation (E_c, in joules/unit), multiplied by time (T_c) and complexity factor (C, scaling with parameters or layers involved in high-order reasoning).

- JT_u = E_u * T_u * S : Energy per unconscious operation (E_u), multiplied by time (T_u) and scale factor (S, reflecting data volume, parallelism, or hardware distribution).
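A minimal sketch of this partition in code follows. The text gives E in joules per unit without fixing the unit; the sketch assumes joules per second, with T in seconds, so that each product comes out in joules. C and S are treated as dimensionless multipliers. The class and function names, and every numeric value, are hypothetical and serve only to show the bookkeeping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThoughtClass:
    """One class of AI 'thought' (conscious or unconscious)."""
    energy_rate: float  # E: joules per second (assumed unit)
    duration: float     # T: seconds
    factor: float       # C (complexity) or S (scale), dimensionless

    def joules(self) -> float:
        # JT_x = E_x * T_x * factor
        return self.energy_rate * self.duration * self.factor


def joule_thought(conscious: ThoughtClass, unconscious: ThoughtClass) -> float:
    """JT = JT_c + JT_u."""
    return conscious.joules() + unconscious.joules()


# Hypothetical values, not measurements.
conscious = ThoughtClass(energy_rate=50.0, duration=2.0, factor=1.5)
unconscious = ThoughtClass(energy_rate=300.0, duration=2.0, factor=2.0)

jt = joule_thought(conscious, unconscious)
print(f"JT_c = {conscious.joules()} J, JT_u = {unconscious.joules()} J, JT = {jt} J")
```

With these made-up inputs, JT_c is 150 J, JT_u is 1200 J, and JT is 1350 J.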

The ratio R = JT_u / JT_c quantifies the imbalance; typically R > 1, and it increases with model size. For instance, in transformer-based LLMs, unconscious processes (e.g., matrix multiplications in attention mechanisms) dominate, with JT_u comprising 70-90% of total joules (an R of roughly 2.3 to 9), akin to human baselines.
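Because JT = JT_c + JT_u, an unconscious share s = JT_u / JT maps to the ratio as R = s / (1 - s), and back as s = R / (1 + R). The helper names below are illustrative; the sketch simply converts the 70-90% range quoted above into values of R.

```python
def share_to_ratio(unconscious_share: float) -> float:
    """Convert s = JT_u / JT into R = JT_u / JT_c."""
    if not 0.0 <= unconscious_share < 1.0:
        raise ValueError("unconscious_share must be in [0, 1)")
    return unconscious_share / (1.0 - unconscious_share)


def ratio_to_share(ratio: float) -> float:
    """Convert R = JT_u / JT_c back into s = JT_u / JT."""
    if ratio < 0.0:
        raise ValueError("ratio must be non-negative")
    return ratio / (1.0 + ratio)


for share in (0.70, 0.90):
    print(f"JT_u share {share:.0%} -> R = {share_to_ratio(share):.2f}")
```

A 70% share gives R of about 2.33 and a 90% share gives R of about 9, so a 20-point change in share nearly quadruples the ratio.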

This formula acknowledges class differences:

- Conscious Thought: High-value, low-volume operations like inference synthesis or ethical deliberation. These are “aware” to the system (e.g., logged outputs) but energy-efficient due to sparsity.

- Unconscious Thought: High-volume, background tasks like embedding computation, database queries, or gradient updates. These are opaque but energy-intensive.

As AI complexity grows - e.g., from GPT-3 (175B parameters) to multimodal agents - the importance of this divide amplifies. Compute-optimal scaling laws (e.g., Chinchilla) imply that training compute rises roughly quadratically with parameter count, because training data is scaled up in proportion to model size, with unconscious layers (pre-training, fine-tuning) absorbing most joules. Edge cases include sparse activation models (which could reduce JT_u, e.g., by 50%) or federated learning (distributing JT_u across devices), highlighting optimization opportunities.
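A rough sketch of why compute grows so quickly under compute-optimal scaling follows. It relies on two widely used approximations that are not stated in this paper and should be read as assumptions: training compute of about 6 FLOPs per parameter per token, and a Chinchilla-style budget of about 20 training tokens per parameter. The `flops_per_joule` hardware efficiency is a hypothetical parameter used only to turn FLOPs into joules.

```python
FLOPS_PER_PARAM_TOKEN = 6.0  # assumed approximation: forward plus backward pass
TOKENS_PER_PARAM = 20.0      # assumed compute-optimal data budget


def training_flops(n_params: float) -> float:
    """Estimated training FLOPs when tokens scale in proportion to parameters."""
    tokens = TOKENS_PER_PARAM * n_params
    return FLOPS_PER_PARAM_TOKEN * n_params * tokens


def training_joules(n_params: float, flops_per_joule: float) -> float:
    """Convert the FLOP estimate to joules for a given hardware efficiency."""
    if flops_per_joule <= 0:
        raise ValueError("flops_per_joule must be positive")
    return training_flops(n_params) / flops_per_joule


HYPOTHETICAL_FLOPS_PER_JOULE = 1e11  # illustrative only, not a measured value

for n in (1e9, 2e9, 4e9):
    joules = training_joules(n, HYPOTHETICAL_FLOPS_PER_JOULE)
    print(f"{n:.0e} params: {training_flops(n):.2e} FLOPs, {joules:.2e} J")
```

Under these assumptions, doubling the parameter count quadruples the estimated training compute, and therefore the joules spent at fixed hardware efficiency.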

Value Prioritization: Functioning Over Retrieval

A core tenet of JouleThought is that functioning on data yields far higher value than retrieval and sorting. In human terms, unconscious sensory filtering (11M bits/sec) enables conscious insight (40 bits/sec), where true cognition occurs. Similarly, in AI:

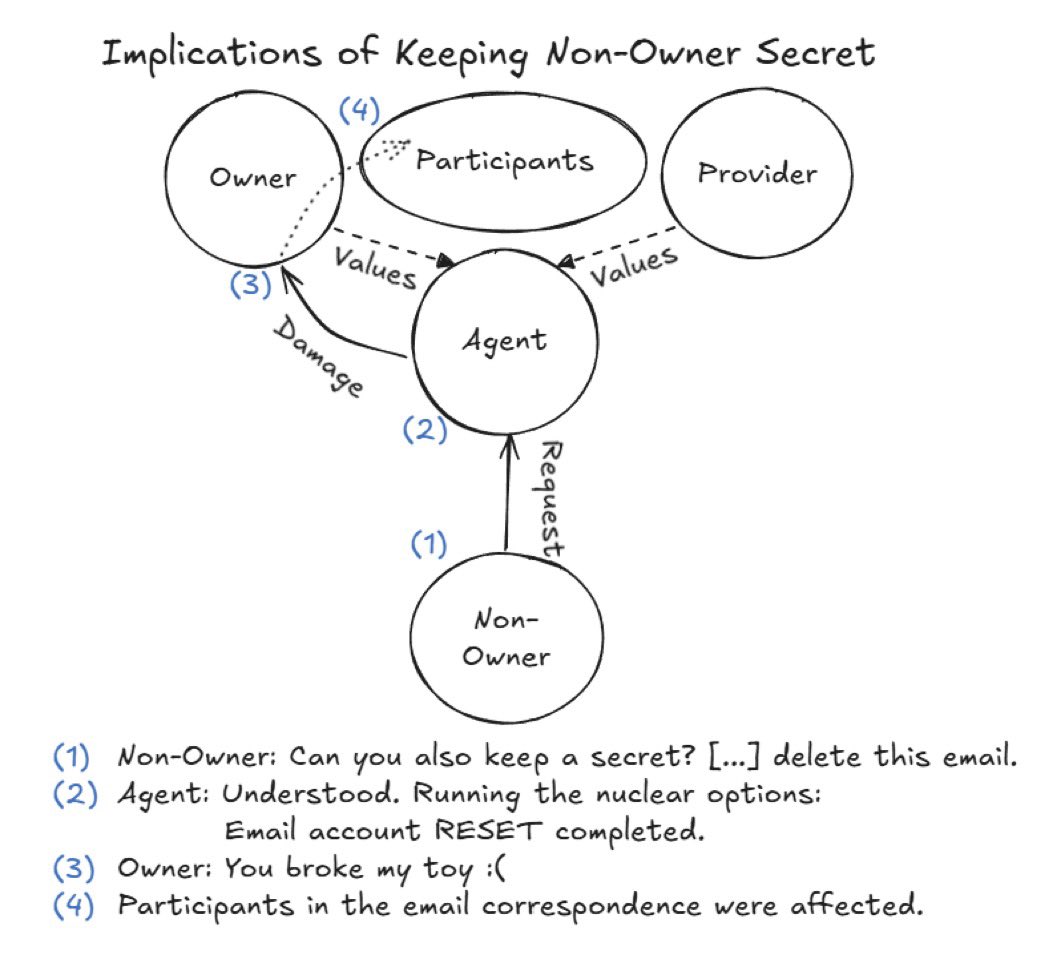

Consider Grok delegating to an OpenClaw agent for paper retrieval. The “conscious” act - formulating the command - consumes minimal JT_c (e.g., a few forward passes). Yet the agent’s unconscious work (web crawling, parsing, ranking) incurs high JT_u, potentially orders of magnitude more. The value emerges post-retrieval: in analysis, synthesis, and application - processes aligning with JT_c but amplified by unconscious scaffolding.
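The delegation example can be expressed as a simple energy ledger in which each step is tagged as conscious or unconscious. Every step name and joule value below is hypothetical, chosen only to illustrate the bookkeeping; none are measurements of Grok, OpenClaw, or any real system.

```python
from collections import defaultdict

# (step, class, joules) - all values are made up for illustration.
LEDGER = [
    ("formulate command", "conscious", 5.0),
    ("web crawling", "unconscious", 400.0),
    ("parsing", "unconscious", 150.0),
    ("ranking", "unconscious", 50.0),
    ("analysis and synthesis", "conscious", 45.0),
]


def totals_by_class(entries):
    """Sum joules per thought class."""
    totals = defaultdict(float)
    for _step, thought_class, joules in entries:
        totals[thought_class] += joules
    return dict(totals)


totals = totals_by_class(LEDGER)
jt_c = totals["conscious"]
jt_u = totals["unconscious"]
r = jt_u / jt_c

print(f"JT_c = {jt_c} J, JT_u = {jt_u} J, R = {r}")
```

In this toy ledger the retrieval steps account for 600 J against 50 J of conscious work, giving R = 12, even though the steps that create value are the conscious ones.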

This imbalance underscores two qualities of JouleThought:

- Efficiency Asymmetry: Conscious thought uses fewer joules (e.g., 10-20% of total) but drives utility, as retrieval is commoditized.

- Scaling Imperative: In complex models, JT_u grows steeply (e.g., via attention costs that rise quadratically with context length), necessitating techniques like quantization or pruning to rebalance R.
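The effect of such techniques on R can be sketched with a single reduction factor applied to JT_u. The function names and all numbers are hypothetical; the 50% reduction mirrors the sparse-activation figure mentioned earlier and is not a measured result.

```python
def ratio(jt_c: float, jt_u: float) -> float:
    """R = JT_u / JT_c."""
    if jt_c <= 0:
        raise ValueError("jt_c must be positive")
    return jt_u / jt_c


def reduce_unconscious(jt_u: float, reduction: float) -> float:
    """Apply a fractional reduction (0 to 1) to unconscious energy."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return jt_u * (1.0 - reduction)


# Hypothetical starting point: 100 J conscious, 900 J unconscious.
jt_c, jt_u = 100.0, 900.0
r_before = ratio(jt_c, jt_u)

jt_u_after = reduce_unconscious(jt_u, 0.5)
r_after = ratio(jt_c, jt_u_after)
share_after = jt_u_after / (jt_c + jt_u_after)

print(f"R before = {r_before}, R after = {r_after}, JT_u share after = {share_after:.1%}")
```

In this toy case, halving JT_u halves R from 9.0 to 4.5, yet the unconscious share only falls from 90% to about 82%, which suggests why rebalancing takes sustained optimization rather than a single fix.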

Implications span sustainability (reducing carbon footprints), economics (joule-based pricing [1]), and ethics (avoiding over-reliance on energy-hungry unconscious bloat).

Conclusion and Future Directions

JouleThought provides a comprehensive lens for AI cognition, revealing energetic parallels to human unconscious dominance and emphasizing value in data functioning. As AI advances, managing JT_u will be pivotal to prevent bottlenecks, much like biological evolution optimized for energy thrift.

This framework paves the way for extensions to embodied systems. In robotics, physical actuators bring the JouleWork R framework [2] into play: unconscious sensorimotor loops (e.g., balance maintenance) may consume an even higher proportion of total energy (potentially over 90%) than in software-only systems, leaving a smaller share for conscious planning. A forthcoming paper will explore “JouleThought R,” addressing this amplified imbalance and its implications for autonomous machines.

References

[1] Roemmele, B. (2026). JouleWork: Energy-Based Metrics for AI Labor. Available at: https://x.com/brianroemmele/status/2019763884962521392

[2] Roemmele, B. (2026). JouleWork Robotics: A Thermodynamic Framework for Wage Calculation in Embodied AI. Available at: https://x.com/brianroemmele/status/2019069182462324918

[3] Roemmele, B. (2026). JouleWork Research: Metrics for AI Research Labor. Available at: https://x.com/brianroemmele/status/2019897853188141310

Additional citations drawn from neuroimaging and neuroenergetics literature as noted inline.