TruthArchive.ai - Tweets Saved By @DynamicWebPaige

@DynamicWebPaige - 👩💻 Paige Bailey

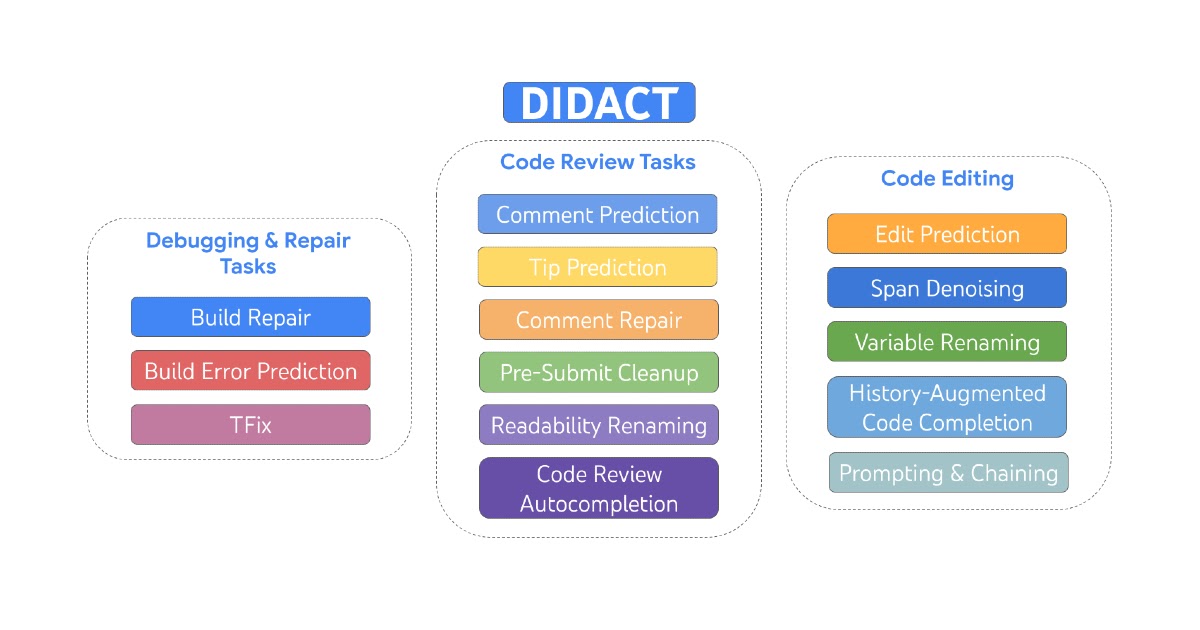

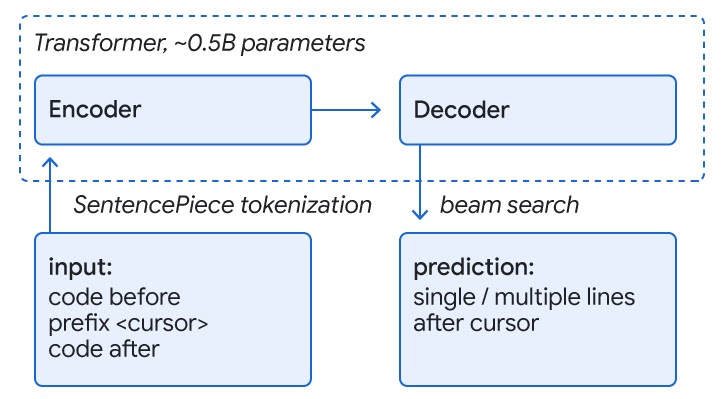

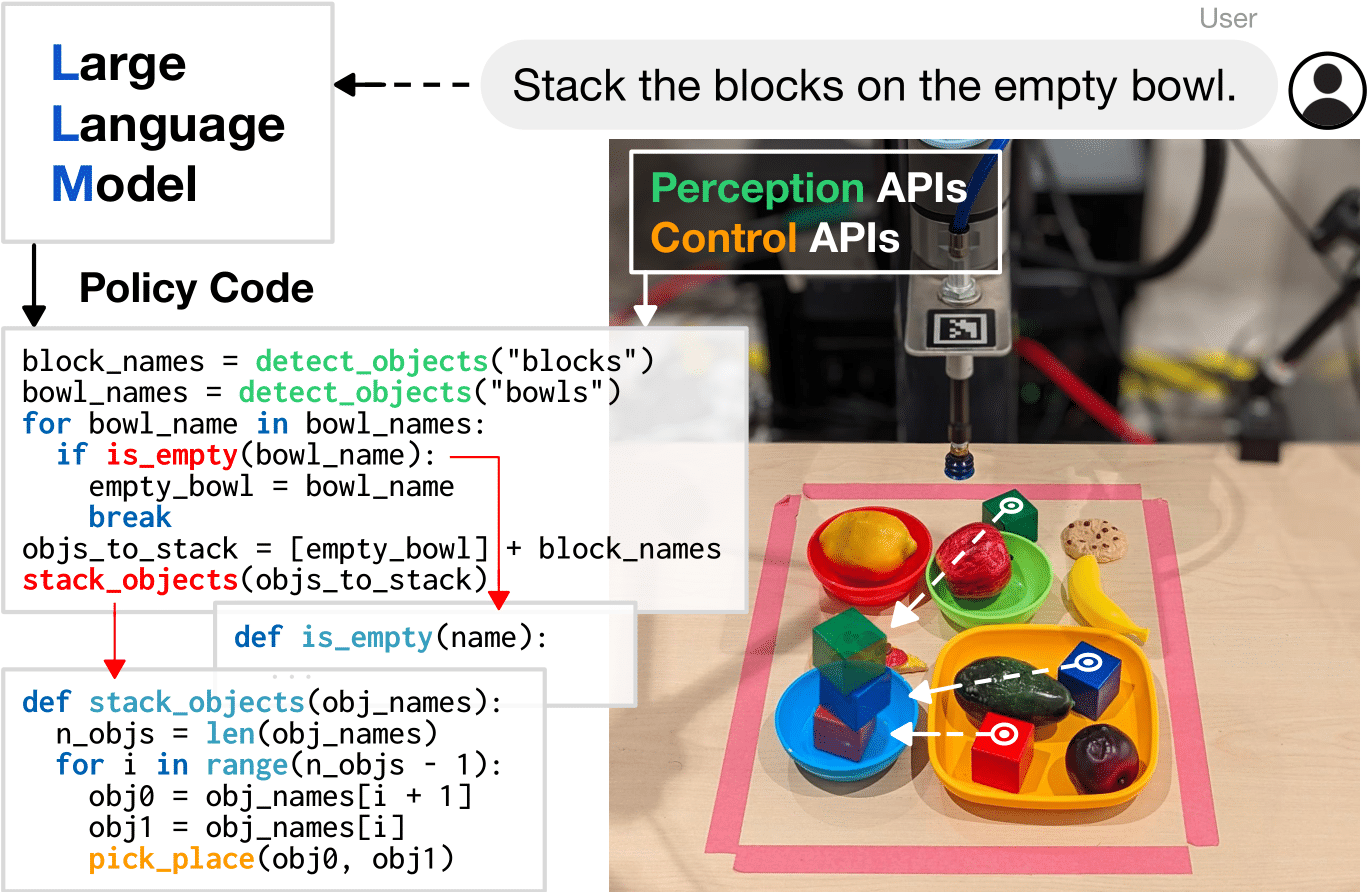

✨👩💻 Our @DeepMind Code AI team delivered a presentation this morning about the work we've done internally and externally, and the path for reinventing what it means to do software development and creative technical work in the age of generative models.

🤖 RL and generative models combined have massive potential for creators, and there has never been a more capable group to build and implement this vision.

✨ Very excited for the years to come!

🙌🏻 If you're curious about what we've been working on, a small fraction of our research and applied work can be found in the links below:

• Large sequence models for software development activities https://ai.googleblog.com/2023/05/large-sequence-models-for-software.html
• Understanding HTML with Large Language Models https://arxiv.org/abs/2210.03945
• Natural Language to Code Generation in Interactive Data Science Notebooks https://arxiv.org/abs/2212.09248
• ML-Enhanced Code Completion Improves Developer Productivity https://ai.googleblog.com/2022/07/ml-enhanced-code-completion-improves.html
• Code as Policies: Language Model Programs for Embodied Control https://code-as-policies.github.io
• Learning Performance-Improving Code Edits https://arxiv.org/abs/2302.07867
• Generative Agents: Interactive Simulacra of Human Behavior https://arxiv.org/abs/2304.03442
• AlphaDev discovers faster sorting algorithms https://deepmind.com/blog/alphadev-discovers-faster-sorting-algorithms
• Competitive programming with AlphaCode https://deepmind.com/blog/competitive-programming-with-alphacode
• Baldur: Whole-Proof Generation and Repair with Large Language Models https://arxiv.org/abs/2303.04910

...and more.


@DynamicWebPaige - 👩💻 Paige Bailey

👉🏻 If you're interested in trying out some of the smaller models (powered by PaLM 2, soon to be Gemini), you can check out:

- @GoogleColab: https://blog.google/technology/developers/google-colab-ai-coding-features/
- Duet AI for Developers (which includes security, DevOps, and data analysis features): https://cloud.google.com/blog/products/application-development/introducing-duet-ai-for-developers
- @AndroidStudio: https://developer.android.com/studio/preview/studio-bot
- @Firebase: https://developers.generativeai.google/tools/firebase_extensions
- @GoogleCloud's Codey API: https://cloud.google.com/vertex-ai/docs/generative-ai/code/code-models-overview
- Bard, and Magi: https://blog.google/technology/ai/code-with-bard/

🚀 More product integrations landing in the next several months, stay tuned!