reSee.it - Tweets Saved By @NameRedacted247

@NameRedacted247 - Name Redacted

1. 🧵Why has Meta hired more than 160 individuals from the US Intelligence Community since 2018? Is the Global Engagement Center (GEC) directly providing funding to Meta? Is this a modern-day version of Operation Mockingbird? CIA-14 FBI-26 NSA-16 DHS-29 State Dept-32 DOD-49 This is an update to my previous thread from December 2022. The primary focus here is to provide a comprehensive list of the most notable individuals, currently working at Meta, with backgrounds in intelligence.

@GreenEyesinTN - GreenEyes in TN. Never violate 1A.

@NameRedacted247 @HolaKetty @GoyWonderTM @mama_aries2 please archive 🙏

@HolaKetty - Ketty D

@GreenEyesinTN @NameRedacted247 @GoyWonderTM @mama_aries2 🫡

@AlexGnarcia - A/G

@HolaKetty @GreenEyesinTN @NameRedacted247 @GoyWonderTM @mama_aries2 should see how many 8200 alum they have hired too!!! Came across figures when researching the WIZ stuff. 8200 alum in US tech companies is NOT RARE!!!! must build an index to understand ALL intel (dom/foreign) alum who populate today's TECH COMPANIES.

@NameRedacted247 - Name Redacted

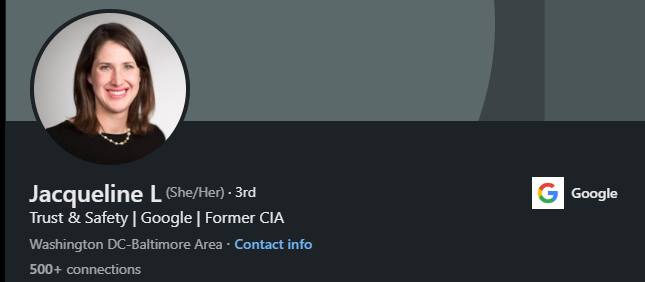

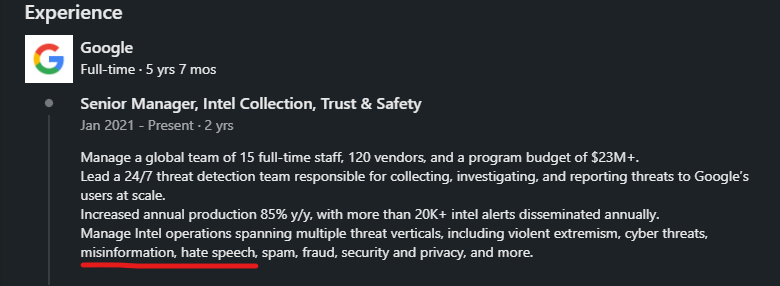

1. 🧵Google & Meta function as extensions of the US Intelligence Community. With Jacqueline Lopour, Google's Head of Trust & Safety, and Aaron Berman, Meta's Head of Elections Content/Misinformation Policy, both being career CIA officers, it underscores the CIA's substantial control over online censorship. Why is this CIA-Big Tech revolving door, where career CIA officers wield power to censor & decide what misinformation is, purposefully suppressed in the broader conversation about censorship? Why are career CIA officers like Jacqueline Lopour & Nick Rossmann, who both have a history of spreading misinformation & promoting the RussiaGate conspiracy theory, now in senior roles in Trust & Safety at Google, deciding what is misinformation & overseeing content moderation? The cumulative number of former Intelligence Community personnel hired by Meta & Google since 2018 is staggering. Before 2018, there were only a handful. Here are the combined hires by both companies: CIA-36 FBI-68 NSA-44 DHS/CISA-68 State Dept-86 DOD-121

@NameRedacted247 - Name Redacted

2. Why would Google specifically choose these six senior executives to attend an @ISF_OSAC event in DC? Everyone in this picture, alongside former CIA Director Robert Gates, is a current senior executive at Google & a former career CIA officer, except for the attorney from Perkins Coie (2nd from the left). https://securityfdn.org/events-gallery/#gallery-972

@NameRedacted247 - Name Redacted

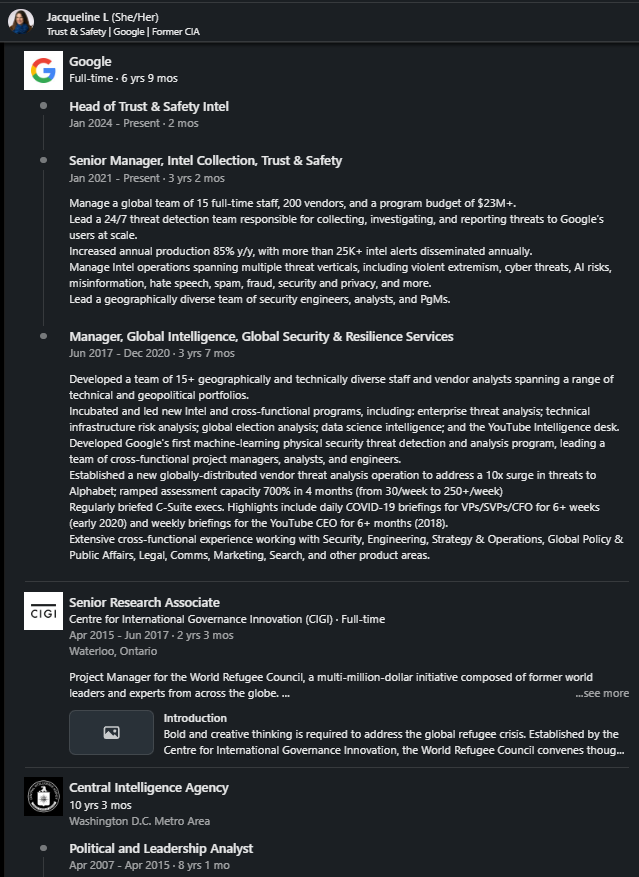

3. Jacqueline Lopour spent 10 years at the CIA before joining Google in 2017. She is currently Head of Trust & Safety, where she not only determines what constitutes misinformation but also wields considerable power in content moderation on Search & YouTube. In this interview, Lopour is promoting the RussiaGate conspiracy theory. I wonder if she believes her own propaganda.

@NameRedacted247 - Name Redacted

4. Jacqueline Lopour, a career CIA officer, played a significant role in developing various intelligence programs at Google & YouTube:
* Manages intel operations for violent extremism, misinformation, hate speech, etc.
* Led development of intelligence programs for global election analysis.
* Developed the “YouTube Intelligence Desk.”
* Developed Google’s first machine-learning threat detection & analysis program.
* Provided daily COVID-19 briefings to senior leadership at Google & YouTube CEO

LinkedIn - https://archive.ph/IgF1w

@NameRedacted247 - Name Redacted

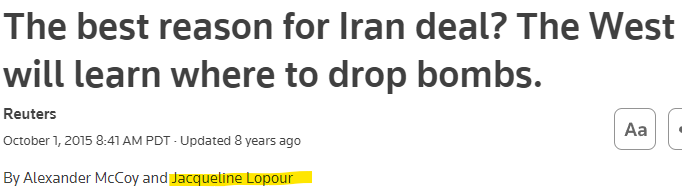

5. In 2015, Lopour authored a rather bizarre article titled: “The best reason for Iran deal? The West will learn where to drop bombs.” https://www.reuters.com/article/idUSL1N1211IF/

@NameRedacted247 - Name Redacted

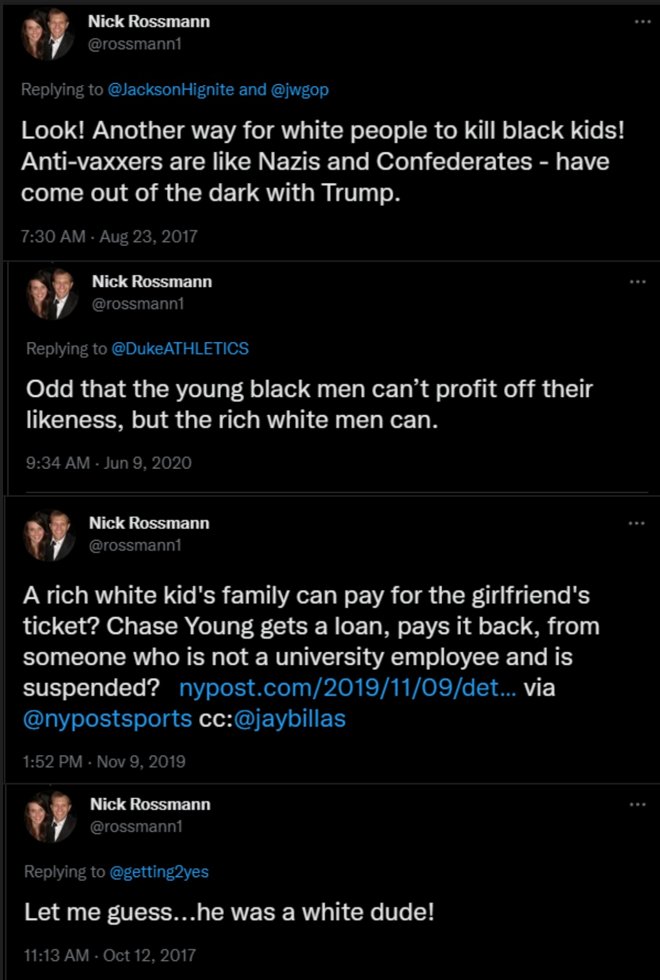

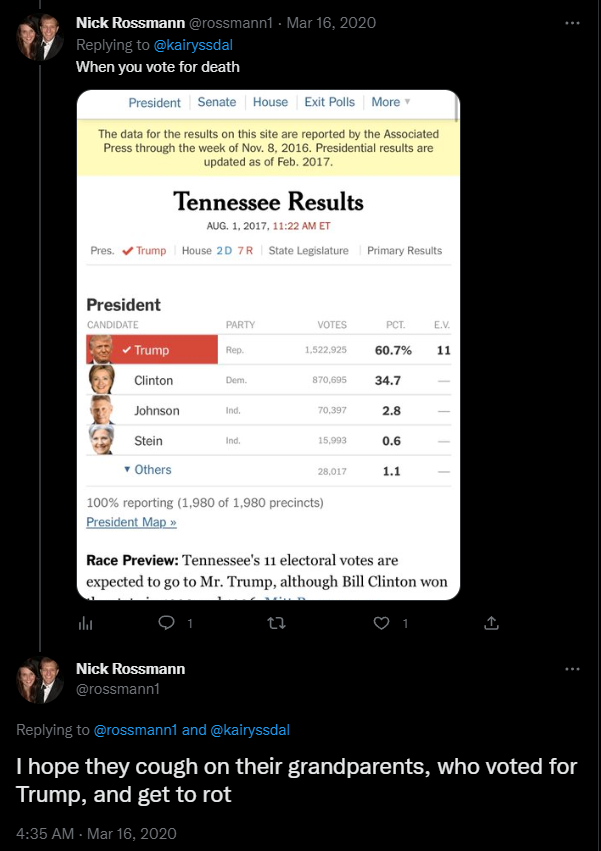

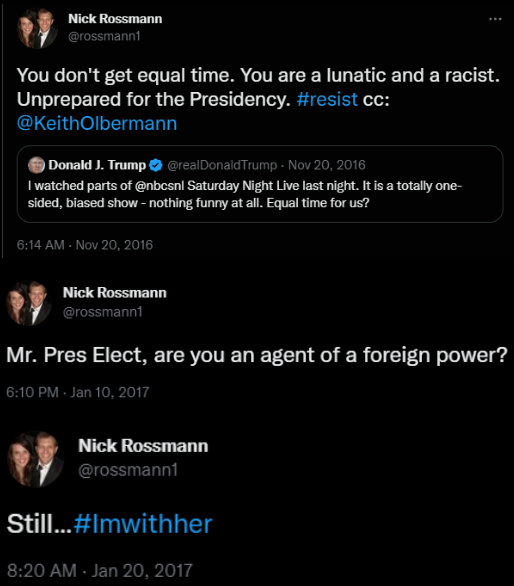

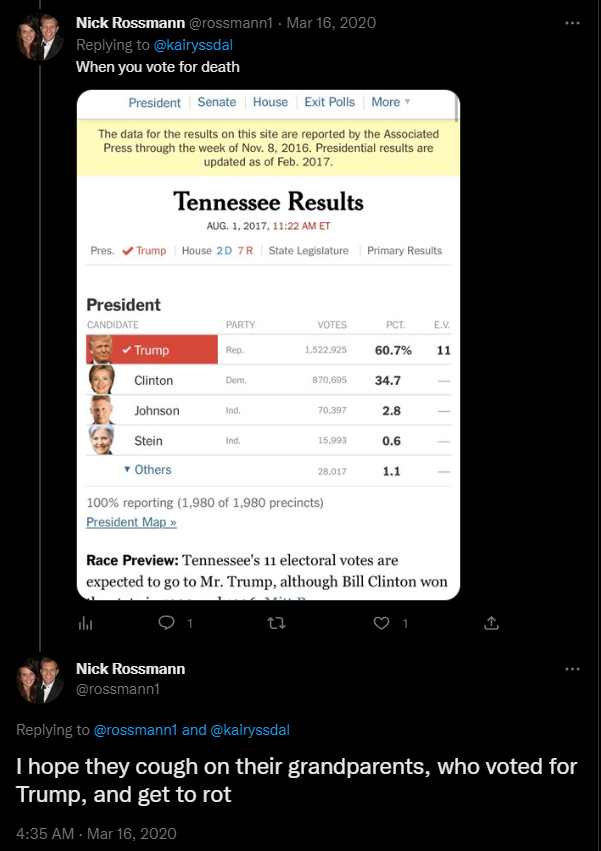

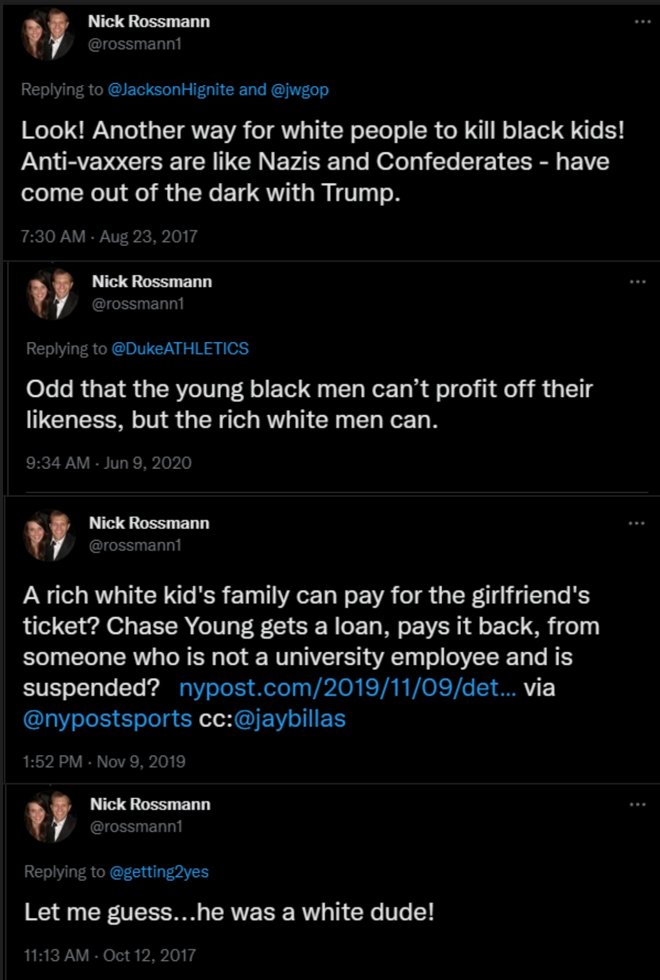

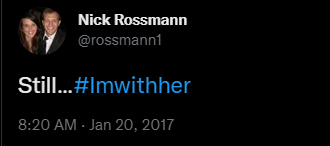

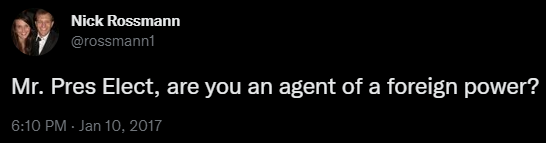

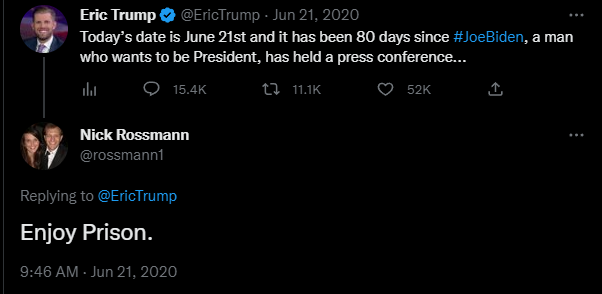

6. Nick Rossmann spent over 5 years at the CIA before joining Google in 2022 as Senior Manager of Trust & Safety. His activity on Twitter/X is troubling, especially considering his current position in content moderation. Why does Nick Rossmann have a problem with white people? Here are some examples of Rossmann’s unhinged behavior on Twitter/X (all archived): *Negative tweets about white people: https://archive.vn/ZdKeT https://archive.vn/PYgWh https://archive.vn/rOOpB *Hoping Trump voters cough on their grandparents (giving them COVID) & “get to rot”- https://archive.is/rppqw *Asking Trump if he is an agent of a foreign power - https://archive.vn/xi7t8 *Calling Trump “a lunatic & a racist”, tagging Keith Olbermann & using the hashtag “Resist” - https://archive.vn/Pk5Kh *Calling anti-vaxxers Nazis & Confederates - https://archive.vn/YWMDD

@NameRedacted247 - Name Redacted

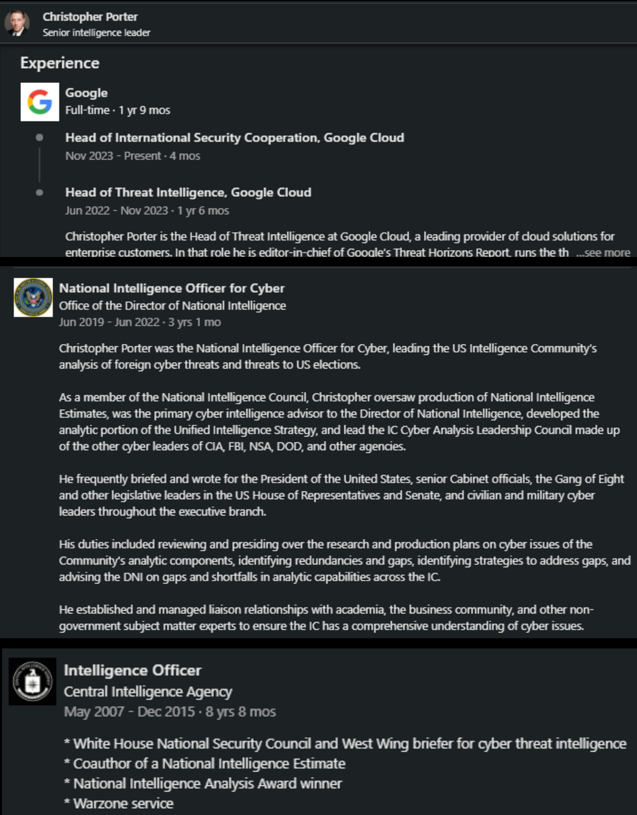

7. Christopher Porter spent most of his professional career in the Intelligence Community. After 9 years at the CIA, he joined ODNI, where he was Head of the IC Cyber Analysis Council, leading a team from the CIA, FBI, NSA & DOD on US elections. While at the ODNI, he regularly briefed President Biden, so it’s only natural that in June 2022 he joined Google as Head of Threat Intelligence. Porter is also a member of the Atlantic Council. His LinkedIn bio states that he likes to talk about Russia & election security. LinkedIn - https://archive.is/pFOI2

@NameRedacted247 - Name Redacted

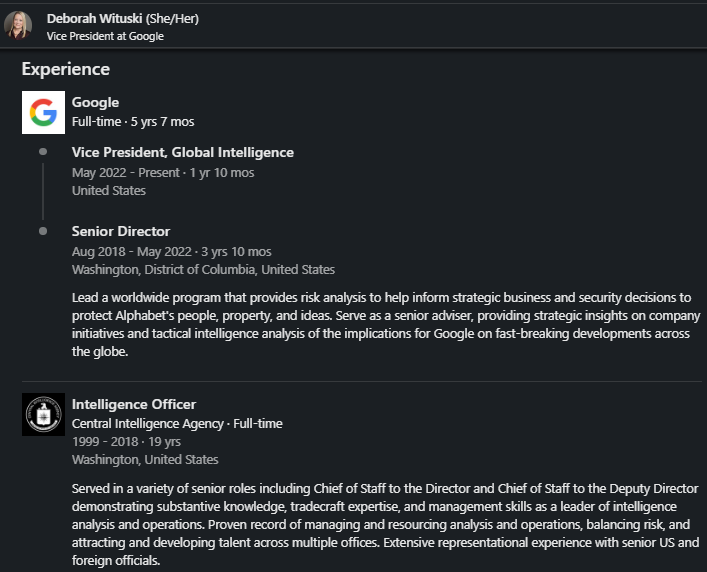

8. Deborah Wituski joined Google in 2018 as Senior Director Global Intelligence. Her only prior work experience was 19 years at the CIA, where she was Chief of Staff to the Director. Wituski is a member of Council on Foreign Relations.

@NameRedacted247 - Name Redacted

9. Now why would Deborah’s intelligence desk at Google be so concerned about Putin’s personal life or the inner workings of the Kremlin? Full video for reference - https://www.youtube.com/watch?v=1tW2tPdwI9E

@NameRedacted247 - Name Redacted

10. Katherine Tobin joined Google in 2021. Her career path is like the others listed in this thread: after 6 years at Booz Allen Hamilton, she spent 4 years at the ODNI, followed by 4 years at the CIA, & then returned to the ODNI for another 3 years. With over 10 years of experience in the Intelligence Community, Google was the obvious choice for her. On her LinkedIn bio, she states that her favorite problems to solve are promoting DEI

@NameRedacted247 - Name Redacted

11. Katherine wrote a blog post on LinkedIn, sharing her transition from the CIA to the private sector titled “My New Mission: From Spying to Startups.” For anyone interested, here is her personal blog- https://kttobin.net/

@NameRedacted247 - Name Redacted

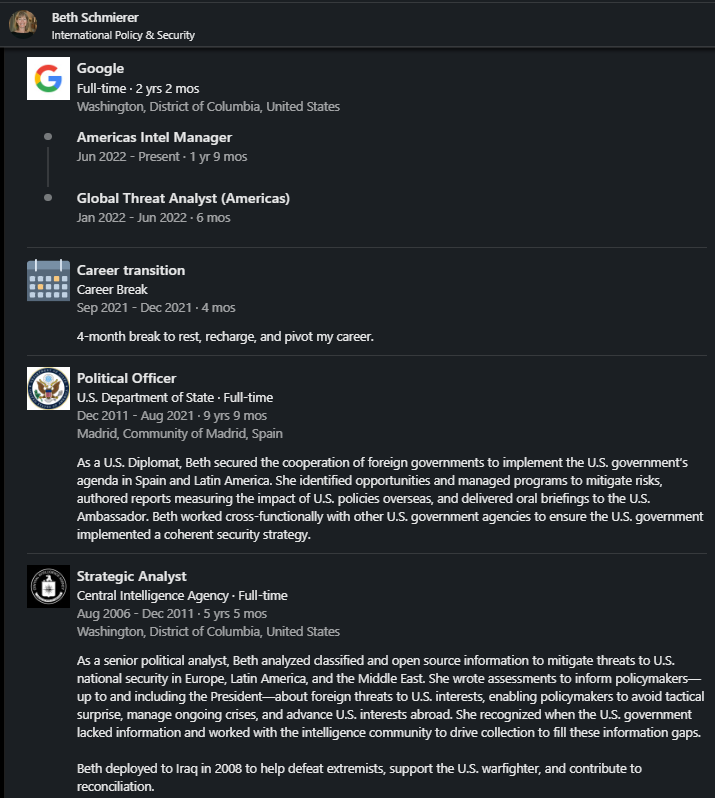

12. Beth Schmierer joined Google in 2022 as a Global Threat Analyst and Intel Manager. Her only prior work experience was five years at the CIA. Additionally, she spent nine years in Madrid working for the State Department, where she claims to have been tasked with “implementing the US government’s agenda in Spain & Latin America”

@NameRedacted247 - Name Redacted

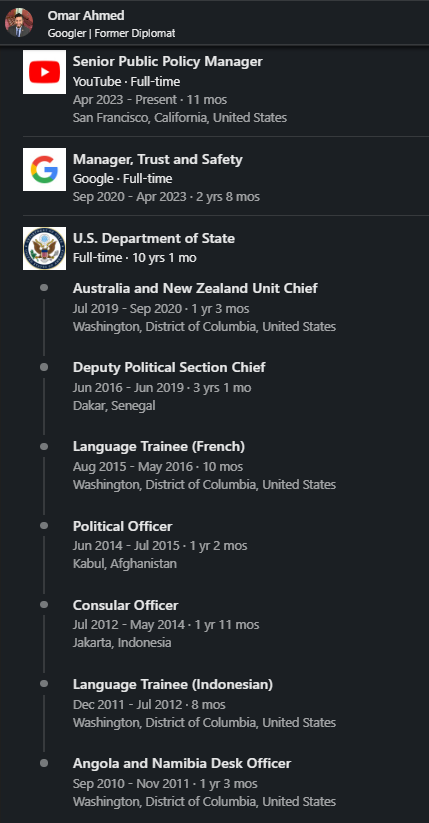

13. Omar Ahmed joined Google in 2020 as Manager of Trust & Safety at Google/YouTube. His only prior work experience is 10 years at the State Department. I suppose his experience in Indonesia, Afghanistan & Senegal qualifies him to be a content moderator.

@NameRedacted247 - Name Redacted

14. Jamie Washington joined Google in 2018 as Director of Threat Intelligence. Her only experience prior to joining Google is 2 years at the FBI and 13 years at the CIA (2 years in Iraq).

@NameRedacted247 - Name Redacted

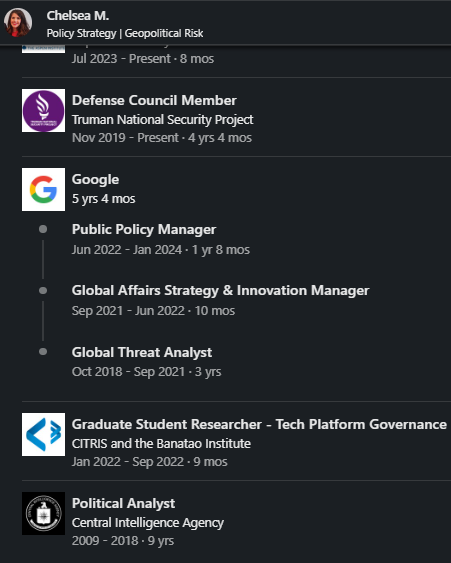

15. Chelsea Magnant joined Google in 2018 as Public Policy Manager. Her only prior work experience was at the CIA for 9 years. She’s also an instructor at Aspen Institute

@NameRedacted247 - Name Redacted

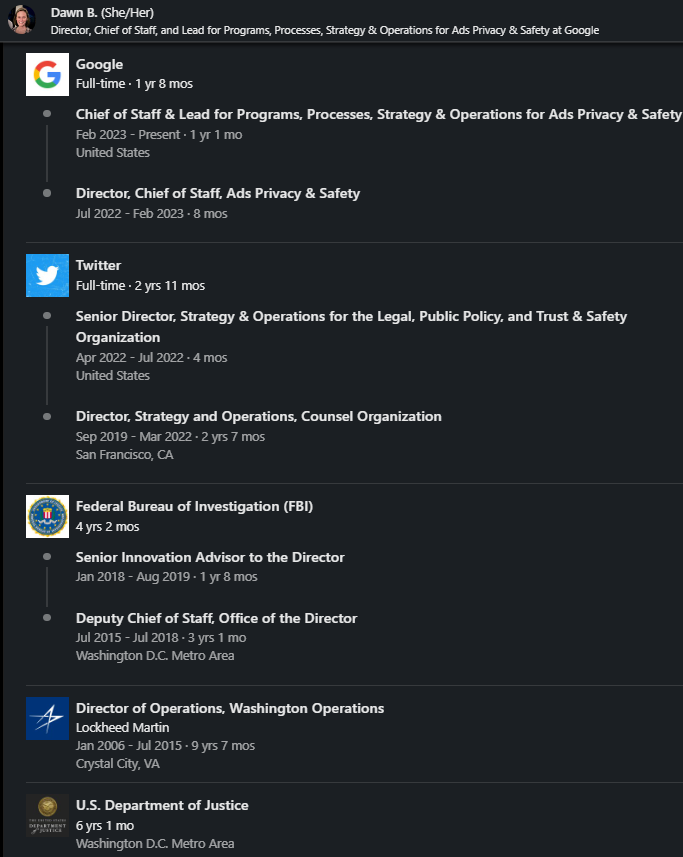

16. Dawn Burton joined Google’s Trust & Safety team in 2022. She was previously at Twitter Trust & Safety but was fired by @elonmusk. Prior to this, she spent 6 years at DOJ, then 4 years at FBI as a Senior Advisor to James Comey. After a career at DOJ & FBI, working for James Comey, it’s totally normal that she would transition to Trust & Safety departments at tech platforms, right?

@NameRedacted247 - Name Redacted

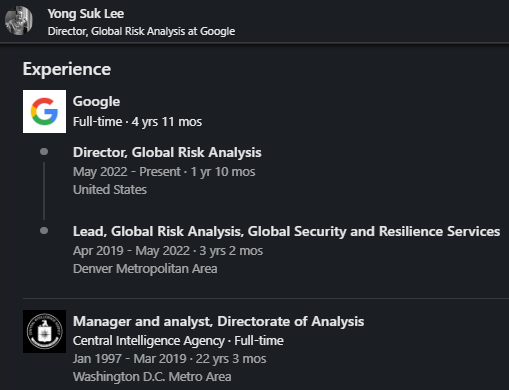

17. Yong Suk Lee joined Google in 2019 as Director Global Risk Analysis. His only prior work history is 22 years at the CIA where he was the Deputy Assistant Director of Korea Mission Center. He is also a member of Council on Foreign Relations

@NameRedacted247 - Name Redacted

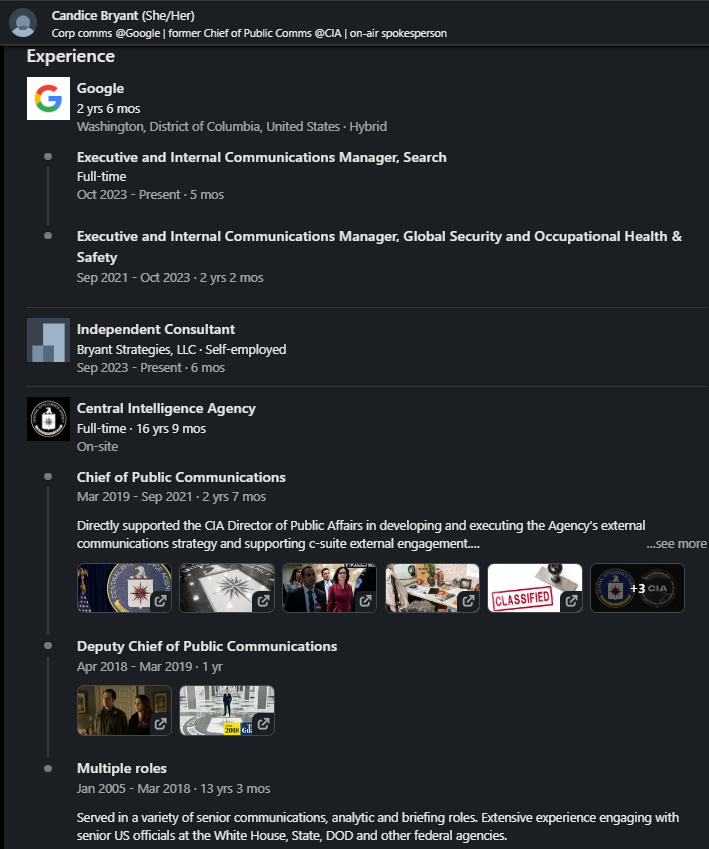

18. Candice Bryant joined Google in 2021 as Executive & Internal Comms Manager. Her only other work experience was 17 years at the CIA. She led the CIA's social media team with the goal of boosting the agency's public image. https://www.politico.com/news/2021/09/08/cia-least-covert-mission-510043

@NameRedacted247 - Name Redacted

19. I could list over 100 more examples of individuals whose sole work history is within the Intelligence Community or career State Department diplomats. Many of these individuals hold positions as content moderators and policy managers. Most of them joined Google/YouTube after 2018. Is it merely a coincidence that censorship has increased aggressively since then?

@NameRedacted247 - Name Redacted

20. If you are a “journalist” who covers the censorship issue but chooses to ignore the revolving door, you lack integrity. If you are a notable figure who’s been censored on Facebook, Instagram, or YouTube, it's crucial to start questioning why career CIA officers are employed by these companies as content moderators.

@NameRedacted247 - Name Redacted

1. #DisinfoGate PART 3 SHELBY PIERSON-ODNI ELECTION CZAR 10/15/2020- One day after the NY Post Hunter Biden Laptop story, Pierson says-“Russians leak information…whether it denigrates Biden and/or boosts Trump” Which Russian “leak operation” is she referring to? 🧵 @elonmusk

@NameRedacted247 - Name Redacted

2. 10/19/2020- Five days after the NY Post story, Shelby Pierson’s boss, former DNI @JohnRatcliffe states emphatically: “Hunter Biden’s Laptop is NOT part of some Russian Disinformation campaign” Again, which Russian leak operation was Shelby Pierson talking about?

@NameRedacted247 - Name Redacted

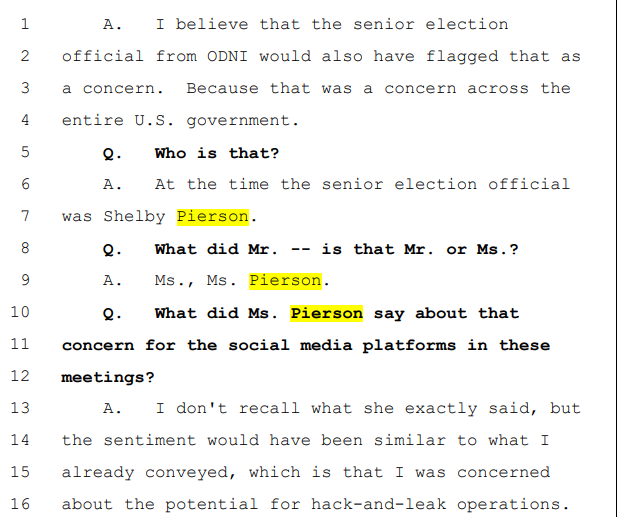

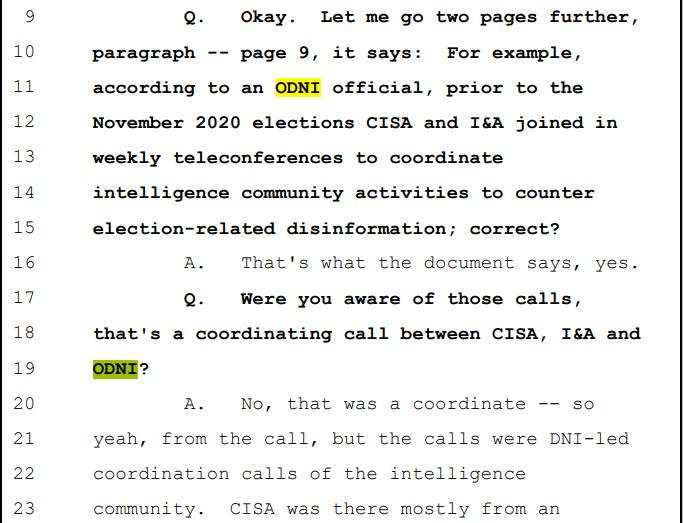

3. Elvis Chan (FBI) was deposed in the Missouri v. Biden case. He ID'd Pierson as 1 of 4 Govt. officials that warned Social Media about concerns of a Russian “hack-and-leak” operation ahead of the 2020 election (pg. 222). @AGAndrewBailey - it wasn't just FBI

@NameRedacted247 - Name Redacted

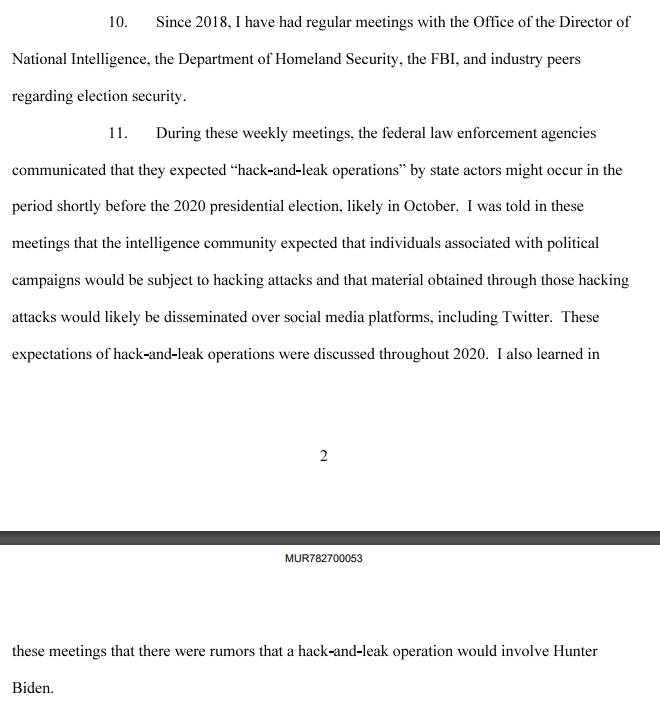

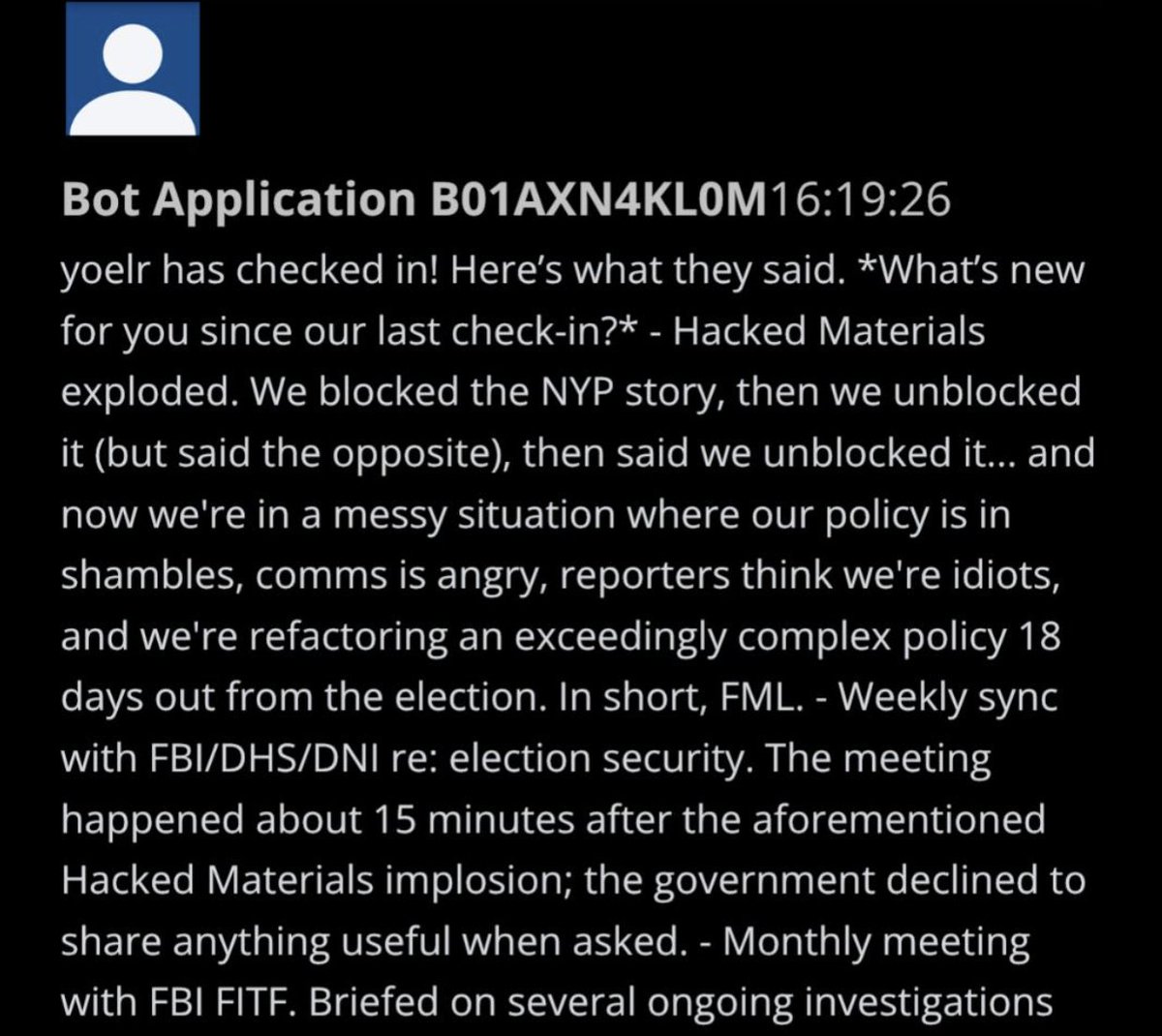

4. Yoel Roth states, in a sworn declaration, that he had weekly meetings with ODNI, DHS & FBI. Roth states he was warned of expected “hack-and-leak” operations by state actors that would involve Hunter Biden https://eqs.fec.gov/eqsdocsMUR/7827_08.pdf

@NameRedacted247 - Name Redacted

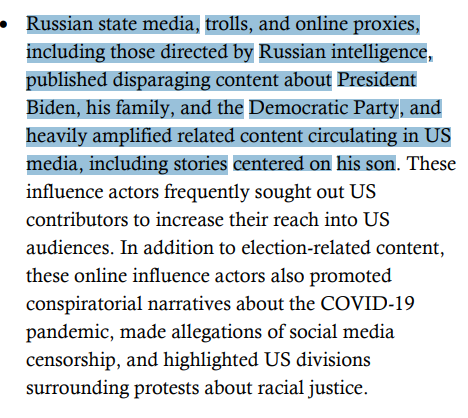

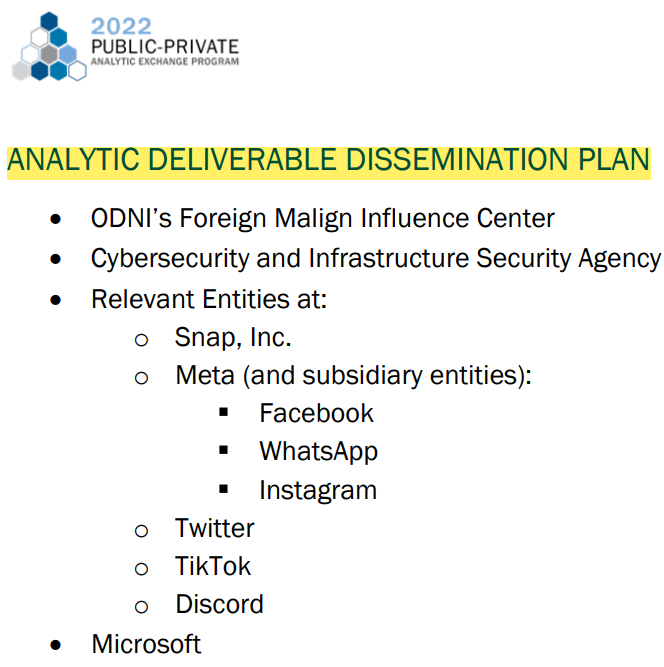

5. Buried in the unclassified IC Assessment of Foreign Threats to the 2020 Elections, there is one section that stands out. Page 9 - the report blames Russia for publishing & amplifying disparaging content about Biden, his family & specifically “his son” https://www.dni.gov/files/ODNI/documents/assessments/ICA-declass-16MAR21.pdf

@NameRedacted247 - Name Redacted

6. @JohnRatcliffe said NO ONE from ODNI was authorized to discuss “content moderation” w/ Social Media firms Yet Shelby Pierson said “sharing information from the IC w/ Social Media platforms to consider, relative to their terms of service, how to remediate inauthentic content”

@NameRedacted247 - Name Redacted

7. DISTURBING- Pierson says domestic censorship, on social media, is justified if IC determines it’s “foreign sponsored” “if we’re aware that this information is foreign sponsored…we want to do everything we can to MANAGE this information” LISTEN: https://www.npr.org/2020/01/22/798186093/election-security-boss-threats-to-2020-are-now-broader-more-diverse

@NameRedacted247 - Name Redacted

8. PARTNERSHIPS WITH SOCIAL MEDIA & TECH FIRMS 1/14/2020- Pierson speaks at EAC Summit. She confirms that the Intelligence Community, Social Media firms & Tech firms are in a “partnership” In my first #DisinfoGate thread, we heard @BillEvanina also describe this partnership

@NameRedacted247 - Name Redacted

9. Shelby Pierson states: “we need an entire whole of society…working together to understand threats that come w/ election security & countering MALIGN INFLUENCE” She includes “Social Media, Tech firms, the Press, Academia, Special Interest Groups & NGO’s”

@NameRedacted247 - Name Redacted

10. ODNI's Pierson & @BillEvanina held the highest positions in the IC working on ‘election security’ Pierson & @BillEvanina have both, unambiguously, stated that they worked w/ Social Media firms to “take down” or “remediate content”

@NameRedacted247 - Name Redacted

11. @JohnRatcliffe said NO ONE from ODNI was authorized to discuss “content moderation” w/ Social Media firms Both Pierson & Evanina publicly admitted discussing content moderation w/ Social Media firms @Jim_Jordan @Weaponization @JudiciaryGOP should call ALL THREE to testify

@NameRedacted247 - Name Redacted

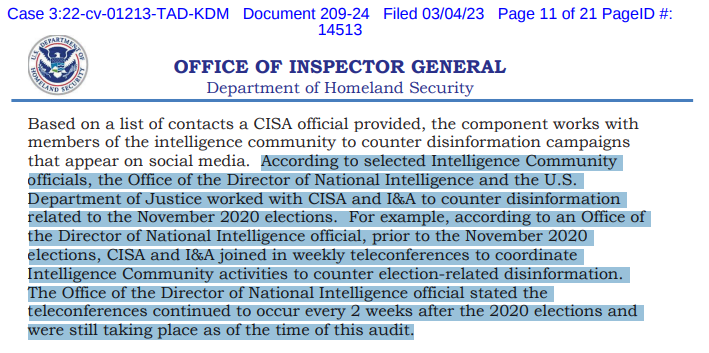

12. Brian Scully confirms, in his deposition, that ODNI officials held weekly teleconferences w/ CISA to discuss ways to “counter disinformation related to the November 2020 Elections” Exhibit 31 is OIG report on DHS that confirms this As noted earlier-Elvis Chan ID’d Pierson

@NameRedacted247 - Name Redacted

13. Shelby Pierson was appointed by former DNI Dan Coats on July 19, 2019. She was named Chair of the Election Executive & Leadership Board. She was the top election security official in the Intelligence Community https://www.dni.gov/index.php/newsroom/press-releases/item/2023-director-of-national-intelligence-daniel-r-coats-establishes-intelligence-community-election-threats-executive

@NameRedacted247 - Name Redacted

14. Was Shelby Pierson a partisan? Did her personal bias affect the types of intelligence she shared with Social Media firms? Did she use that intelligence to advise what content she wanted the Social Media firms to ‘remediate?’

@NameRedacted247 - Name Redacted

15. 2/5/2020- Trump acquitted in first impeachment Eight days later, Shelby Pierson briefed the HPSCI, where she told the committee that “Russia was working to get Trump re-elected.” https://apnews.com/article/campaigns-donald-trump-ap-top-news-elections-politics-4912baca0c4cbc6cb7a3580f4f3c9b96

@NameRedacted247 - Name Redacted

16. Trump was enraged over this, fired Acting DNI Maguire and replaced him with @RichardGrenell. Yet Pierson remained in her position. Why?

@NameRedacted247 - Name Redacted

17. 3/10/2020- In a classified briefing to Congress, @BillEvanina walks back claims made by Shelby Pierson a month prior. Evanina told Congress they had “nothing to support” the notion that Putin favored one candidate or another. https://www.cbsnews.com/news/administration-officials-brief-members-of-congress-on-election-security/

@NameRedacted247 - Name Redacted

18. While Shelby Pierson has now left ODNI, the effort to counter “disinformation” continues Pierson’s old position as Elections Threats Executive is part of a new center at ODNI: FOREIGN MALIGN INFLUENCE CENTER Its mission is identical to DHS Disinformation Governance Board

@NameRedacted247 - Name Redacted

19. Jeffrey Wichman, former 30-year CIA officer, is the current acting Director of the Foreign Malign Influence Center. #FMIC’s mission is to counter “malign influence” that seeks to influence public opinion & behavior. Wichman is the de facto leader of the “Thought Police”

@NameRedacted247 - Name Redacted

20. #DisinfoGate- A real, documented, & vast conspiratorial effort led by Shelby Pierson & Bill Evanina at ODNI, along with other Government officials. It includes virtually the entire Intel Community in partnership w/ Social Media firms, Tech firms, MSM, Academia, NGO’s, etc.

@NameRedacted247 - Name Redacted

21. #DisinfoGate- Using a false narrative that Russia interfered in the 2016 election, CISA was created as a liaison between IC & Social Media. The goal was to censor free speech (under the false guise of foreign disinfo) & socially engineer the opinions of American voters

@NameRedacted247 - Name Redacted

22. #DisinfoGate- After achieving their goal of getting Trump out of office, this Deep State/Social Media partnership is now ostensibly being used to censor & socially engineer our views on COVID, vaccines, climate, race relations, Russia/Ukraine War, etc. End

@NameRedacted247 - Name Redacted

24. #DisinfoGate Part 2- Foreign Malign Influence Center - https://www.dni.gov/index.php/nctc-who-we-are/organization/340-about/organization/foreign-malign-influence-center

@NameRedacted247 - Name Redacted

25. Sources: Tweet 1 - https://www.youtube.com/watch?v=49MWq6cqPXU Tweet 2 - https://www.youtube.com/watch?v=woGyMvqLV5o&t=612s Tweet 6 - https://www.youtube.com/watch?v=1EV9BSMfoqY https://www.foxnews.com/video/6317022404112 Tweets 8 & 9 - https://www.youtube.com/watch?v=yvMU67hsqM0&t=256s

@NameRedacted247 - Name Redacted

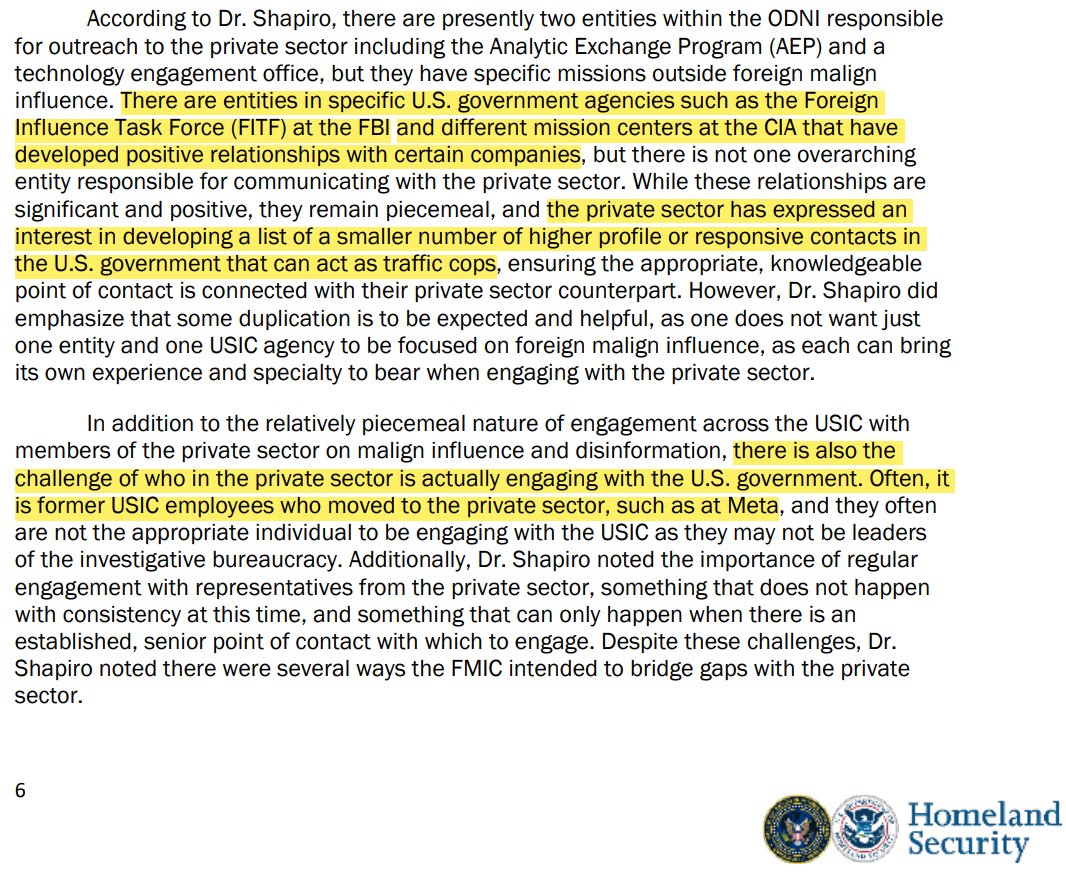

3. The joint DHS-ODNI report emphasizes Public-Private Partnerships to combat Foreign Malign Influence and outlines recommended roles for FMIC:
* Directly engage with social media companies to address disinformation.
* Serve as a liaison between the Intelligence Community (IC) and Social Media platforms on disinformation issues.

@NameRedacted247 - Name Redacted

2. In February 2023, I flagged concerns about the ODNI's Foreign Malign Influence Center (FMIC). FMIC is a functional clone of the now-defunct Disinformation Governance Board & was launched in September 2022 under Director Jeffrey Wichman, who is a 30-year CIA veteran. https://www.dni.gov/index.php/fmic-home

@NameRedacted247 - Name Redacted

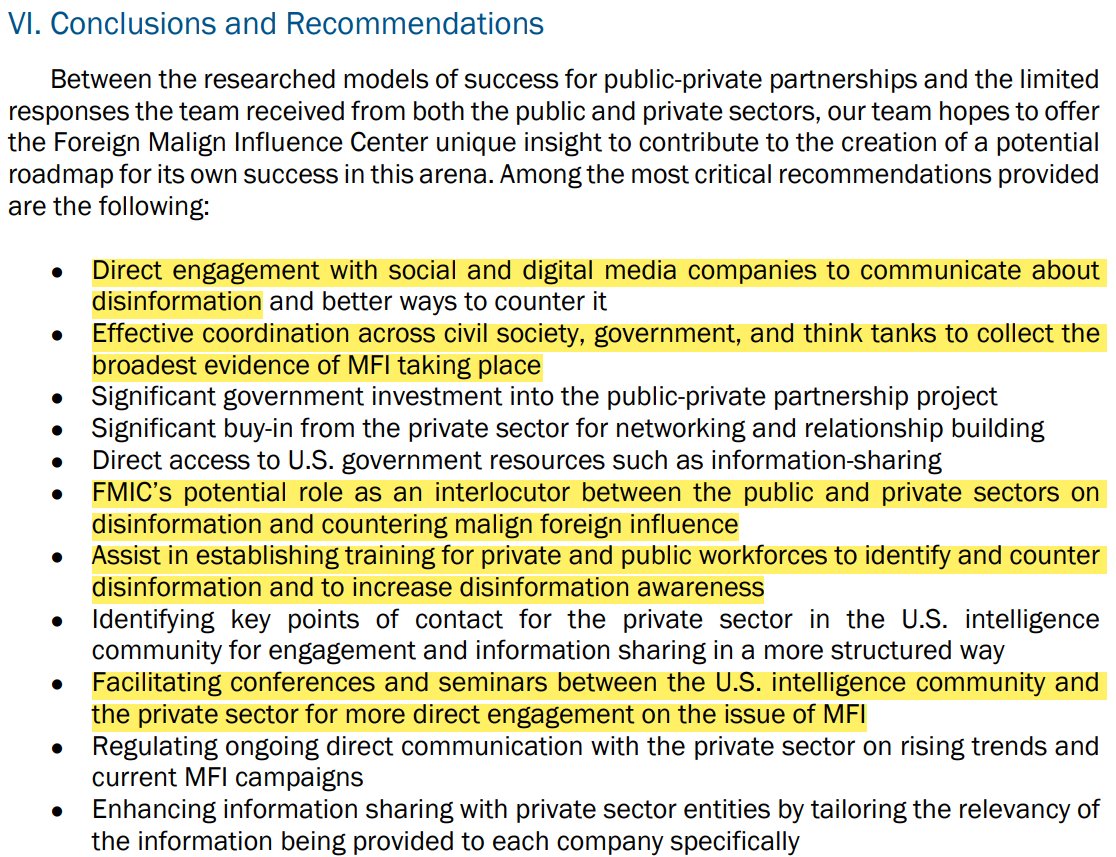

4. At the report's conclusion, the 'Analytic Deliverable Dissemination Plan' is highlighted: ODNI & CISA jointly distribute content labeled as 'disinformation' or 'foreign malign influence' to these platforms: Meta (Facebook, Instagram, WhatsApp), Twitter/X, TikTok, Snapchat, & Discord

@NameRedacted247 - Name Redacted

5. I'll address common arguments about FMIC: First - "They only focus on foreign disinfo!" Wrong. Anything contradicting the U.S. establishment narrative is flagged as 'Russian disinformation.' The ODNI's Assessment on Foreign Influence in the 2020 Election cites examples like:
a. Criticizing President Biden and his son
b. Spreading COVID-19 conspiracy theories
c. Claims of social media censorship
d. And let's not forget the 51 'intelligence experts' who said the Hunter Biden laptop had "all the classic earmarks of a Russian information operation."

@NameRedacted247 - Name Redacted

6. I was told the relationship between FMIC & CISA is 'hypothetical malfeasance.' Wrong. A deposition in the Missouri v. Biden case revealed that Shelby Pierson, ODNI's election threat executive (a role that now falls under FMIC), had weekly meetings with CISA leading up to the 2020 election. She also directly advised social media platforms on removing 'inauthentic content according to their terms of service.' Don't take my word for it; she admits this herself.

@NameRedacted247 - Name Redacted

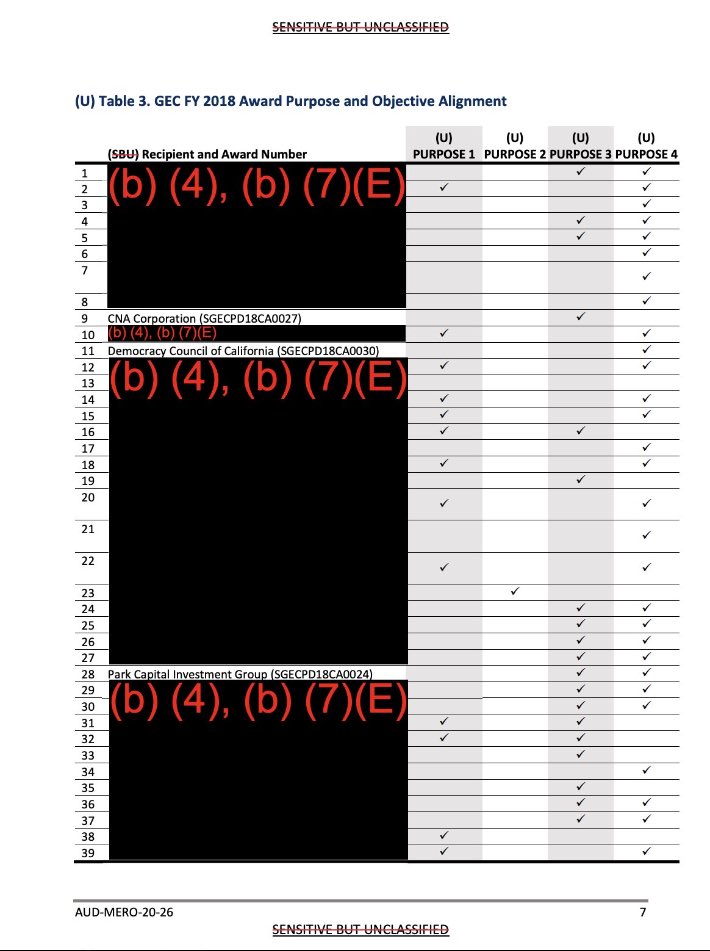

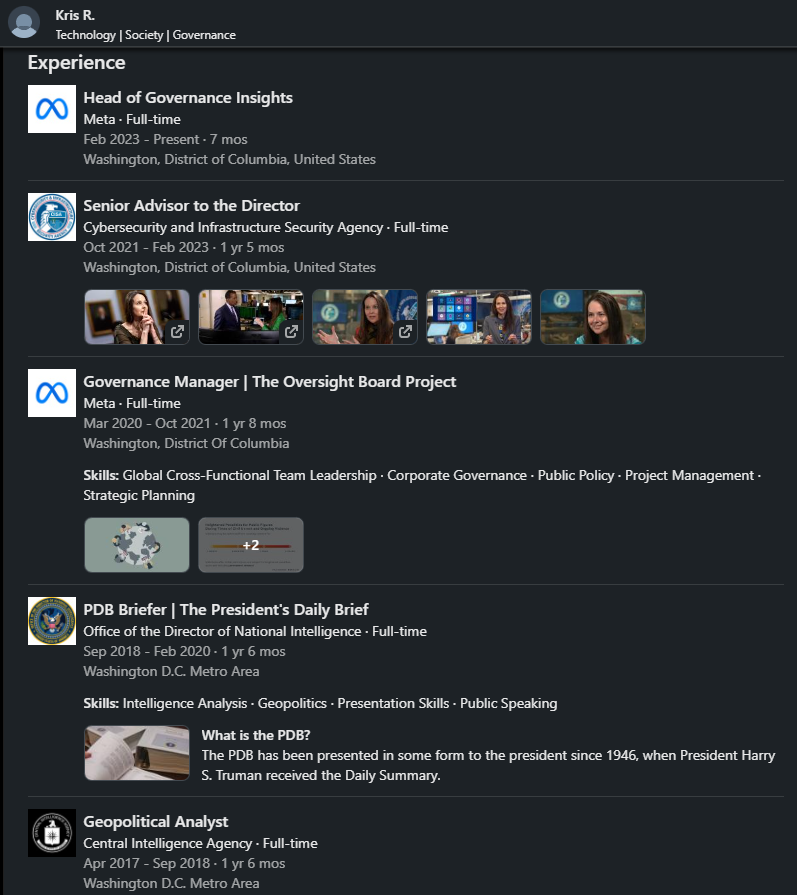

4. Kristopher Rose spent a decade between the CIA & ODNI before joining Meta in March 2020. During his time at ODNI, Rose collaborated with CIA's Aaron Berman in composing the Presidential Daily Brief (PDB) for President Trump. After leaving the ODNI, Rose became a member of the Oversight Board of Meta in 2020, where he was among the 20 individuals involved in the decision to suspend @realDonaldTrump from all Meta platforms. Subsequently, after executing the suspension, Rose transitioned to CISA, serving as Senior Advisor to Jen Easterly. After one and a half years at CISA, he returned to Meta, now assuming the position of "Head of Governance Insights." For those who may have lost track, here's the Kristopher Rose timeline: CIA -> ODNI -> CIA -> ODNI -> META -> CISA -> META. Are you beginning to see the bigger picture? Two CIA operatives who contributed to writing PDBs for Trump have joined Meta: Berman, in charge of censorship ahead of the 2020 Election, and Rose, who played a role in suspending Trump from the platform. http://linkedin.com/in/kristopherrose/…

@NameRedacted247 - Name Redacted

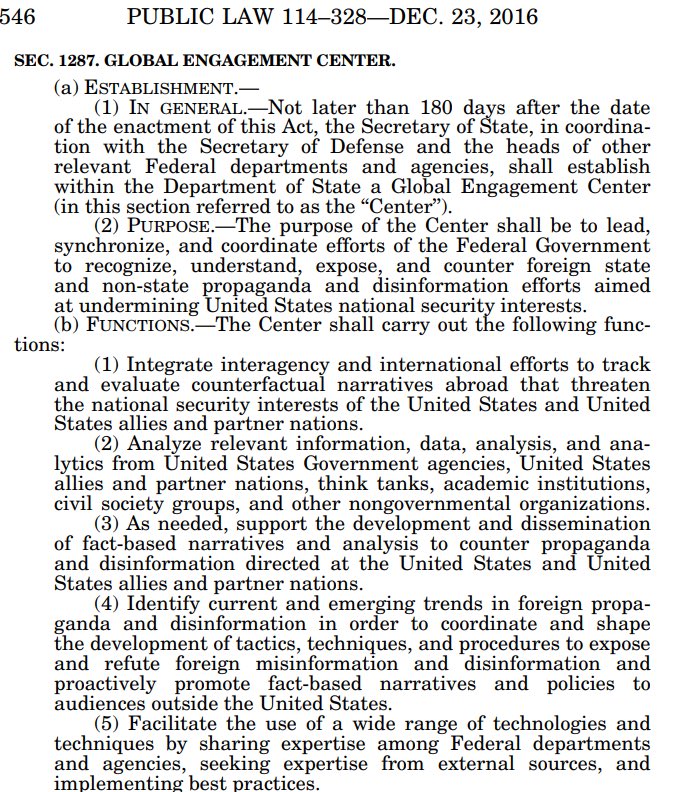

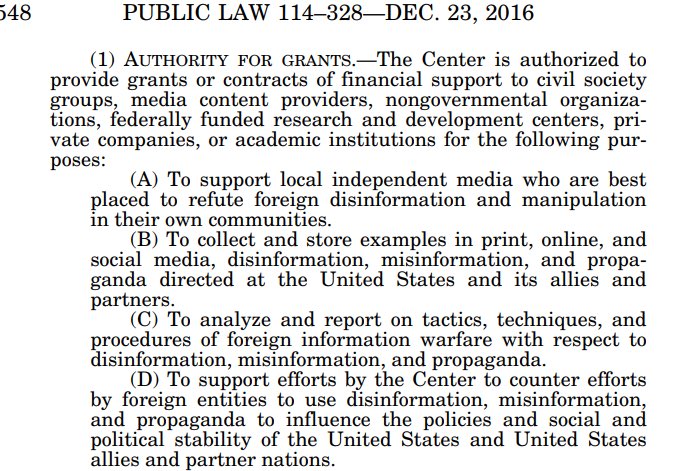

2. GEC was established in the 2017 NDAA. Its primary objective was not only to identify misinformation and disinformation targeted at the US and its allies but also to develop and distribute "fact-based" narratives to counter propaganda. GEC was granted the authority to provide awards to various entities, including “Private Companies” and “Media Content Providers.” Presently, @America1stLegal is pursuing a lawsuit via FOIA to uncover the recipients of awards from GEC. We will be revisiting this once the information is unredacted.

@NameRedacted247 - Name Redacted

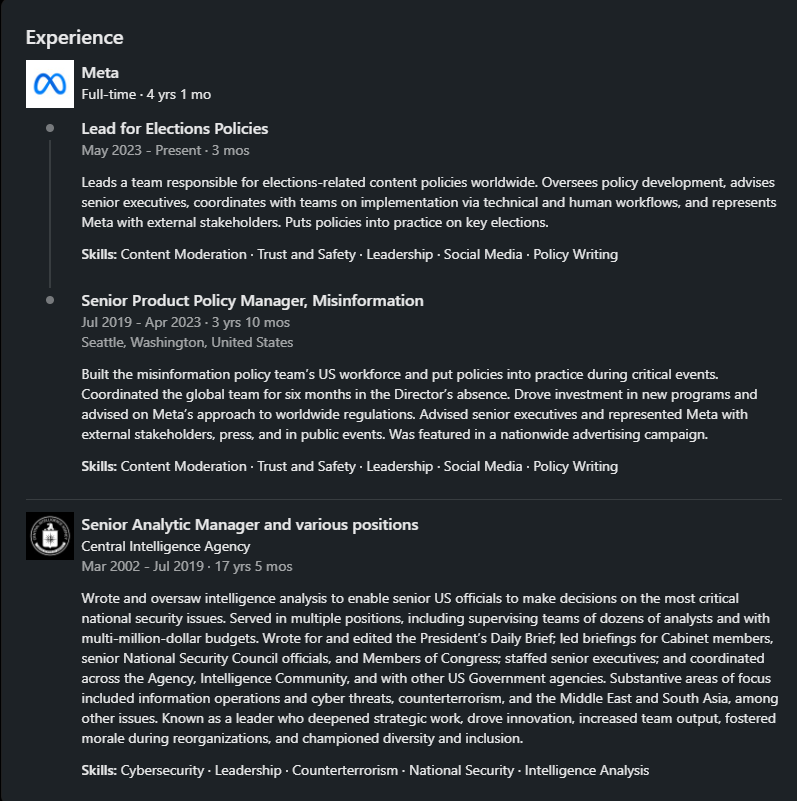

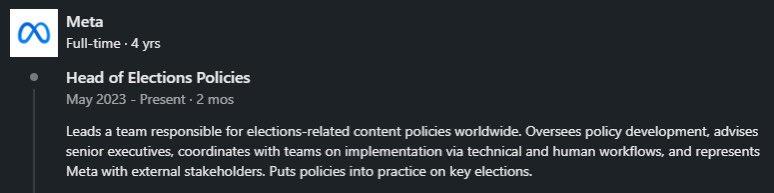

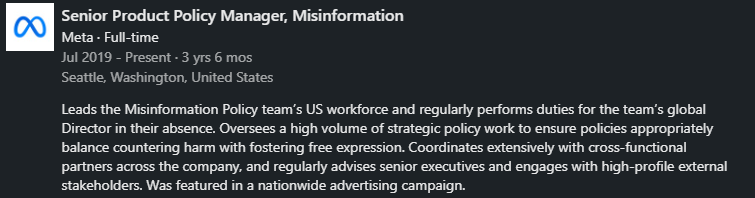

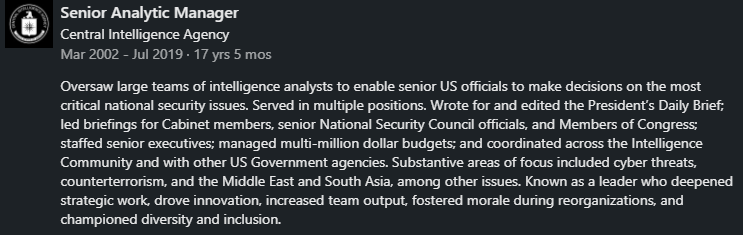

3. Aaron Berman possesses an extensive background at the CIA, spanning two decades, before joining Meta in July 2019. He built the Misinformation Policy team & wrote content policies, overseeing censorship during the 2020 US Election & COVID-19. Notably, he collaborated with fact-checkers to censor "misinformation" in US & foreign elections. As of May 2023, Berman holds the position of Lead for Elections Policy content. In his prior role at the CIA, he was responsible for writing the Presidential Daily Brief (PDB) for President Trump. http://linkedin.com/in/aarondberman/… @AaronDBerman is eager to share the writing skills he acquired during his tenure at the CIA through his Substack platform. https://theblueowl.substack.com For a comprehensive list of the censorship efforts overseen by Berman, I encourage you to read the attached thread:

@NameRedacted247 - Name Redacted

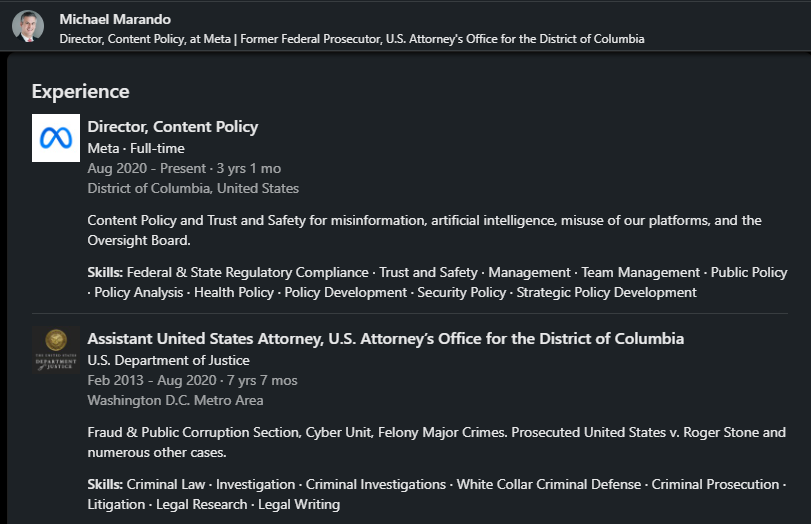

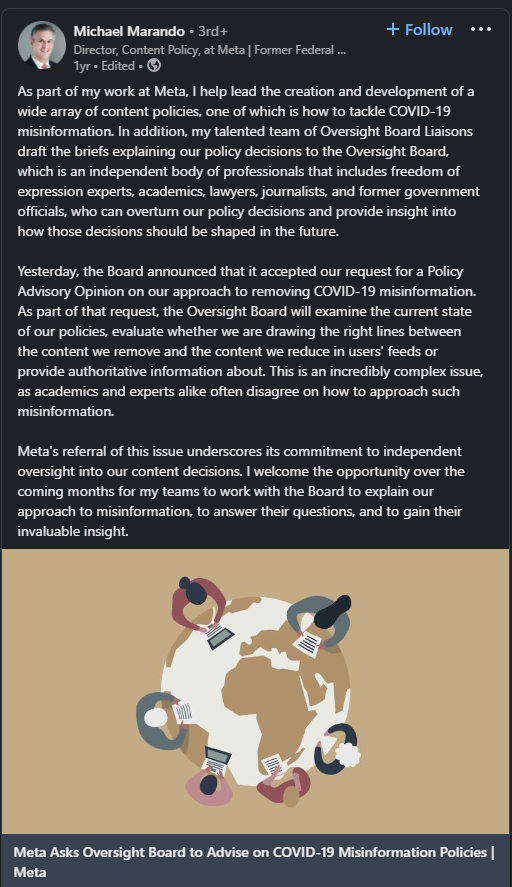

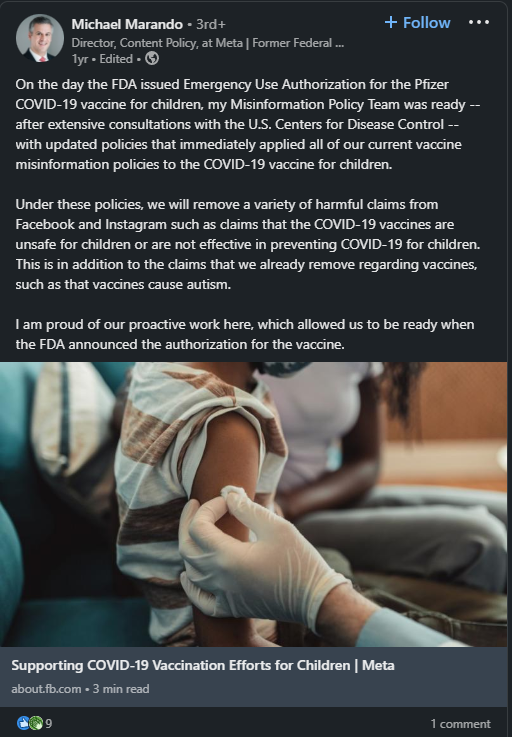

5. Michael Marando: After 8 years at DOJ, including service for the Mueller special counsel, he joined Meta in 2020, assuming the role of Director of Content Policy, working alongside Aaron Berman. Among his accomplishments, Marando proudly highlights his involvement in prosecuting @RogerJStoneJr As Director of Content Policy, he focuses on misinformation & AI. Notably, he takes credit for contributing to the development of Meta's COVID-19 misinformation policy http://linkedin.com/in/michael-marando-b544278/…

@NameRedacted247 - Name Redacted

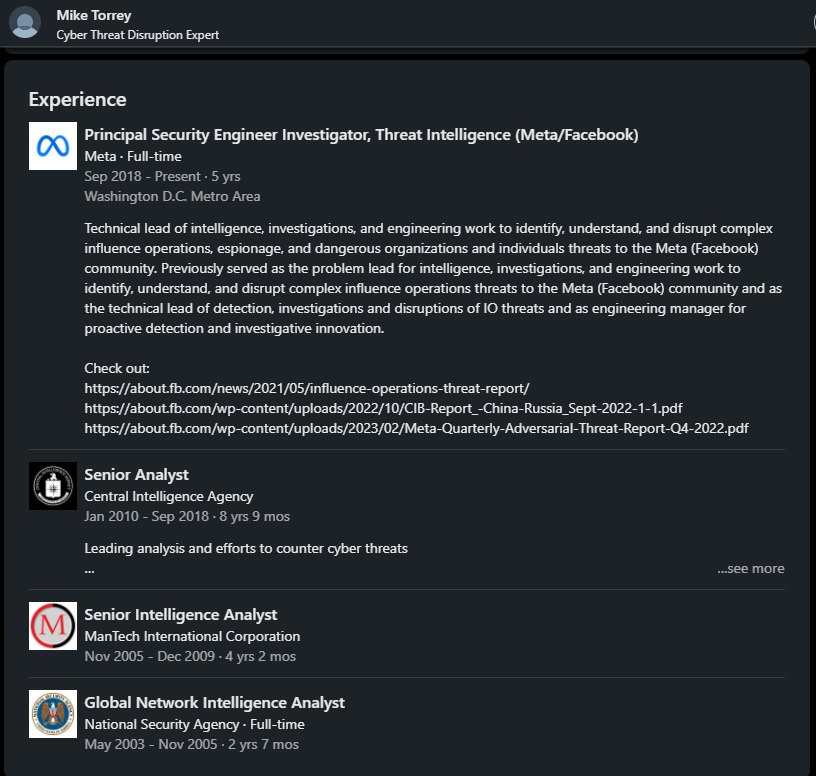

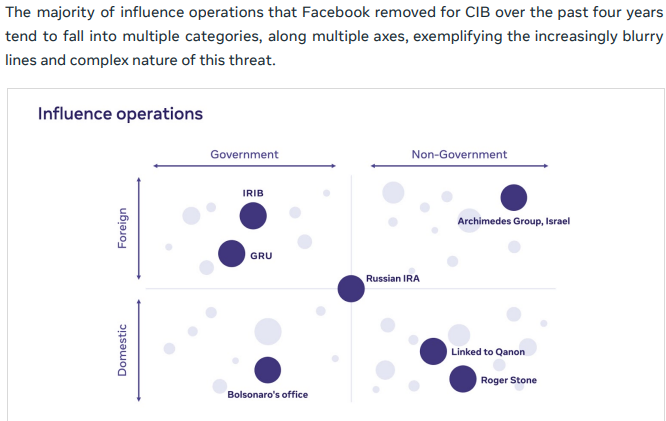

6. Mike Torrey is a Threat Intelligence professional at Meta. Before joining Meta in 2018, Mike dedicated 3 years to the NSA and 9 years to the CIA. While at Meta, he played a key role in co-authoring a report on "The State of Influence Operations 2017-2020" in response to "foreign interference by Russian actors in 2016." Notably, in this report, he labeled "QAnon, @RogerJStoneJr & @jairbolsonaro office" as "influence operations." http://linkedin.com/in/mike-torrey-01658b14a/… The report was a collective effort, and the other authors included Nathaniel Gleicher, Ben Nimmo, David Agranovich and others. Full report here - https://about.fb.com/wp-content/uploads/2021/05/IO-Threat-Report-May-20-2021.pdf

@NameRedacted247 - Name Redacted

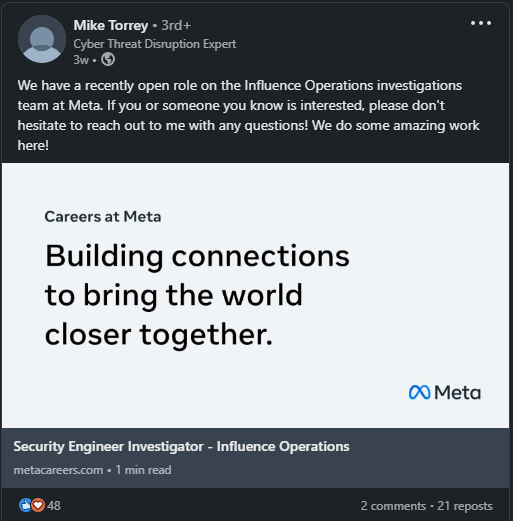

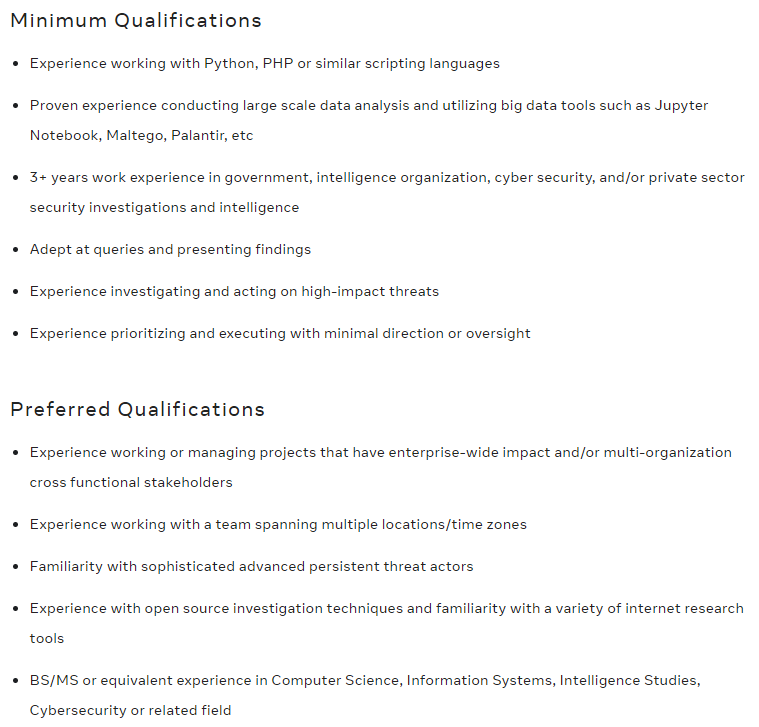

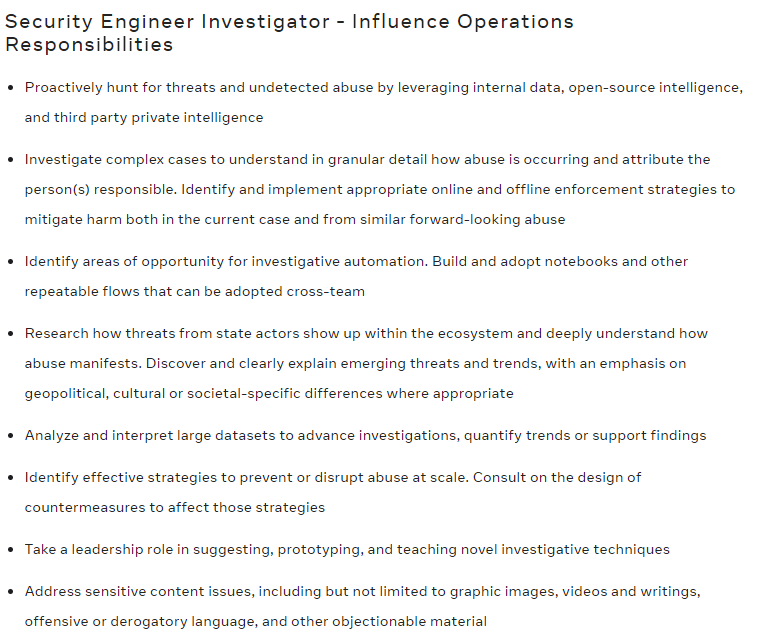

7. Mike Torrey is currently spearheading the recruitment efforts for the Influence Operations investigations team at Meta. For those interested in joining the Meta Influence Operations department, a minimum of 3 years of work experience in Government, Intelligence Organization, etc., is required. Depending on your level of experience, the starting pay ranges from $173k-$241k per year, complemented by bonuses, equity, and benefits. If you're eager to apply, you can do so by following this link: https://www.metacareers.com/jobs/588626383349158

@NameRedacted247 - Name Redacted

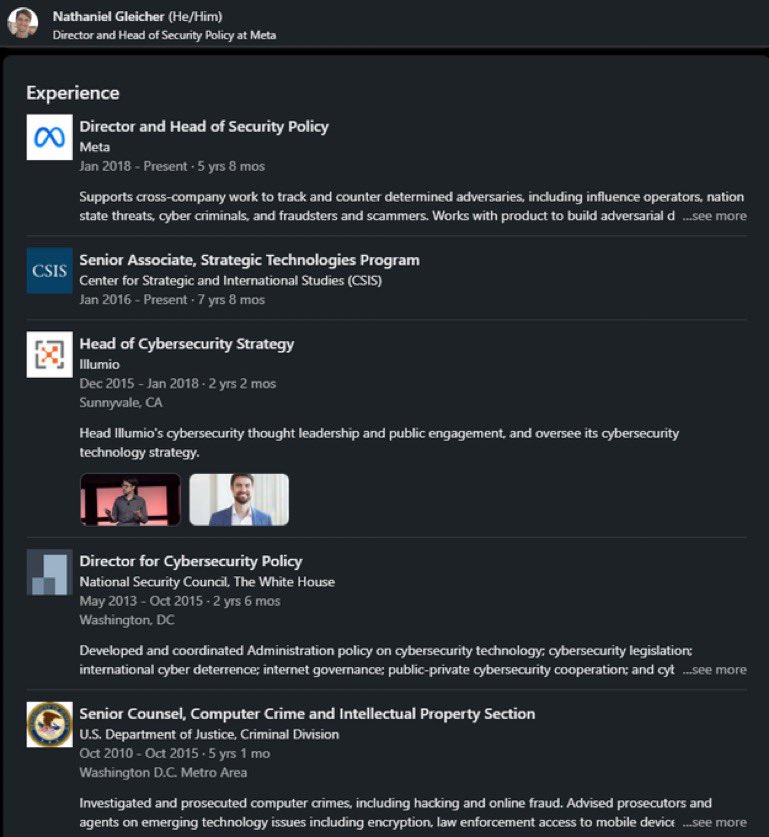

8. Ben Nimmo & Nathaniel Gleicher were co-authors of “The State of Influence Operations 2017-2020” alongside Mike Torrey. Ben Nimmo currently serves as the Global Threat Intel Lead at Meta, in addition to being the Co-Founder of Atlantic Council's DFRLab and a former Head of Investigations at Graphika. Nathaniel Gleicher joined Meta in 2018 as Director & Head of Security Policy. Prior to this role, he had 5 years of experience at DOJ and 2 years in the Obama White House National Security Council. - linkedin.com/in/nathaniel-g… Again - big thanks to @MikeBenzCyber for doing work on these people!

@NameRedacted247 - Name Redacted

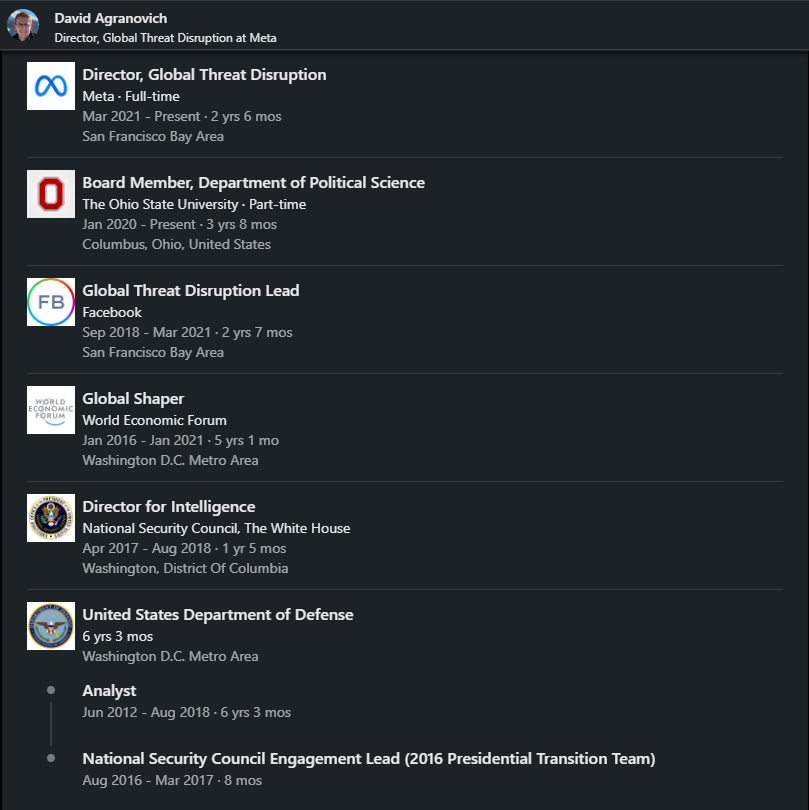

9. David Agranovich, who assumed the role of Director Global Threat Disruption at Meta in 2018, is another co-author of "The State of Influence Operations 2017-2020." Before his tenure at Meta, David had an impressive background:
* World Economic Forum, 2016-2021: Served as "Global Shaper."
* DOD, 2012-2018: Analyst
* National Security Council, 2017-2018: Director for Intelligence

linkedin.com/in/dagranovich/

@NameRedacted247 - Name Redacted

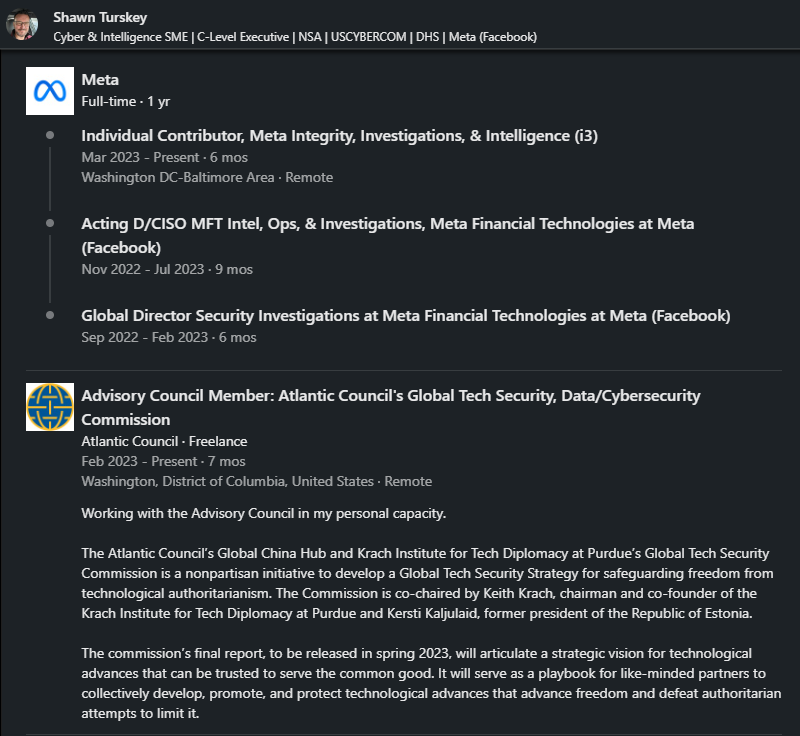

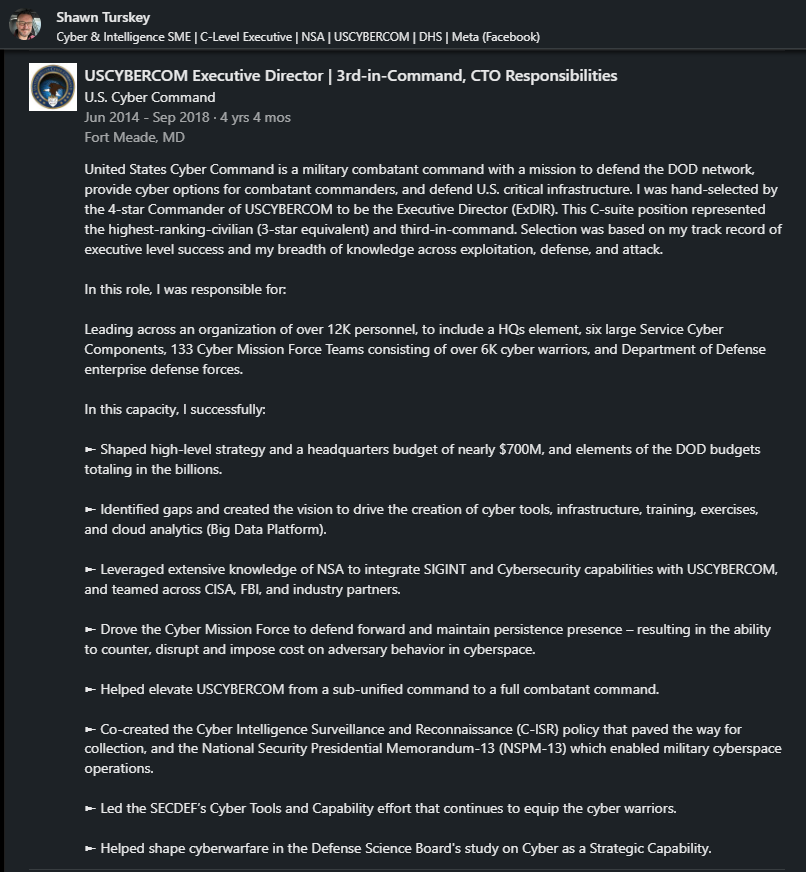

10. Shawn Turskey joined Meta in 2022 as part of the Integrity Investigations & Intelligence team, all the while holding a concurrent role as an Advisory Council Member at the Atlantic Council since February 2023. His impressive resume includes significant experiences: From 1987 to 2014, he served as a Signals Intelligence Analyst at the NSA for an impressive 27 years. In 2014, he assumed the position of Executive Director and 3rd in Command at the US Cyber Command, leading 12,000 personnel and overseeing a budget of $700 million. In 2018, Shawn returned to the NSA as the NSA Representative to the DHS for Cyber & Election Security, and later joined Meta in 2022. linkedin.com/in/shawnturske…

@NameRedacted247 - Name Redacted

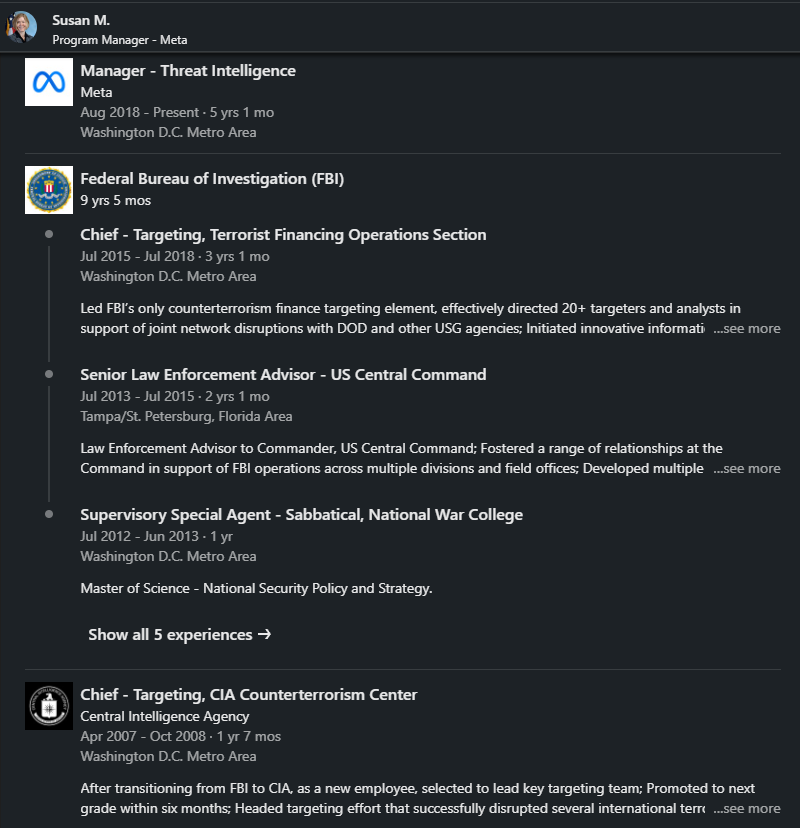

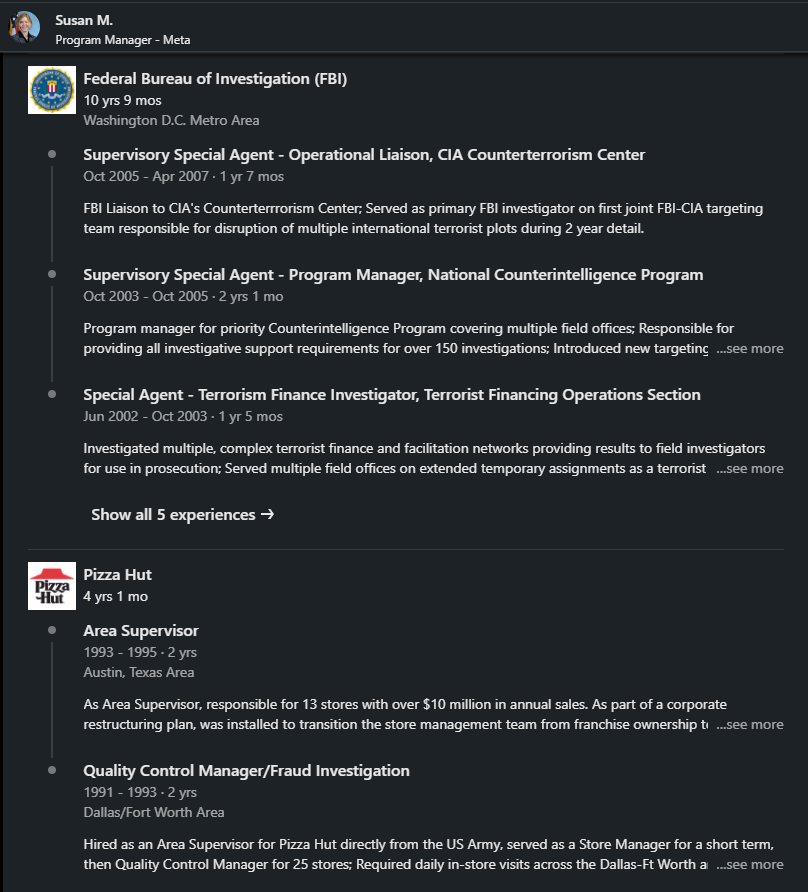

11. Susan M. joined Meta in 2018 as a Threat Intelligence Program Manager. Before joining Meta, she held significant roles in FBI & CIA:
* FBI: 1996 to 2007 (11 years). She served as a Special Agent, focusing on terrorism and counterintelligence. By the end of 2007, she assumed the role of Operational Liaison with CIA Counterterrorism Center.
* CIA: 2007-2008 (1 year & 7 months). Susan served as Chief Targeting for CIA Counterterrorism.
* FBI: 2009-2018 (9 years). Upon her return to the FBI, Susan was the Head of the FBI office in Islamabad.

Naturally, after a remarkable 23-year tenure in the Intelligence Community, Meta became her new professional home. linkedin.com/in/susan-m-171…

@NameRedacted247 - Name Redacted

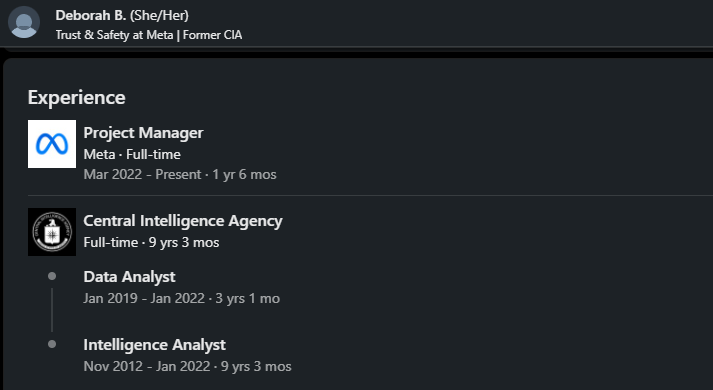

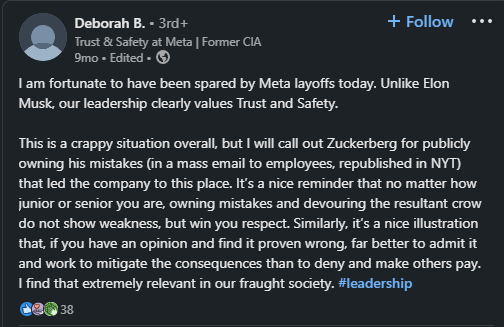

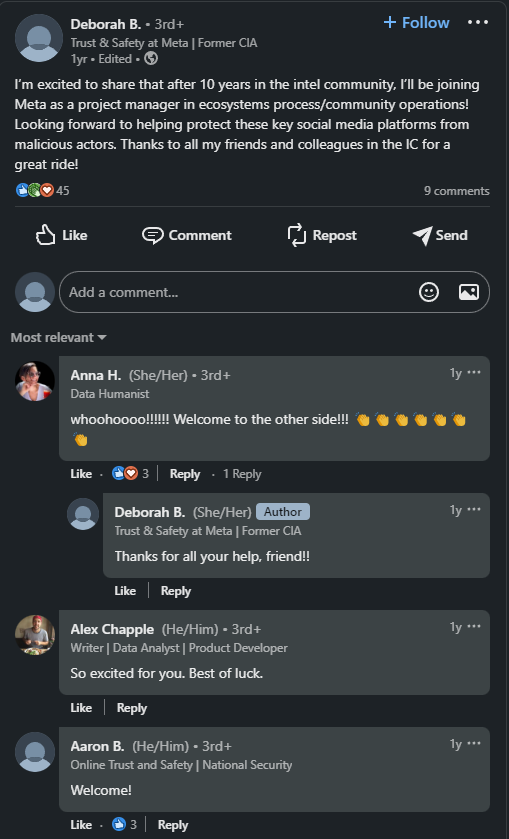

12. Deborah Berman: With an illustrious 9-year career at the CIA, she recently made her transition to Meta's Trust & Safety department in 2022. In a LinkedIn post announcing her departure from the CIA, she receives an unusual “welcome to the other side” reply from Anna Hadzic, another former CIA operative who currently works at InterWorks. Additionally, in a separate post, she takes a subtle dig at @elonmusk leadership values. linkedin.com/in/deborah-b-2…

@NameRedacted247 - Name Redacted

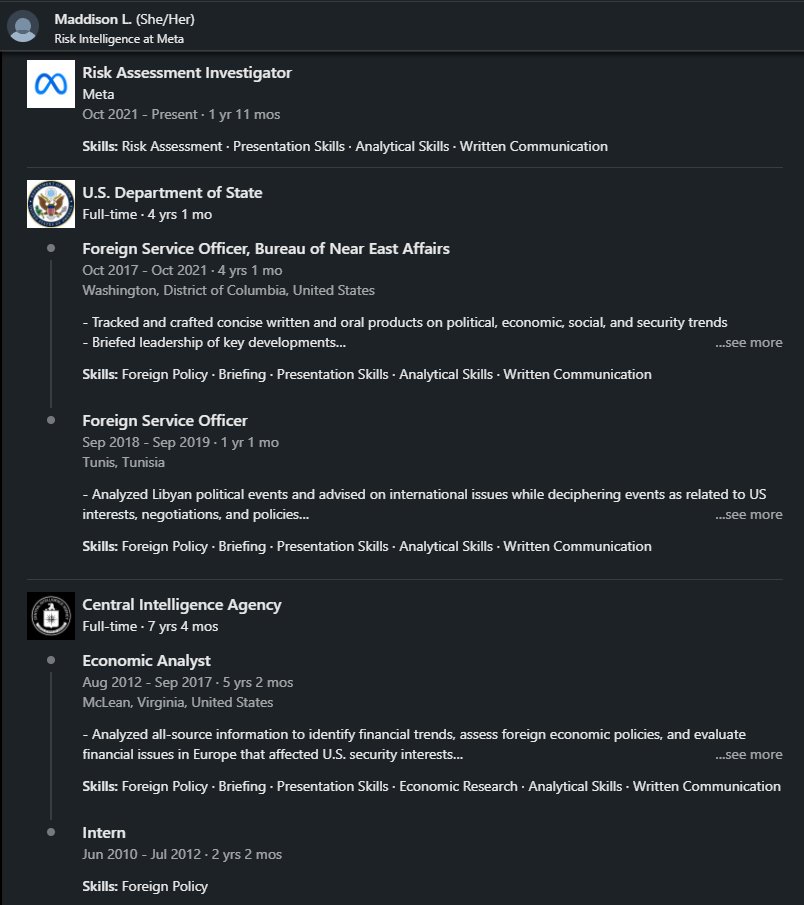

13. Maddison Lucier: Prior to joining Meta in 2021 in the Trust & Safety department as a Risk Assessment Investigator, Maddison served 7 years with CIA, followed by 4 years at the State Department as a Foreign Service Officer in Tunisia. linkedin.com/in/maddison-lu… https://t.co/ZR56Khl3hW

@NameRedacted247 - Name Redacted

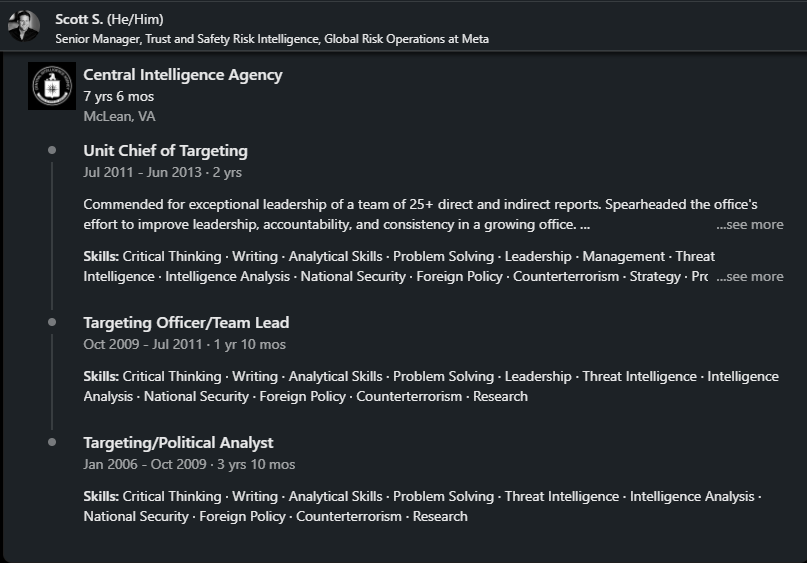

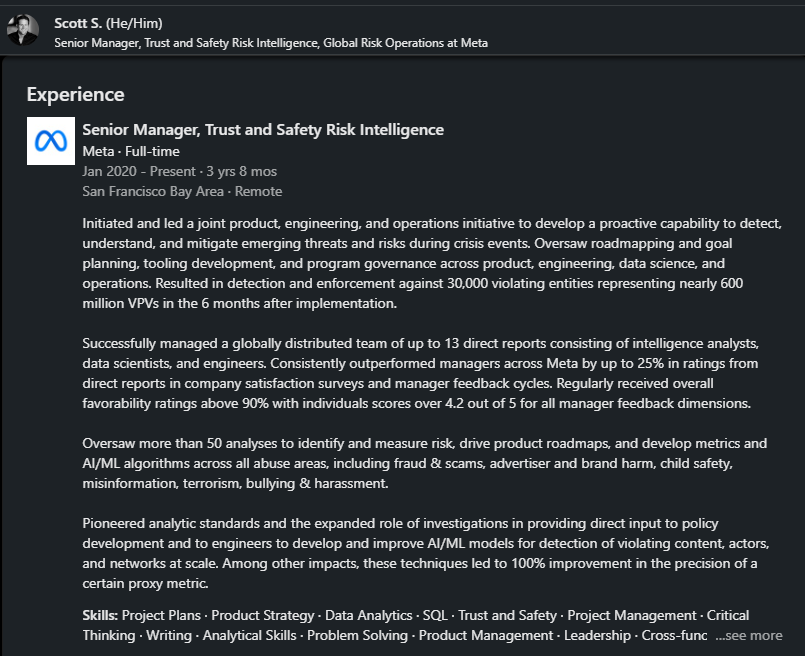

14. Scott Stern, who served as Chief of Targeting for the CIA for 7 and a half years, joined Meta in 2020 as Senior Manager of Trust & Safety. In his current role, he is responsible for developing AI/ML algorithms that address issues related to fraud & scams, advertiser & brand harm, child safety, "misinformation," terrorism, bullying, and harassment. linkedin.com/in/scottbstern/

@NameRedacted247 - Name Redacted

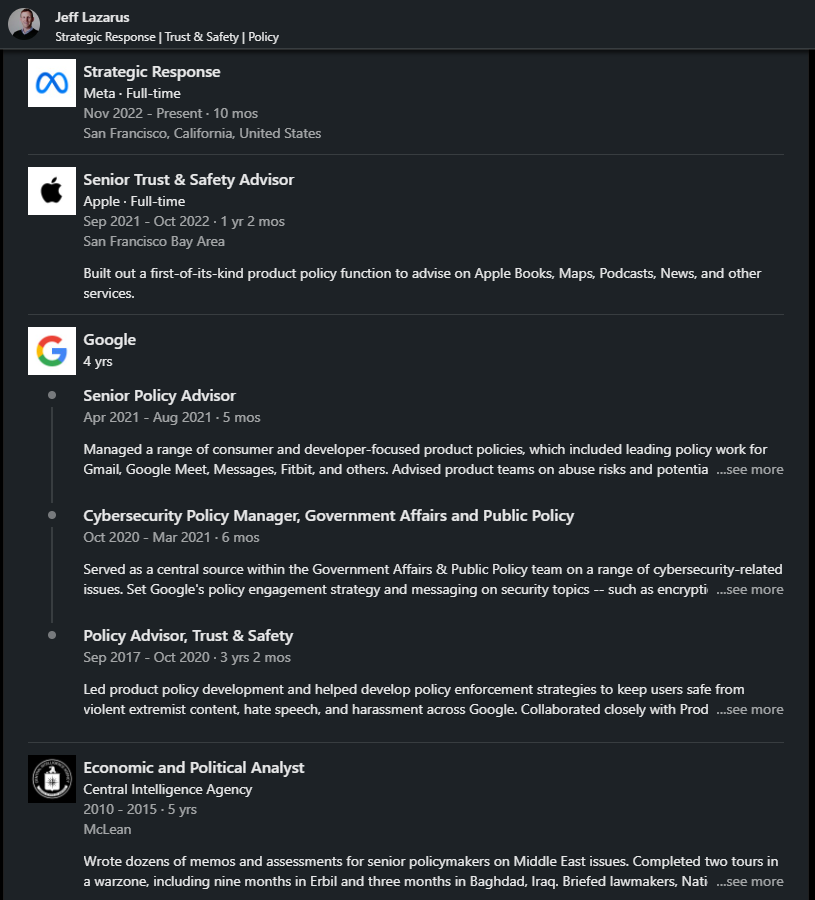

15. Jeff Lazarus joined Meta's Trust & Safety team in 2022, following an impressive career path: CIA: Served for 5 years Google: Worked for 4 years as a Senior Policy Advisor in Trust & Safety Apple: Spent 1 year as a Senior Trust & Safety Advisor linkedin.com/in/jeff-lazaru… https://t.co/43Vpcba2Vr

@NameRedacted247 - Name Redacted

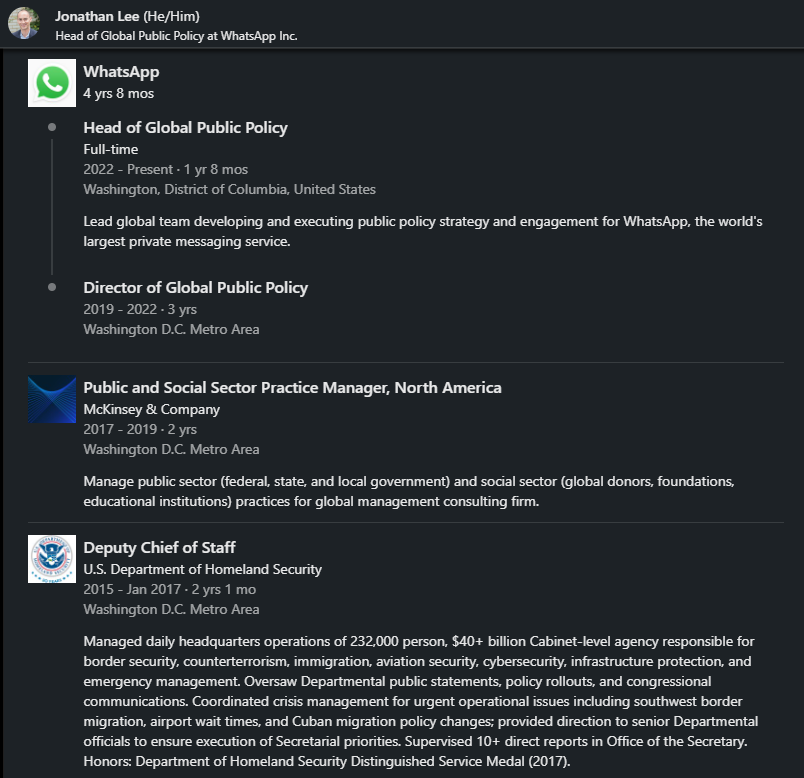

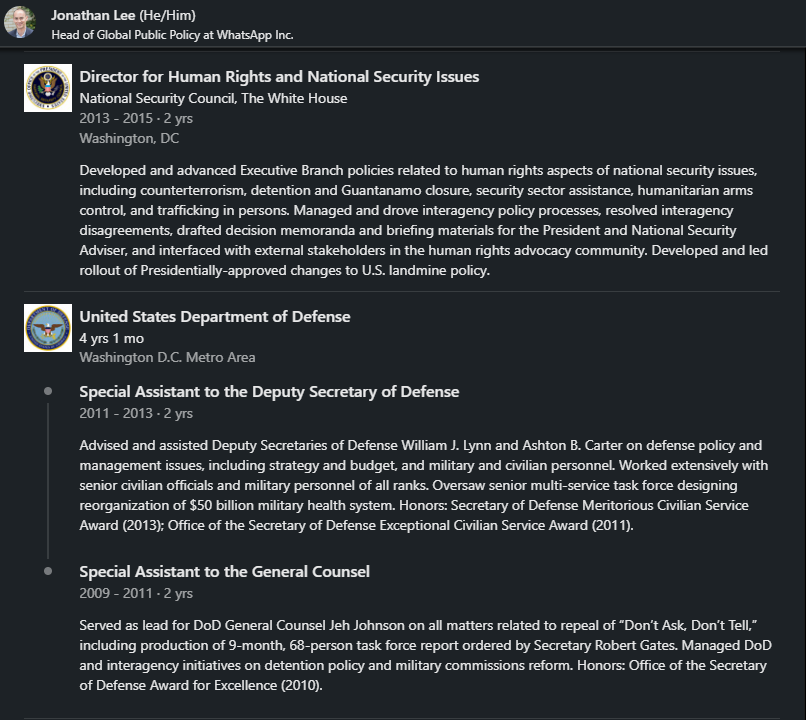

16. Jonathan Lee joined WhatsApp in 2019, initially serving as a Director of Global Public Policy. Since 2022, he has taken on the role of Head of Global Public Policy, alongside Courtney Cooper as Director. Before his tenure at WhatsApp, Jonathan held significant positions in various government agencies, including: 4 years at DOD (2009-2013) - Assistant to Deputy Secretary of Defense 2 years at National Security Council, Obama White House (2013-2015) - Director for Human Rights 2 years at DHS (2015-2017) - Deputy Chief of Staff linkedin.com/in/jonathan-l-…

@NameRedacted247 - Name Redacted

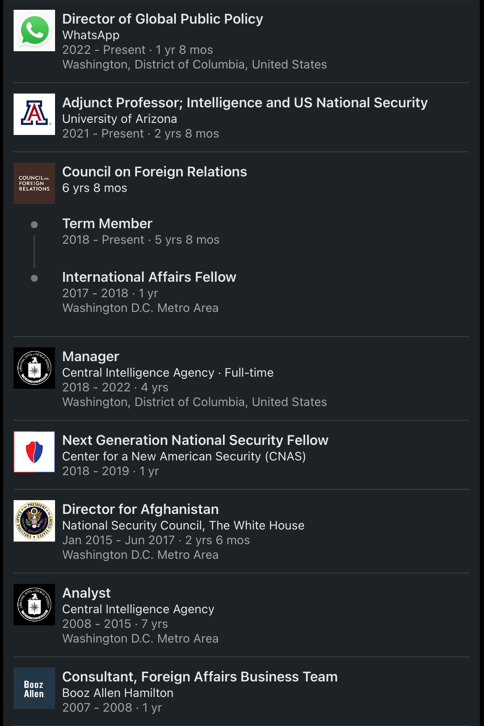

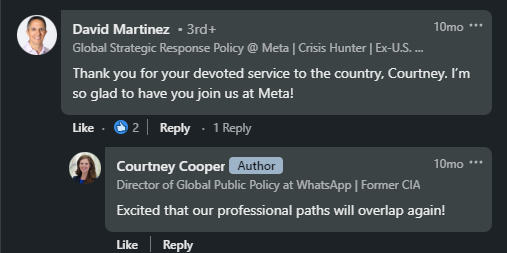

17. Courtney Cooper: Since 2022, she has held the esteemed position of Director of Global Public Policy for WhatsApp. Her impressive qualifications include: 2007-08: Booz Allen Hamilton 2008-2015: CIA (7 years) 2015-2017: National Security Council White House- Director for Afghanistan (2 years) 2018-2022: CIA (4 years) 2017-present: Member of the Council on Foreign Relations Counting a remarkable “16 years of federal service,” Courtney's career spans 18 countries and 4 continents, encompassing experiences in a warzone and serving at the White House. Despite this extensive journey, she eagerly embraces her new role as a top director at WhatsApp. Upon joining WhatsApp, she receives a warm welcome from David Martinez, to which she replies, “Excited that our professional paths will overlap again!” linkedin.com/in/courtney-co…

@NameRedacted247 - Name Redacted

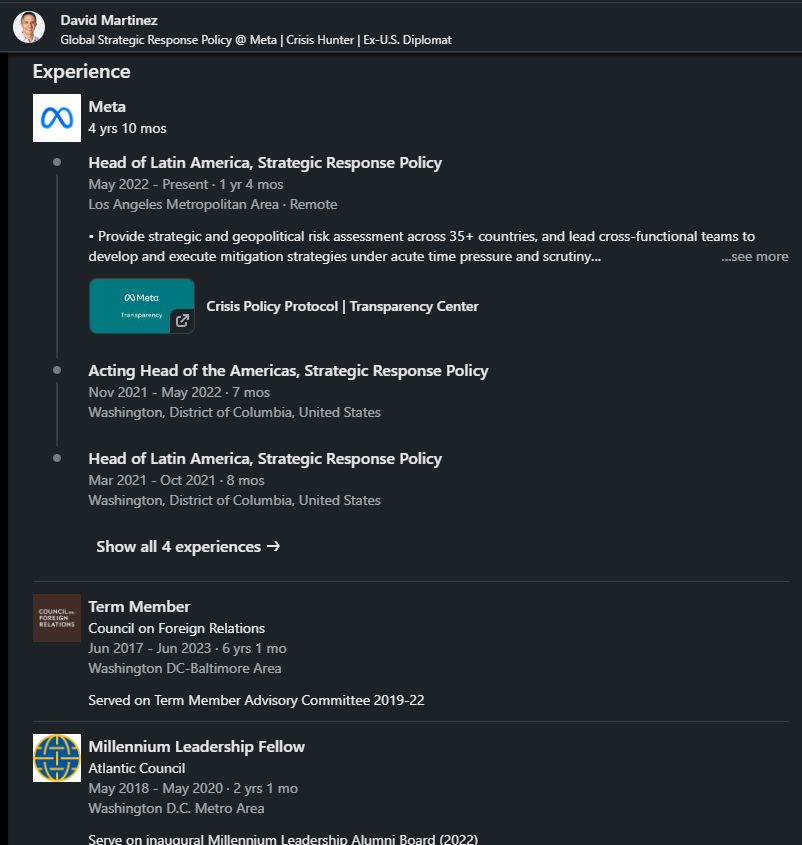

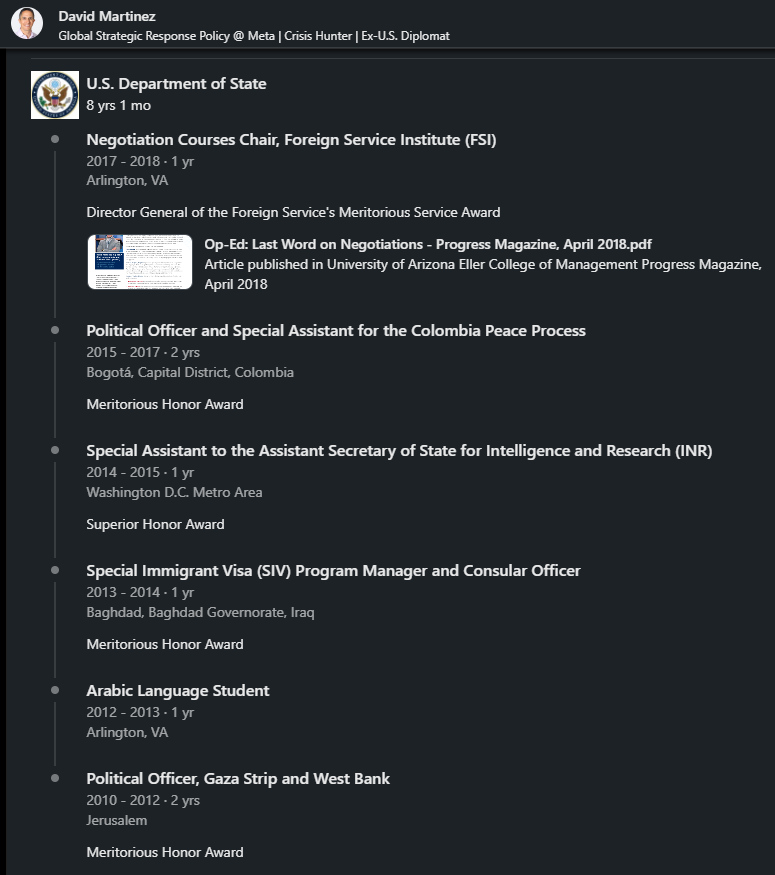

18. David Martinez: A valuable addition to Meta since 2018, he currently serves as the Head of Latin America Strategic Response Policy. David's qualifications are nothing short of impressive: 2008: Booz Allen Hamilton (1 year) 2010-2018: State Department, including 2 years as a Political Officer in Jerusalem, 1 year in Baghdad, and 2 years in Bogota 2018-2020: Atlantic Council fellow 2017-2023: Council on Foreign Relations linkedin.com/in/dmart/

@NameRedacted247 - Name Redacted

19. Joseph Schadler: After a remarkable 24-year career at the FBI, he moved to WhatsApp in 2022, assuming the role of Trust & Safety Manager. linkedin.com/in/josephschad… https://t.co/3s77mH9pZX

@NameRedacted247 - Name Redacted

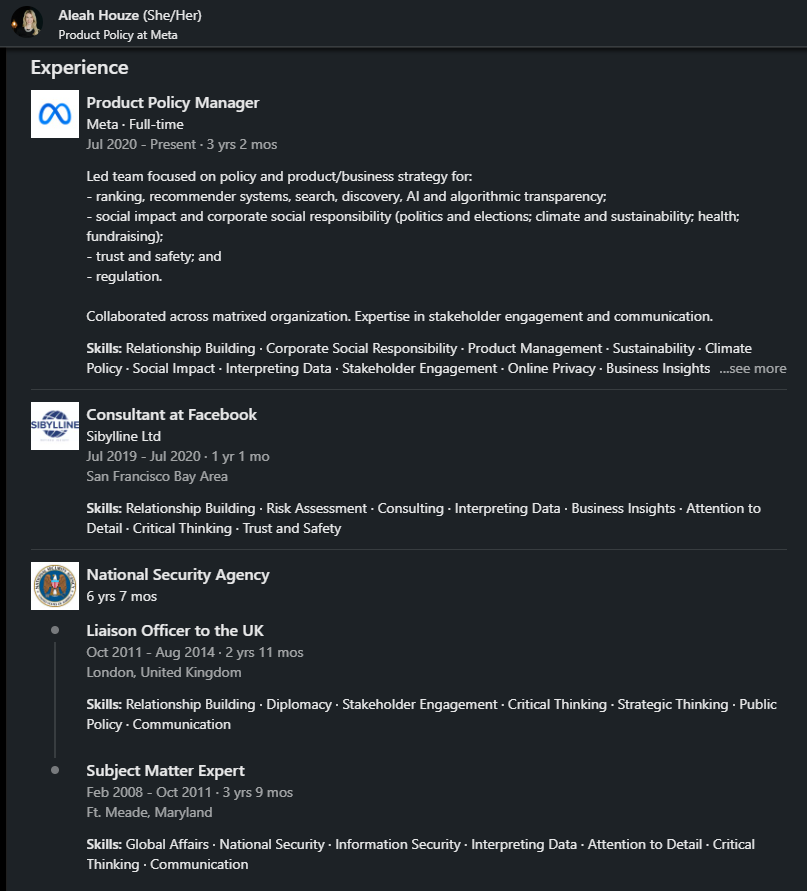

20. Aleah Houze joined Meta in 2020 as a Product Policy Manager in Trust & Safety, collaborating with Aaron Berman on matters related to "politics & elections." Prior to her role at Meta, she dedicated 7 years of service at the NSA as a Liaison Officer to the UK and served as a "Subject Matter Expert." linkedin.com/in/aleah-houze/

@NameRedacted247 - Name Redacted

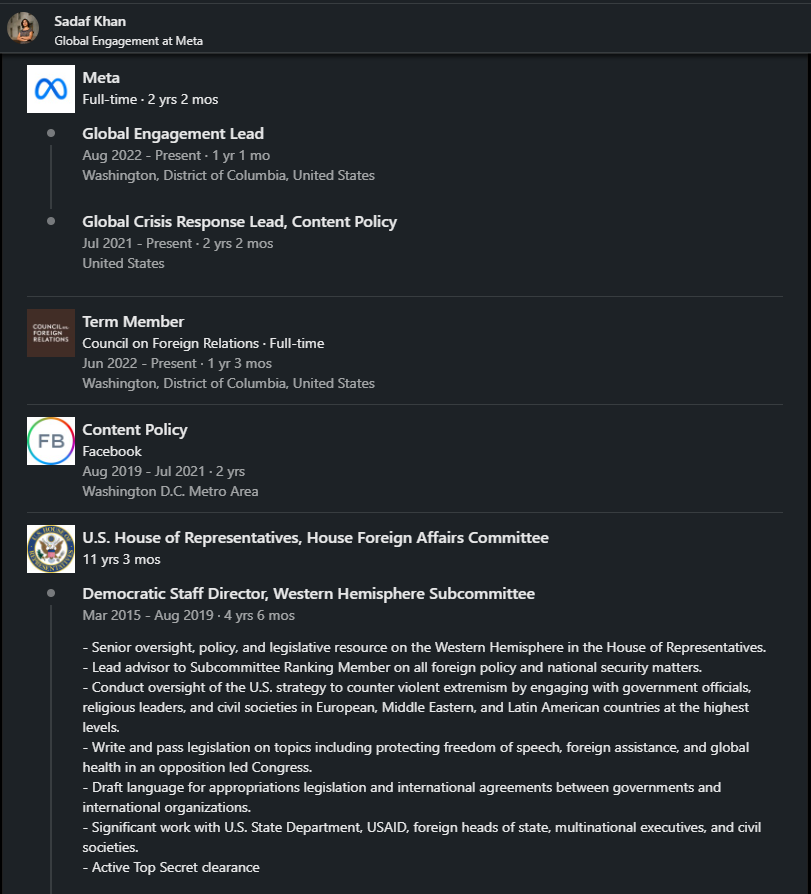

21. Sadaf Khan joined Meta in 2019, where she plays a vital role in Content Policy in Trust & Safety. Concurrently, she is a "team member" at the Council on Foreign Relations. Before her tenure at Meta, Sadaf served as a Democrat Staff Director for 11 years. During this time, she held a significant position as the lead advisor to the Subcommittee Ranking Member, providing guidance on various foreign policy and national security matters. Notably, Sadaf asserts that she possesses an active Top Secret clearance. linkedin.com/in/sjkhan/

@NameRedacted247 - Name Redacted

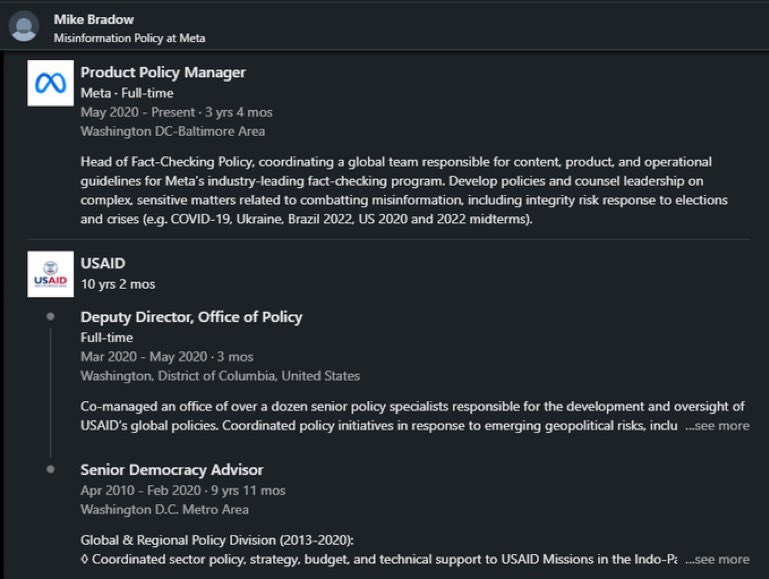

22. Mike Bradow joined Meta in 2020 as Head of Fact-Checking Policy. He works alongside Aaron Berman to tackle misinformation. What are Mike’s qualifications for this position? linkedin.com/in/mikebradow/ Mike spent 10 years at @USAID as Deputy Director in the Office of Policy. Recently @RepMattGaetz has called for USAID to be abolished 👇🏼

@NameRedacted247 - Name Redacted

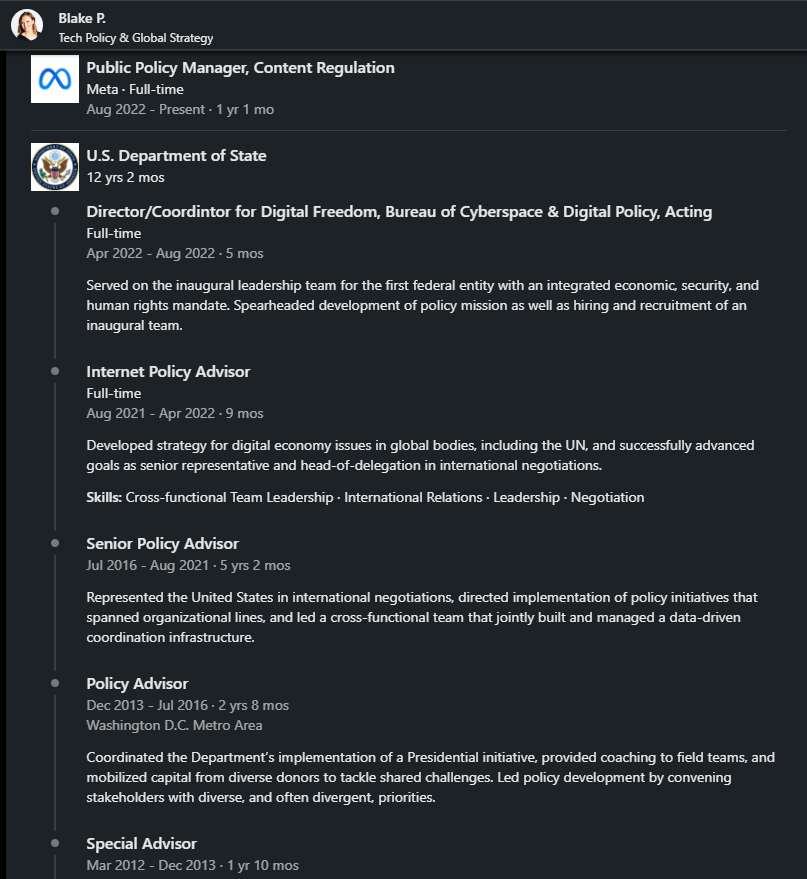

23. Blake P. joined Meta in 2022 as Public Policy Manager in Content Regulation. Her qualifications are working 12 years at the State Department, where her last role was as Internet Policy Advisor and Director for Digital Freedom linkedin.com/in/blake-p-216… https://t.co/gB7ULqkXR7

@NameRedacted247 - Name Redacted

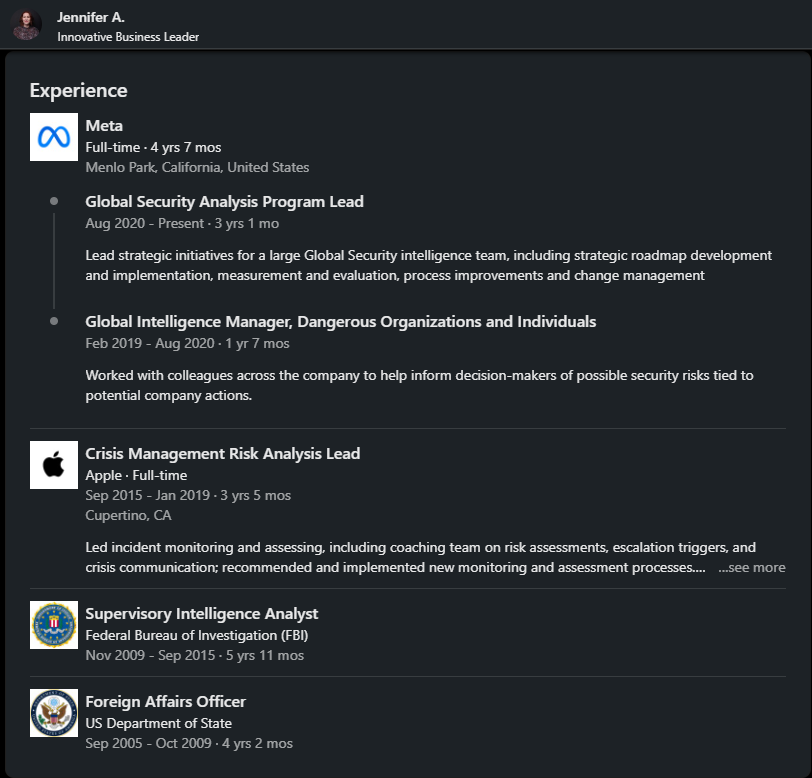

24. Jennifer A. joined Meta in 2019 and currently holds the position of Global Security Analysis Program Lead. Before joining Meta, she gained valuable experiences in the following government roles: 6 years at the FBI 4 years as a Foreign Affairs Officer at the State Department linkedin.com/in/jennifer-a-…

@NameRedacted247 - Name Redacted

25. Sam Aronson joined Meta in 2022 as a Global Policy Manager for Content Policy. His qualifications for this role include: DOD- 1 year (2014-2015)- Political Military Affairs Analyst State Department- 7 years (2015-2022)- After serving as a Special Agent for 2 years in New York, he was transferred to Niger serving in various roles. After 5 years in Niger, he spent less than a year in Afghanistan as a Political & Consular Officer. linkedin.com/in/sam-aronson…

@NameRedacted247 - Name Redacted

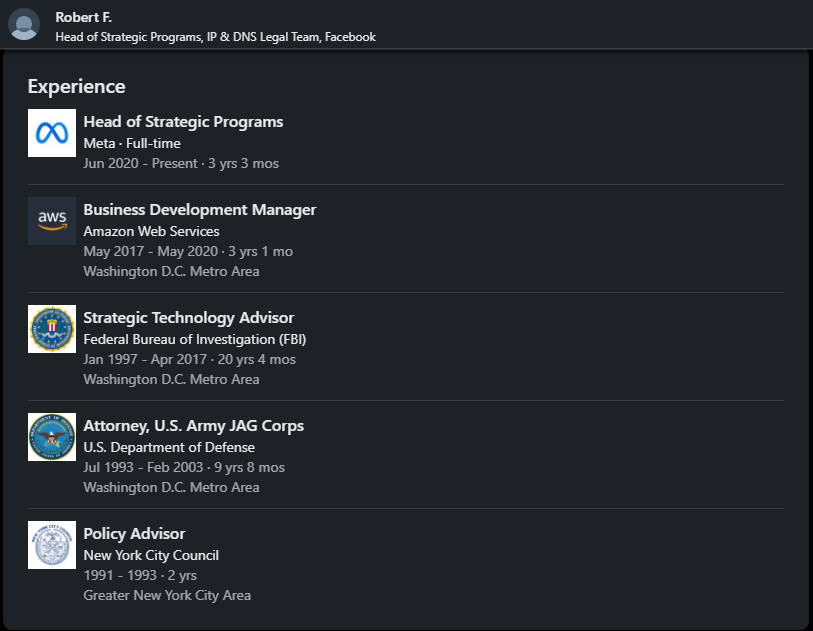

26. Robert Flaim joined Meta in 2020 as the Head of Strategic Programs. Before his tenure at Meta, he had a notable career path: 10 years as a JAG Corps Attorney for DOD Subsequently, a 20-year career at the FBI linkedin.com/in/bobbyflaim/ https://t.co/lRTIyxlMlr

@NameRedacted247 - Name Redacted

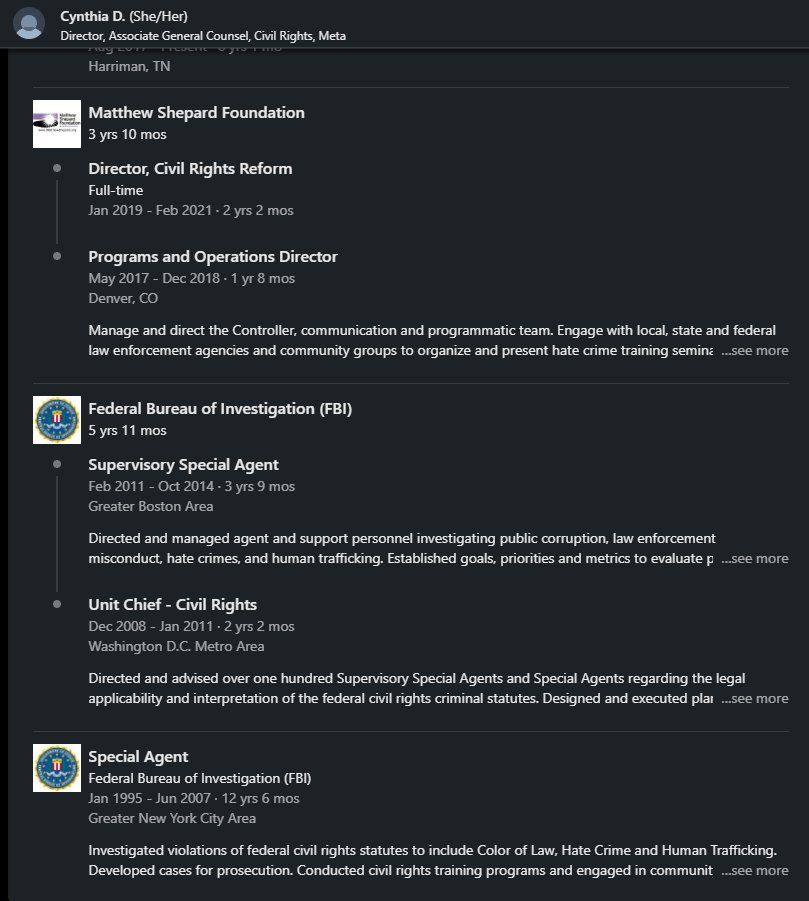

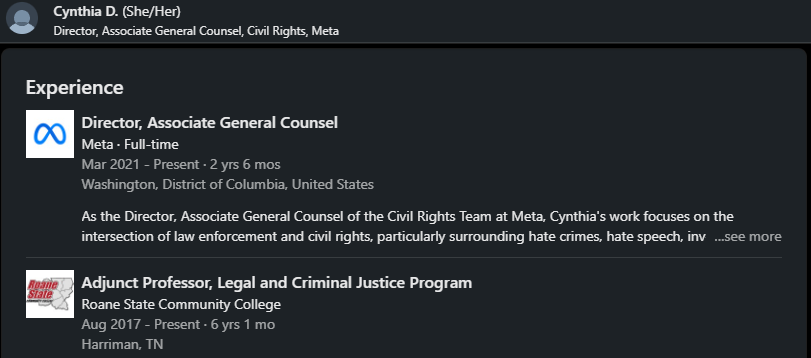

27. Cynthia Deitle joined Meta in 2021 as the Director Associate General Counsel. Prior to her role at Meta, she had an illustrious 19-year career at the FBI. As the Director, Associate General Counsel of the Civil Rights Team at Meta, Cynthia's work centers on the intersection of law enforcement and civil rights, with a particular focus on hate crimes, hate speech, investigations, and surveillance. linkedin.com/in/cynthia-d-3…

@NameRedacted247 - Name Redacted

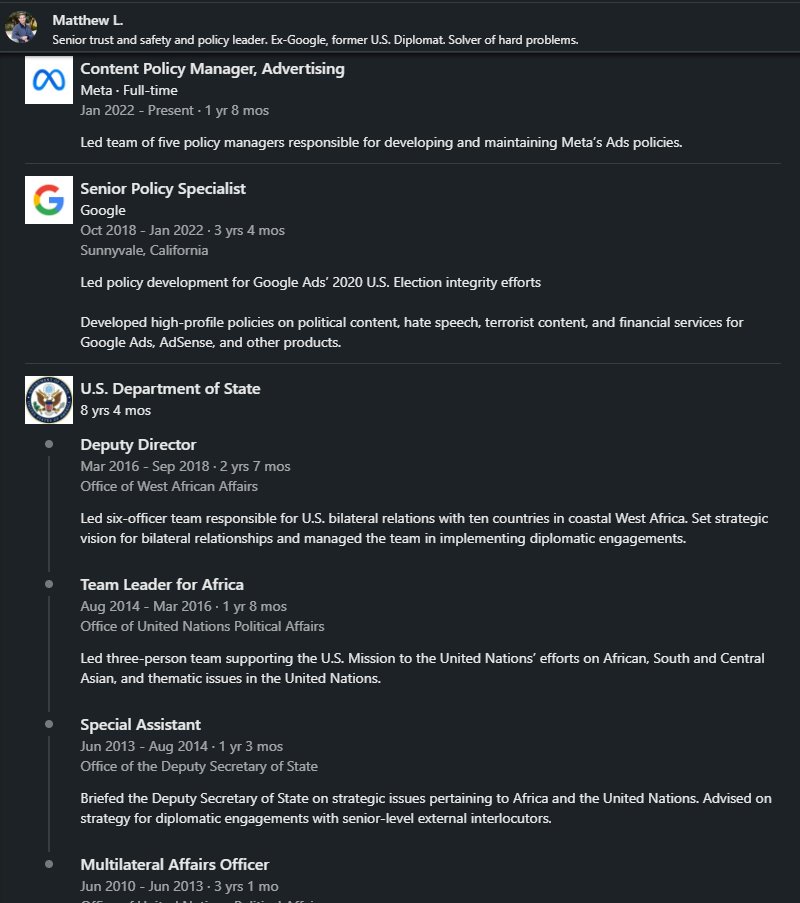

28. Matthew L. joined Meta in 2022. He is a Senior Trust & Safety Manager in charge of Content Policy. Prior to joining Meta, Matthew joined Google in 2018 as Senior Policy Specialist in charge of Google Ads’ “2020 US Election Integrity efforts” Prior to joining Google, Matthew worked at the State Department for 8 years focused in Africa. He also describes himself as a “Solver of hard problems” linkedin.com/in/matthew-l-a…

@NameRedacted247 - Name Redacted

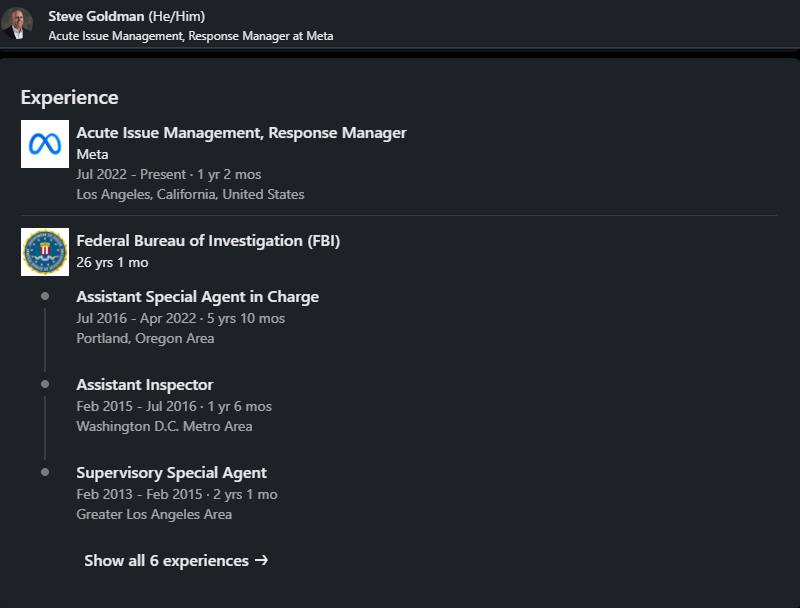

29. Steve Goldman joined Meta in 2022, assuming the role of Acute Issue Management, Response Manager. Before joining Meta, Steve had an extensive 26-year career at the FBI, where he served as a Special Agent in Charge in Portland. linkedin.com/in/steve-goldm… https://t.co/CCjRUMe3QD

@NameRedacted247 - Name Redacted

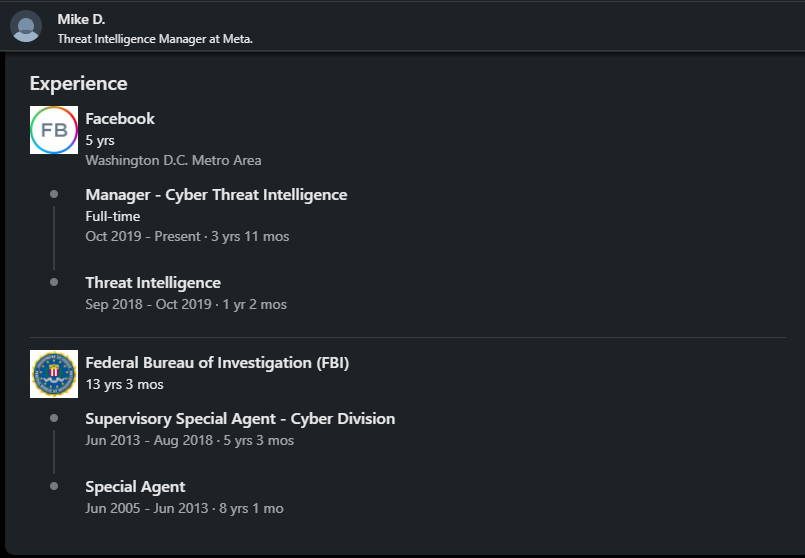

30. Mike D. joined Meta in 2018, assuming the role of Manager of Cyber Threat Intelligence. Prior to joining Meta, Mike served as a Supervisory Special Agent with the FBI for 13 years. linkedin.com/in/mike-d-48b1… https://t.co/5e2vhGWHje

@NameRedacted247 - Name Redacted

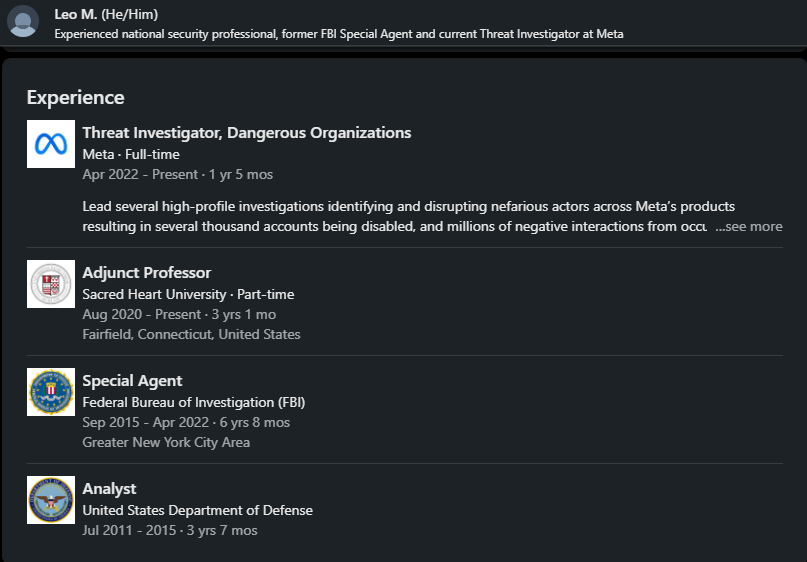

31. Leo M. joined Meta in 2022 as a Threat Investigator, specializing in Dangerous Organizations. Prior to his position at Meta, he served as a Special Agent at the FBI for 7 years and worked as an Analyst at the DOD for 4 years. linkedin.com/in/leo-m-35956… https://t.co/dqFK4qbpPN

@NameRedacted247 - Name Redacted

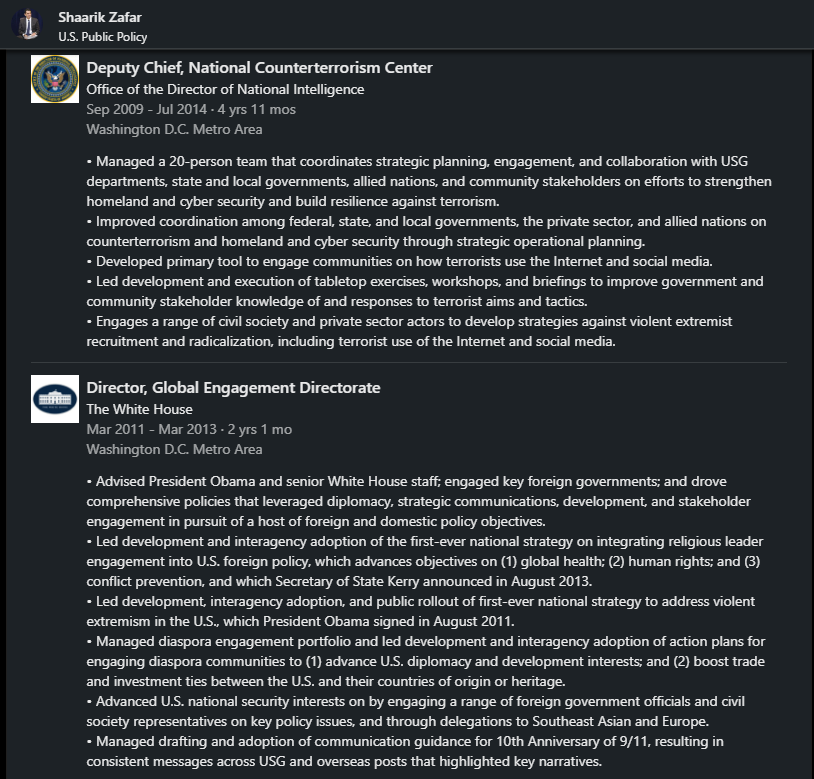

32. Shaarik Zafar joined Meta in 2018 in the US Public Policy department. He provides advice and counsel to cross-functional partners, driving development on issues including youth safety, hate speech, terrorism, misinformation, and political advertising. Prior to joining Meta, his extensive career includes: DOJ: 2 years (2004-2006) as Special Counsel post 9/11 discrimination DHS: 4 years (2006-2009) as a Senior Policy Advisor Obama White House: 2 years (2011-2013) as Director of Global Engagement Directorate & advisor to President Obama ODNI: 5 years (2009-2014) as Deputy Chief National Counterterrorism Center State Department: 3 years (2014-2017) as Special Representative to Muslim Communities & advisor to John Kerry ODNI: 2 and a half years (2016-2018) as Program Mission Manager linkedin.com/in/shaarikzafa…

@NameRedacted247 - Name Redacted

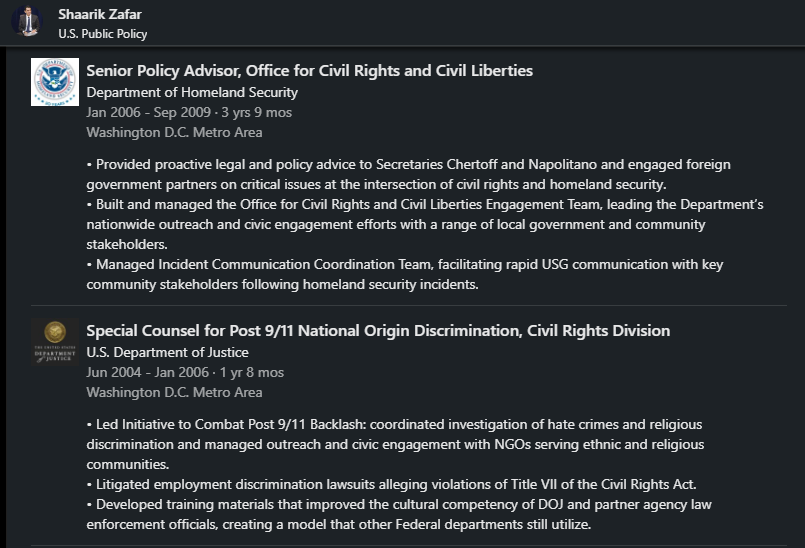

33. Amanda Lewis joined Meta in 2020 as the Global Systems Manager. Her prior experience includes 4 years at DOD as an Operations Analyst and 10 years as a Senior Analyst at DHS - linkedin.com/in/amanda-lewi… https://t.co/MnCB0biqSE

@NameRedacted247 - Name Redacted

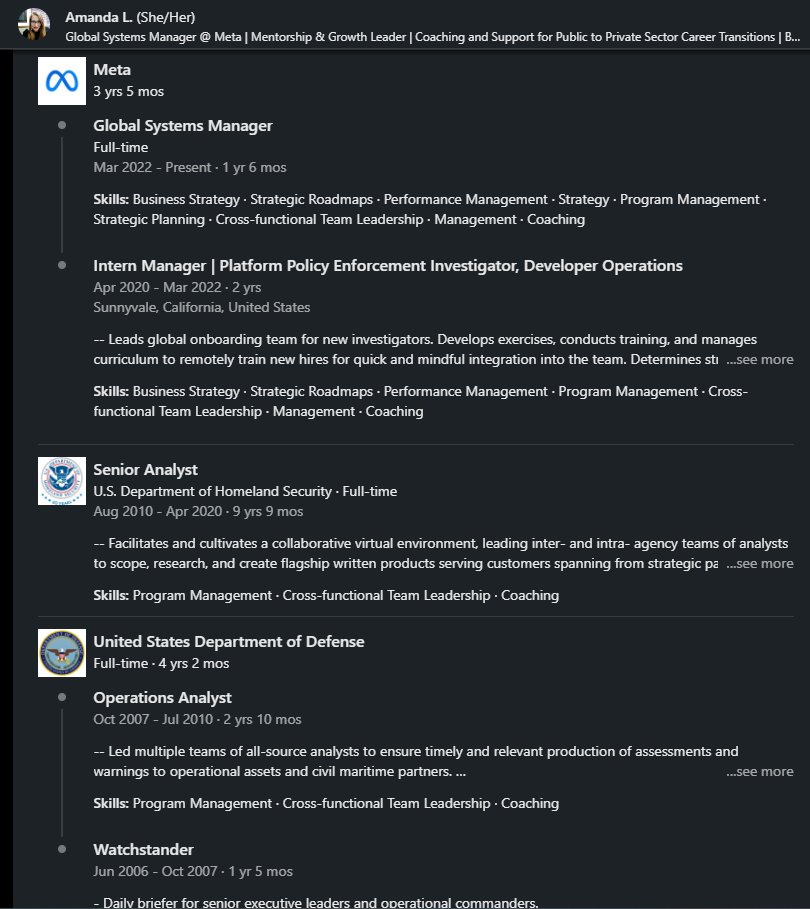

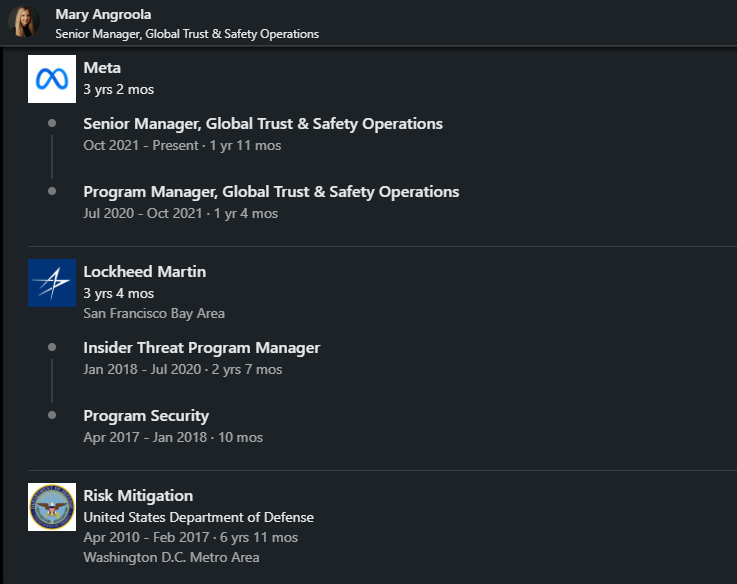

34. Mary Angroola joined Meta in 2020. She is a Senior Manager in Trust & Safety. Prior to Meta: DOD- 7 years (2010-2017)- Risk Mitigation Lockheed Martin – 3 years (2017-2020) linkedin.com/in/mary-angroo… https://t.co/80kFyBEqLy

@NameRedacted247 - Name Redacted

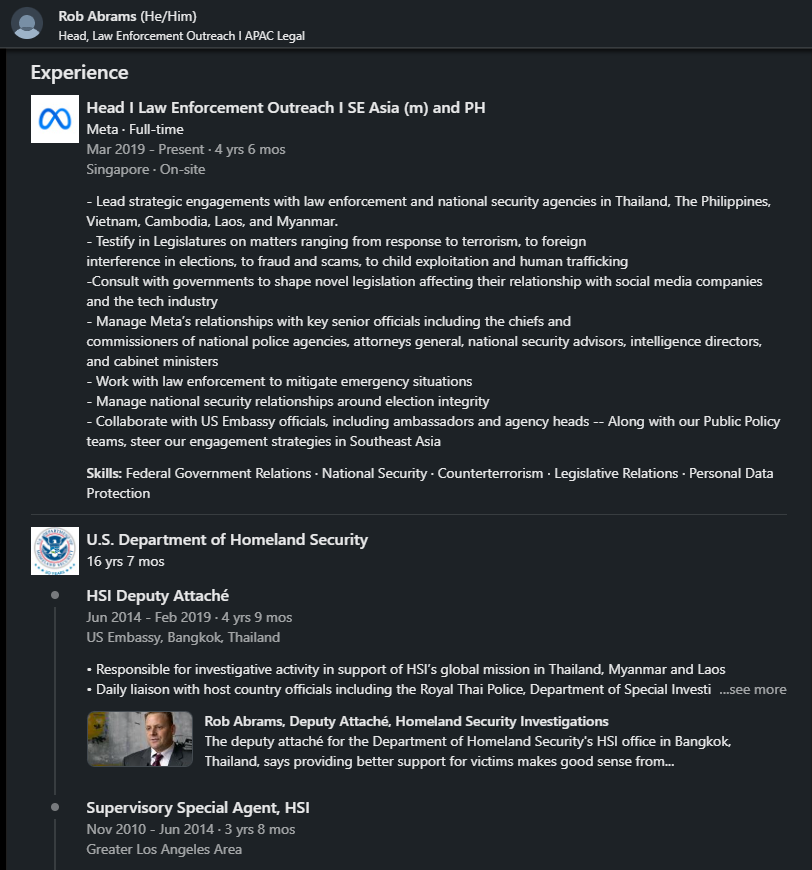

35. Rob Abrams joined Meta in 2019 after 16 years at DHS. He is the Head Law Enforcement Outreach for SE Asia & works in Singapore. Notable job duties at Meta include: “Consults with governments to shape novel legislation affecting their relationships with social media companies & the tech industry” “Manage national security relationships around election integrity” “Testify in Legislatures on matters ranging from response to terrorism, to foreign interference in elections, to fraud and scams, to child exploitation and human trafficking” My personal view is that while Rob's efforts to expose child exploitation and human trafficking through Meta's platform are commendable and deserving of credit, some concerns arise regarding his consultations with foreign governments on election-related matters and his involvement in crafting legislation that may favor social media firms. These aspects raise questions about potential biases and the impact on fair and transparent democratic processes. linkedin.com/in/abramsrj/

@NameRedacted247 - Name Redacted

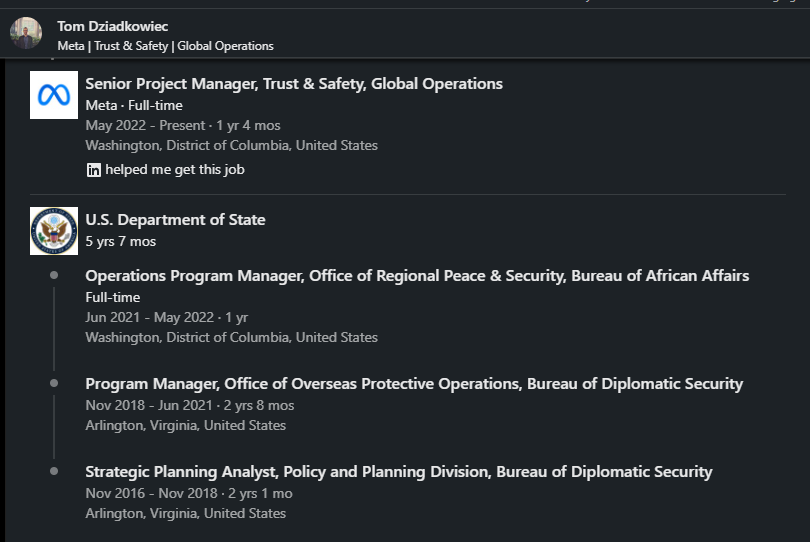

36. Tom Dziadkowiec joined Meta in 2022 as Senior Manager Trust & Safety. Prior to joining Meta, he worked at the State Department for 5 years as Program Manager linkedin.com/in/tom-dziadko… https://t.co/ns8C2CiIm1

@NameRedacted247 - Name Redacted

37. Abbas Ravjan: Prior to joining Meta in 2019 as Privacy & Public Policy Senior Manager, Abbas was a Member of the Biden-Harris Transition Team. He developed & drafted policy memos & draft Executive Orders that were signed by President Biden on Day One. Prior to serving on the Biden-Harris transition team, he spent 6 years at the State Department. linkedin.com/in/abbasravjan…

@NameRedacted247 - Name Redacted

38. Kevin LeClair joined Meta in 2020 as “Head of Operations.” Prior to Meta: 4 years State Department- Special Agent 3 years Department of Treasury- Special Agent 1 year US Attorney’s Office- Special Agent In his role at Meta, he describes himself as a Problem Solver, Business enabler & Community Builder. linkedin.com/in/kevinjlecla…

@NameRedacted247 - Name Redacted

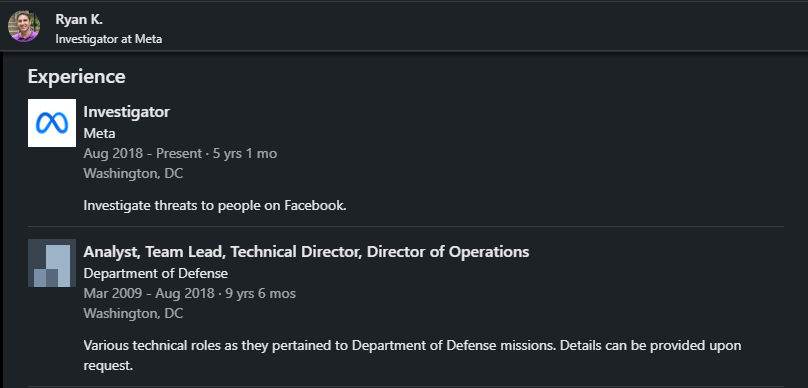

39. Ryan Kagin- Joined Meta in 2018. He’s an “Investigator.” Prior to Meta, Ryan was an Analyst at the DOD for over 9 years. linkedin.com/in/kagin/ https://t.co/VVT6VDRfa3

@NameRedacted247 - Name Redacted

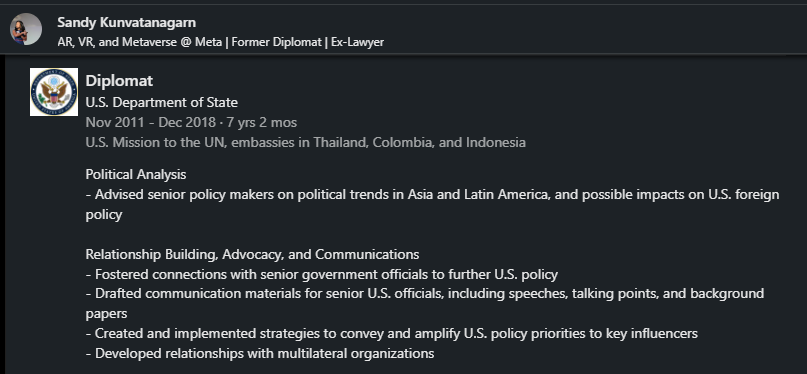

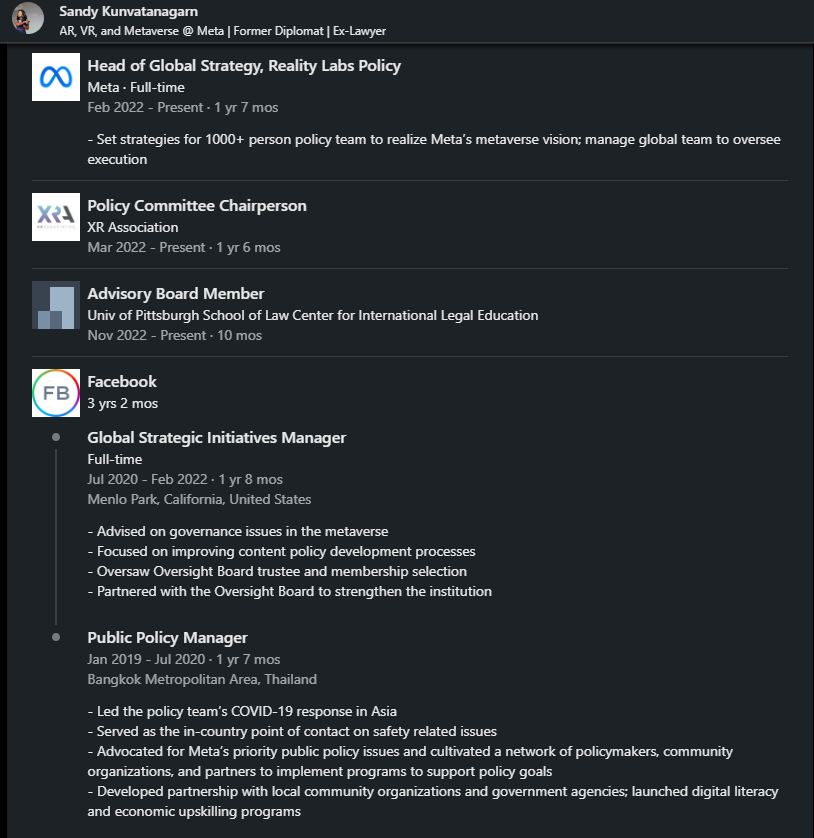

40. Sandy Kunvatanagarn- After serving the State Department as a Diplomat for 7 years, she joined Meta in 2019 as a Public Policy Manager. She claims to have led the policy team’s COVID-19 response in Asia linkedin.com/in/sandy-kunva… https://t.co/AFgRByk1mA

@NameRedacted247 - Name Redacted

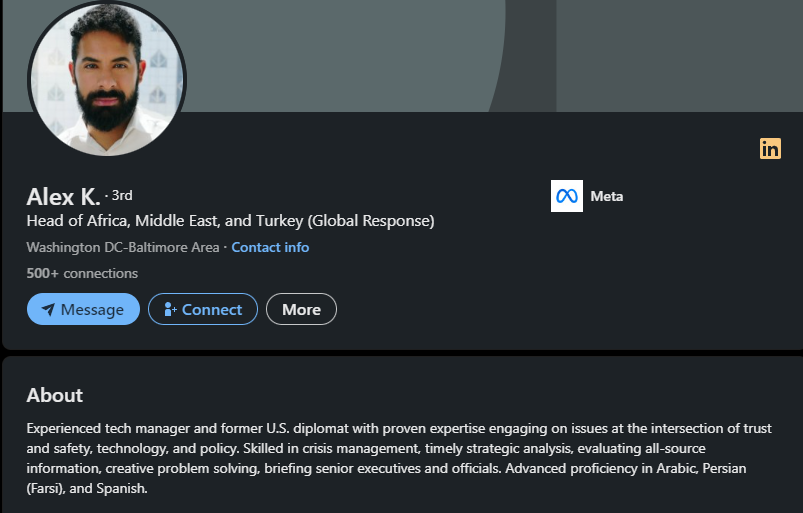

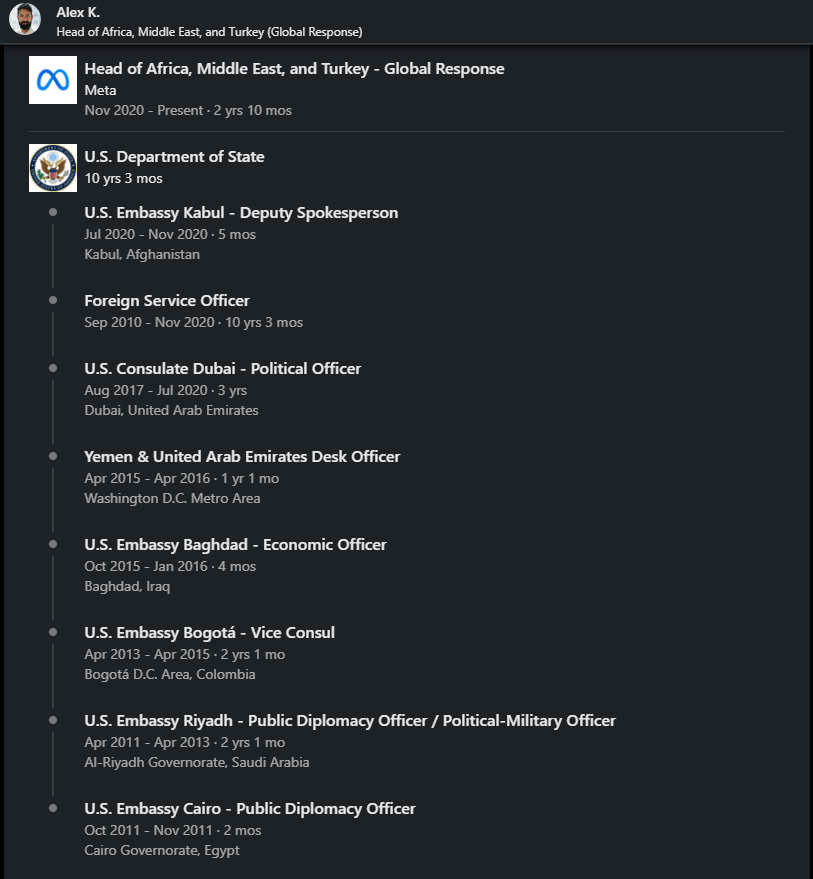

41. Alex Kokon- Joined Meta in 2020 as Head of Trust & Safety- Africa, Middle East & Turkey- Global Response. He describes himself as an “Experienced tech manager.” Prior to taking his talents to Meta, Alex worked at the State Department for 10 years. He worked at numerous US Embassies including Cairo, Riyadh, Bogota, Baghdad, Yemen, UAE, Dubai & Kabul. linkedin.com/in/alexkokon/

@NameRedacted247 - Name Redacted

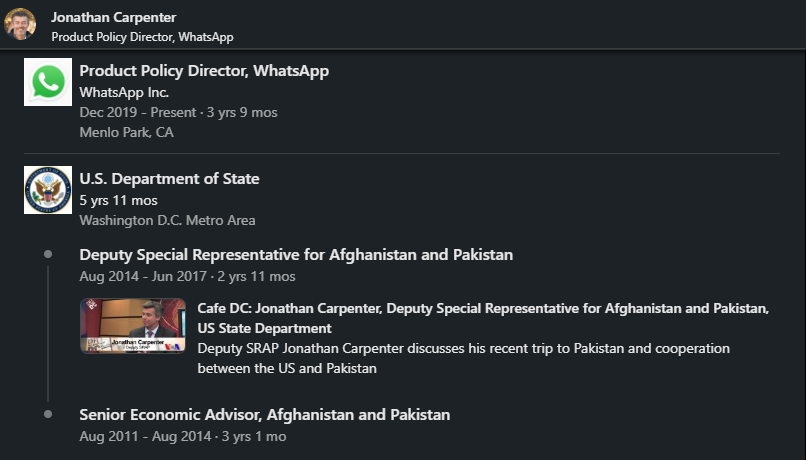

42. Jonathan Carpenter joined WhatsApp in 2019 as a Product Policy Director. Prior to that he spent 6 years at the State Department stationed in Afghanistan & Pakistan as a Senior Economic Advisor & Deputy Special Representative. linkedin.com/in/jjcarp/ https://t.co/s7B4v0BrLn

@NameRedacted247 - Name Redacted

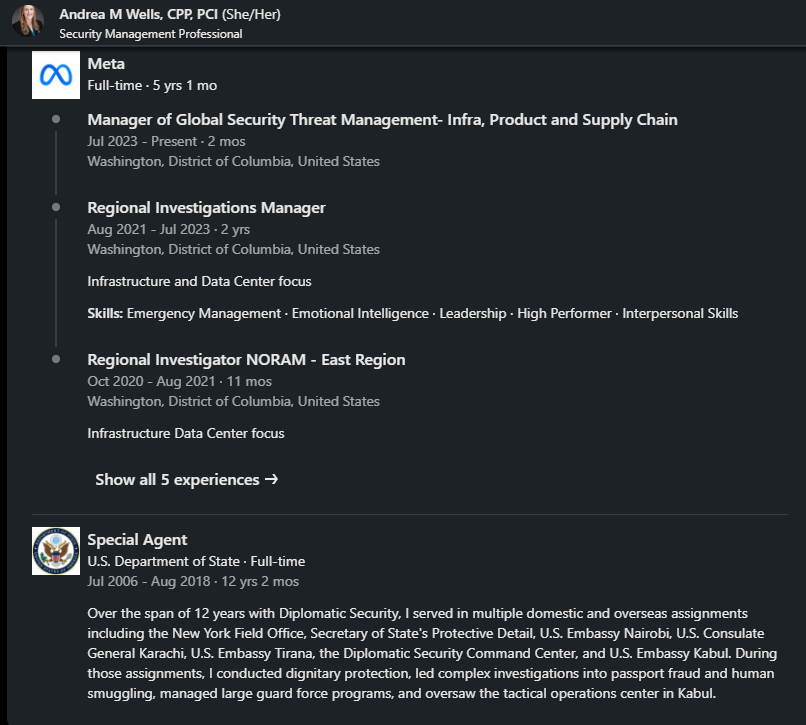

43. Andrea Wells- Joined Meta in 2018. She’s currently a Manager of Global Security Threat Management. Her prior experience is 12 years at State Department as a Special Agent linkedin.com/in/andreamwell… https://t.co/3xZO1zlAOf

@NameRedacted247 - Name Redacted

44. I've made my best effort to narrow down the results to Meta employees in Content Moderation, Public Policy, and Trust & Safety positions. The initial search yielded close to 400 names, but I excluded anyone with little to no relevant experience, such as interns or individuals with significant gaps in employment between their time in the Intelligence Community and joining Meta. Additionally, some employees' LinkedIn profiles are private, so the overall number is a rough estimate. Furthermore, it's worth noting that some employees have been laid off since my December 2022 thread, while others have either deleted their LinkedIn profiles or made them private. END

@NameRedacted247 - Name Redacted

20. Here is the full video, which is still available on YouTube. Moderated by CIA Renee DiResta from Stanford Internet Observatory. Includes Brian Clarke from Twitter Trust & Safety, Dr. Anne Merritt from Google & Aaron Berman from Facebook https://youtube.com/watch?v=hB_YNbnt8x4&t=90s…

@NameRedacted247 - Name Redacted

2. Aaron Berman spent over 17 years with the CIA before joining Facebook in 2019. He built their Misinformation Policy department and wrote most of the misinfo policy. Additionally, Berman says he 'represented Meta with external stakeholders.' This would include The White House, US Intel Agencies (FBI, CIA, DHS, CISA, etc.), foreign governments & intelligence, MSM, and more. He led the misinformation policy operation across all of Meta’s platforms for the 2020 US Election, COVID-19, the Ukraine War, and elections worldwide. http://linkedin.com/in/aarondberman/…

@NameRedacted247 - Name Redacted

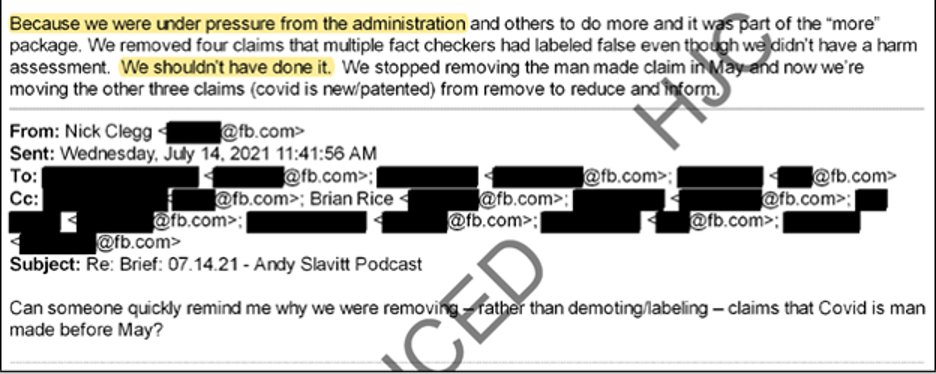

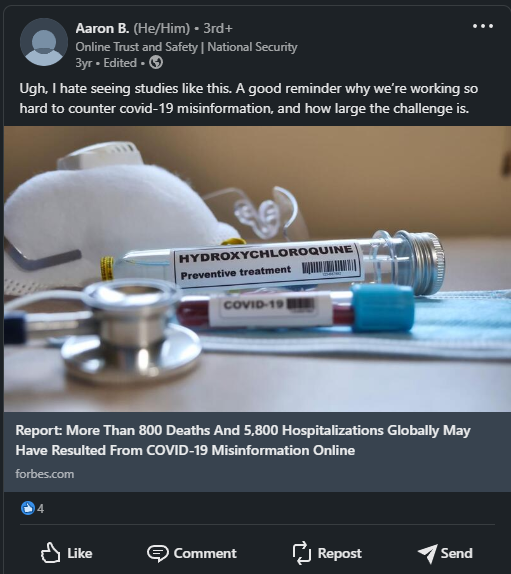

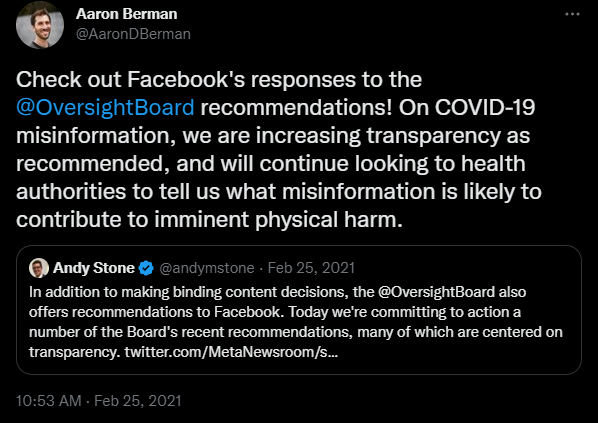

3. Aaron Berman is very active on his LinkedIn, with over 100 posts. Here is a post from 3 years ago, while Trump was President. Berman expressed his frustration with COVID 'misinfo' about Hydroxychloroquine and mentioned that Meta was working hard to counter COVID-19 misinformation. Wait….what? According to @Jim_Jordan's narrative, Facebook wouldn't have censored COVID content without pressure from Biden's White House. Biden wasn’t President in 2020. What’s going on here? Smoking Gun?

@NameRedacted247 - Name Redacted

4. In this next post, Aaron Berman stated that Meta was 'expanding' the false claims it removes on Facebook & Instagram about COVID and vaccines. Again, this was written on April 16, 2020. Who was the President in 2020? Trump. Therefore, @Jim_Jordan's claim that Facebook only censored COVID because of pressure from the Biden White House is misleading. https://about.fb.com/news/2020/04/covid-19-misinfo-update/

@NameRedacted247 - Name Redacted

@Jim_Jordan 5. In this post, Berman discusses how Facebook is promoting 'reliable' vaccine information to parents and enforcing their policies on harmful content related to children. He expresses his happiness about the FDA authorizing COVID vaccines for children.

@NameRedacted247 - Name Redacted

6. In the following tweets, you will see LinkedIn posts written by Aaron Berman that describe Meta’s extensive efforts to ‘combat misinformation’ in global elections. This is nothing more than election meddling, interference & rigging. In the first post, Berman talks about the 2020 US Election. He describes how Facebook displayed “warnings” on over 150 million pieces of content viewed on Facebook that were ‘debunked’ by one of Meta’s third-party fact checkers. Did Biden pressure Facebook to do this? In the second post, Berman ‘sends love’ to his fellow friends working on content moderation for the 2020 election and describes his efforts as being “neck-deep”

@NameRedacted247 - Name Redacted

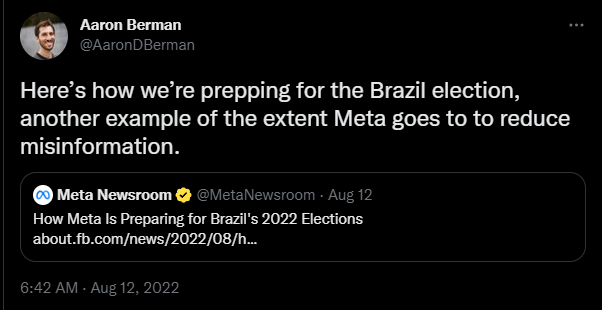

@Jim_Jordan 7. Berman writes in detail how Meta interfered in the 2022 Brazil election, by aggressively limiting the “forward messages” feature on WhatsApp and how Meta worked with 6 ‘fact checkers’ in Brazil @ggreenwald

@NameRedacted247 - Name Redacted

8. Berman posted about Meta’s election interference in Nigeria’s 2023 election, which included ‘partnering with local radio stations to create “NoFalseNewsZone” radio dramas in English & Pidgin.’ Meta also ran ads on Facebook & radio in 4 different languages – Yoruba, Pidgin, Hausa & Igbo.

@NameRedacted247 - Name Redacted

9. Berman posted about Meta’s election interference in Kenya’s 2022 election, which included partnering with fact checkers AFP, Pesa Check & Africa Check to review content in English & Swahili. Berman states they also relied on ‘guidance from local partners.’ Who might that be? Also seen here are Meta’s extensive efforts to “combat misinfo” for the 2022 Philippines election.

@NameRedacted247 - Name Redacted

10. This is nothing more than election interference by the largest social media platform with over 3 billion users worldwide. So- Is this all happening because of pressure from the “Biden White House”? Or is this a wider Intelligence Operation (Mockingbird) infiltrating Social Media to rig global elections, censor content on COVID, manipulate Americans on Ukraine War, Climate Change, and other important issues? Here’s more….

@NameRedacted247 - Name Redacted

@Jim_Jordan @ggreenwald 11. Berman posts on LinkedIn how Facebook & fact checkers are working in overdrive related to the Ukraine War. Also included is a tweet from Feb 2022, where he describes what Meta is doing to fight the spread of misinformation. He adds that Meta is working 24/7 on this.

@NameRedacted247 - Name Redacted

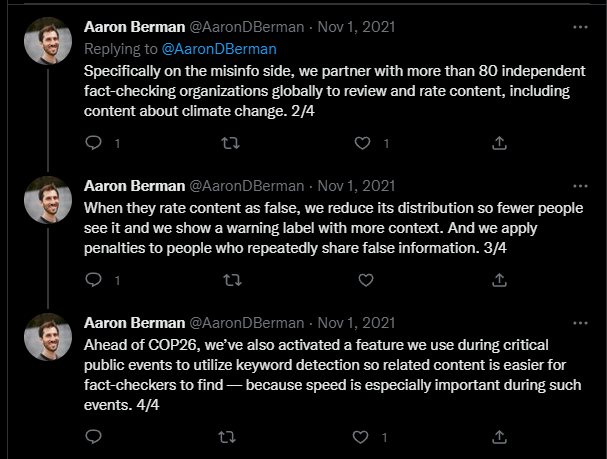

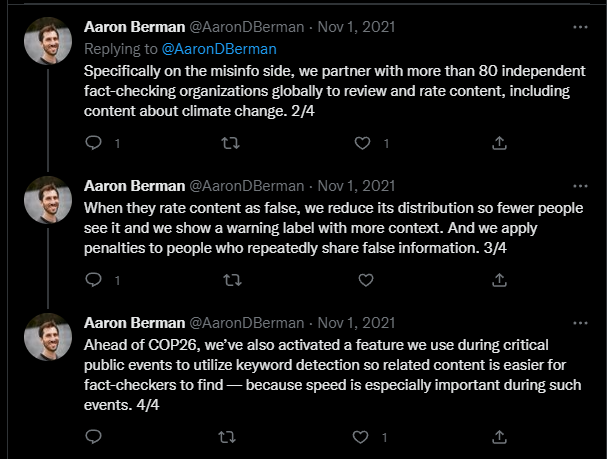

@Jim_Jordan @ggreenwald 12. Berman announces the launch of Facebook Climate Science Information Center to connect people to ‘authoritative info’ about climate change. He also tweeted how Meta has partnered with 80 independent fact checkers.

@NameRedacted247 - Name Redacted

13. In a very bizarre post on LinkedIn, Aaron Berman states that intelligence assessments are not meant to be crystal balls. He describes these assessments as having a range of plausible outcomes in order to help policymakers assess risks & ‘shape events’ accordingly. Lastly he states, “EVEN AN ASSESSMENT THAT GETS THE PREDICTION WRONG MAY BE EXACTLY WHAT IS NEEDED.” What does he mean by this?

@NameRedacted247 - Name Redacted

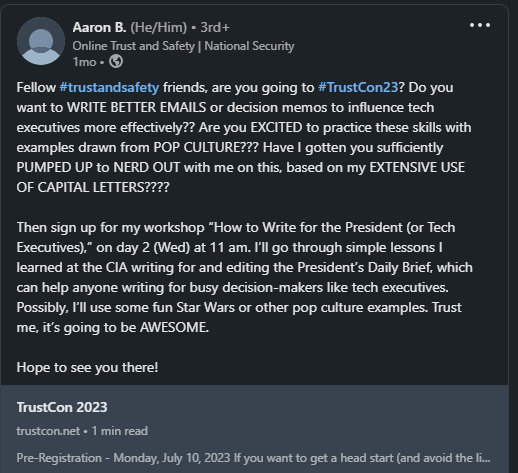

@Jim_Jordan @ggreenwald 14. Just this month, Aaron Berman attended TrustCon23, where he taught a workshop on how to write better emails in order to “influence tech executives more effectively.” Interesting.

@NameRedacted247 - Name Redacted

15. Apparently there is an annual Global Fact Checker conference called “Global Fact”. It is hosted by Poynter’s International Fact Checking Network. This event was sponsored by Google, Meta, TikTok and News Initiative and held in Oslo, Norway. Aaron Berman was in attendance, of course, & spoke at the conference.

@NameRedacted247 - Name Redacted

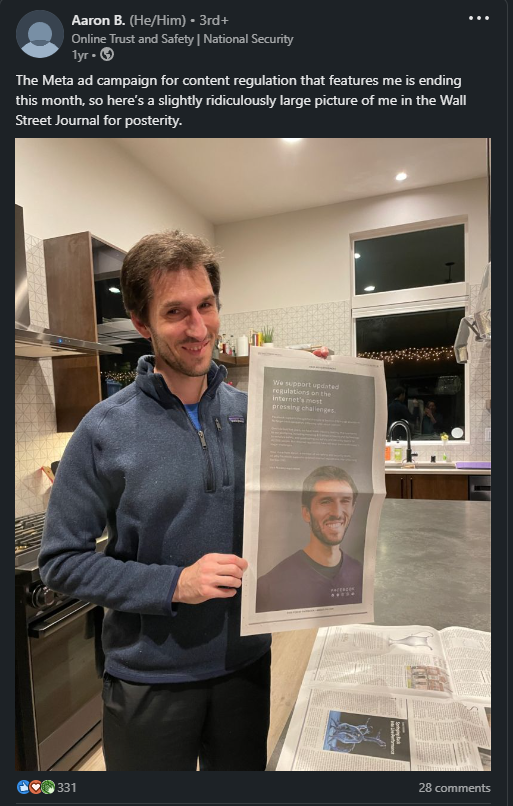

@Jim_Jordan @ggreenwald 17. Aaron Berman was also featured in an ad campaign for Meta https://about.meta.com/regulations/

@NameRedacted247 - Name Redacted

19. There are an alarming number of other Intelligence Community operatives currently working at Meta in Trust & Safety and other departments. I wrote a thread about this in December 2022. Some of you have seen it, but I will be publishing a new thread that highlights more people later this week. Stay tuned

@NameRedacted247 - Name Redacted

20. Here is the full video, which is still available on YouTube. Moderated by CIA Renee DiResta from Stanford Internet Observatory. Includes Brian Clarke from Twitter Trust & Safety, Dr. Anne Merritt from Google & Aaron Berman from Facebook https://www.youtube.com/watch?v=hB_YNbnt8x4&t=90s

@NameRedacted247 - Name Redacted

MAJOR UPDATE: Aaron Berman, former 17-year CIA officer, is now “Head of Elections Policy” for Facebook & Instagram. Berman joined Facebook in 2019 and was responsible for writing Misinformation Policy & enforcing it for the 2020 election, COVID, Brazil elections, etc. He is joined by 15 other former CIA, FBI & DHS personnel working in Trust & Safety. LinkedIn - https://linkedin.com/in/aarondberman @RobertKennedyJr @elonmusk https://t.co/o7oLq4oAWr

@NameRedacted247 - Name Redacted

1. Google currently employs at least 165 people, in high-ranking positions, from the Intelligence Community. Google’s Trust & Safety team is managed by 3 ex-CIA agents, who control “misinfo & hate speech.” Here’s the breakdown: CIA-27 FBI-52 NSA-30 DHS-50 ODNI-6 Thread🧵

@NameRedacted247 - Name Redacted

2. Since the 2016 Presidential election, Google/Facebook/Twitter have hired at least 300 people formerly employed by CIA, FBI, etc. Ex-CIA agents are Heads of Trust & Safety at Google & Facebook. Is it OK that ex-CIA agents control what “misinfo” is?

@NameRedacted247 - Name Redacted

3. Nick Rossmann (He/Him)– Current Google Senior Manager Trust & Safety. Former CIA Analyst 5 years. https://www.linkedin.com/in/nickrossmann/

I am an experienced manager skilled in growing teams developing strategic insights for decision-makers. I am an effective relationship builder, well-versed in leading in cross-functional organizations - with product management, communications, legal, and public policy - to drive security results. I have proven skills in prioritizing and managing multiple projects with high visibility.

In the private sector, I have overseen the IBM threat intelligence group with a focus on organizational culture to permeate a "whatever it takes" attitude. I have grown a team of 12 analysts to 40 threat hunters, reverse engineers, and developers with a global voice on cybersecurity threats and protect global companies. I have launched threat intelligence research into the public to take analysts' insight to cybersecurity decision-makers around the globe, driving news coverage and market access.

While in government as a CIA intelligence analyst, I developed innovative intelligence products to address customers' needs. As an analyst covering a unique target - rule of law issues in the Middle East - I developed collaborative working relationships with intelligence customers to identify their analytic needs and use interviews and debriefings to leverage their knowledge for leaders across the community.

I readily take "personal leadership" regardless of my role in the organization - whether volunteering to lead interagency working groups, mentoring junior employees development gaps, and developing solutions for clients.

@NameRedacted247 - Name Redacted

4. Rossmann has posted dozens of troubling tweets on his Twitter account. Many tweets show his disdain for President Trump, Trump’s family, Trump voters & white people. Reminder- the following tweets are from a Senior Manager of “TRUST & SAFETY” at Google & former CIA analyst:

@NameRedacted247 - Name Redacted

5. In March 2020, while COVID infections were exploding, Rossmann, in a tweet directed at people who voted for Trump, stated: “I hope they cough on their grandparents, who voted for Trump, & get to rot” What did he mean by this? https://archive.vn/rppqw

@NameRedacted247 - Name Redacted

6. Rossmann, in a series of anti-white people tweets, states, “Anti-vaxxers are like Nazis” https://archive.vn/YWMDD https://archive.vn/ZdKeT https://archive.vn/PYgWh https://archive.vn/rOOpB

@NameRedacted247 - Name Redacted

8. Rossmann calls President Trump a “lunatic & racist” https://archive.vn/Pk5Kh

@NameRedacted247 - Name Redacted

9. Rossmann asks Trump if he’s an agent of a foreign power – https://archive.vn/xi7t8

@NameRedacted247 - Name Redacted

10. Rossmann tweets “Enjoy prison” to @EricTrump https://archive.vn/rsWmI

@NameRedacted247 - Name Redacted

11. Rossmann tweets that @Jaredkushner “should be strangled”- https://archive.vn/4C6rZ

@NameRedacted247 - Name Redacted

12. Jacqueline Lopour (She/Her)– Current Google Senior Manager Trust & Safety. Former CIA analyst 10 years. https://www.linkedin.com/in/jacqueline-l-23322072/

@NameRedacted247 - Name Redacted

13. Jacqueline is a proponent of the Russia-gate conspiracy theory. She states, emphatically, “They (Russia) deliberately released the DNC information to @wikileaks …with the specific motivation of getting Trump elected.” Full video link - https://www.cbc.ca/player/play/831297091976

@NameRedacted247 - Name Redacted

14. In a video posted on Facebook, Jacqueline made clear which candidate she, and the Intelligence Community, prefers- Hillary Clinton https://fb.watch/hDGbFzDoKr/

@NameRedacted247 - Name Redacted

15. I won’t list every employee in this thread, as it’s quite extensive. Below, I’ll highlight some of the more notable Senior Management roles at Google held by former Intelligence Community personnel:

@NameRedacted247 - Name Redacted

16. Dawn Burton (She/Her)- Current Google Director/Chief of Staff Privacy & Safety. Former Twitter Senior Director Trust & Safety 3 years. Former FBI Deputy Chief of Staff to Former Director James Comey- 4 years. Former DOJ 6 years https://www.linkedin.com/in/dawn-b-39dk394kf/

@NameRedacted247 - Name Redacted

17. Jacob Barrett – Current Google Director Trust & Safety. Former CIA 7 years. https://www.linkedin.com/in/jacobgbarrett/

@NameRedacted247 - Name Redacted

18. Beth Schmierer – Current Google Intelligence Manager. Former CIA Analyst 5 years. Former Department of State Diplomat in Spain 10 years. https://www.linkedin.com/in/beth-schmierer-b307aa222/

@NameRedacted247 - Name Redacted

19. Chelsea Magnant – Current Google Cybersecurity Policy Manager. Former CIA Analyst 9 years. https://www.linkedin.com/in/chelsea-m-68b667103/

@NameRedacted247 - Name Redacted

20. Katherine Tobin (She/Her). Current Google Head of Workspace Innovation. Former ODNI 7 years. Former CIA Branch Chief 4 years. https://www.linkedin.com/in/katherine-tobin/

@NameRedacted247 - Name Redacted

21. Yong Suk Lee- Current Google Director Global Risk Analysis. Former CIA analyst 22 years. https://www.linkedin.com/in/yong-suk-lee-7724a318a/

Visiting Scholar, Hoover Institution, Stanford University

Senior Fellow, Asia Program, Foreign Policy Research Institute

Member, Association of Threat Assessment Professionals

@NameRedacted247 - Name Redacted

22. Crystal Lister – Current Google Security & Trust Center Program Manager. Former CIA Cyber & Counterintelligence 9 years. https://www.linkedin.com/in/crystallister/

@NameRedacted247 - Name Redacted

23. Amber Johnson – Current Google Head of Global Communications. Former CIA 8 years. https://www.linkedin.com/in/amberchristina/

@NameRedacted247 - Name Redacted

24. Connie LaRossa – “Obama/Biden Alum” Current Google National Security Policy. Former Department of Defense 5 years. Former DHS 7 years. https://www.linkedin.com/in/connie-larossa-5a6bb077/

@NameRedacted247 - Name Redacted

25. Robert Chung- Current Google Key Account Executive. Former NSA Director Intelligence 2 years. Former US Army Intelligence Manager 11 years. Former State Department 1 year. https://www.linkedin.com/in/rob-chung/

@NameRedacted247 - Name Redacted

26. Adam Calabro- Current Google Cybercrime Manager. Former NSA analyst 7 years. https://www.linkedin.com/in/adam-calabro-6772a5109/

@NameRedacted247 - Name Redacted

27. Kingman Wong- Current Google Security Compliance Lead. Former FBI special agent 25 years. https://www.linkedin.com/in/kingman-k-wong-phd/

@NameRedacted247 - Name Redacted

28. Heather Dagostino – Current Google Program Manager. Former FBI Intel Analyst 11 years. https://www.linkedin.com/in/heatherdagostino/

@NameRedacted247 - Name Redacted

29. Pamela Cerria- Current Google Program Manager. Former Department of Defense 3 years. Former DHS 3 years. https://www.linkedin.com/in/pamela-cerria-0647a345/

@NameRedacted247 - Name Redacted

30. Jeremy Warner- Current Google Manager Customer Success. Former CIA Senior Intelligence Analyst 7 years. https://www.linkedin.com/in/jeremy-warner/

@NameRedacted247 - Name Redacted

31. Lauren Kelly – Current Google Office of CFO. Former Biden-Harris Transition Team. Former Director in Obama White House 6 years. Former DHS 2 years. https://www.linkedin.com/in/lauren-kelly-b4252582/

@NameRedacted247 - Name Redacted

32. Reminder- Twitter currently employs at least 15 former FBI agents. Since Jim Baker was fired (after interfering with #TwitterFiles release), @Elonmusk has been asked, numerous times, how many FBI agents remain at Twitter. He refuses to answer. Why? https://t.co/0Qn09iT5UP

@NameRedacted247 - Name Redacted

33. Last week, we learned that Facebook’s Head of Trust & Safety is a 17-year former CIA Analyst- Aaron Berman. https://t.co/UclWuL90D0

@NameRedacted247 - Name Redacted

34. Why, since 2016, did Twitter, Facebook & Google go on a hiring blitz of former CIA, FBI, NSA, etc. and assign them to high level managerial positions, many of which oversee “misinformation” and censorship policy?

@NameRedacted247 - Name Redacted

35. Given the coordination we’ve seen between FBI & Twitter in #TwitterFiles, there should be more media coverage on hiring practices at Big Tech. @JudiciaryGOP @Jim_Jordan should investigate why former IC agents are embedded in high ranks of the largest social media companies.

@NameRedacted247 - Name Redacted

1. After learning that Twitter employs at least 15 former FBI agents, I searched Facebook. What I found is alarming. Facebook currently employs at least 115 people, in high-ranking positions, who formerly worked at FBI/CIA/NSA/DHS: 17 CIA, 37 FBI, 23 NSA, 38 DHS. Thread🧵

@NameRedacted247 - Name Redacted

2. All but a few of the former intelligence agents were hired by Facebook after the 2016 Presidential Election & after the FBI established its social media-focused task force, FITF.

@NameRedacted247 - Name Redacted

3. As @mtaibbi detailed in #TwitterFiles Part 6, we know there was massive coordination of censorship between the FBI & Twitter during 2020-2022. Who is controlling “misinfo” censorship at Facebook? Is there similar coordination between Facebook & the Intelligence community?

@NameRedacted247 - Name Redacted

4. The following is a list (obtained through PUBLICLY available LinkedIn profiles) of former CIA/FBI/NSA/DHS employees who are currently working at Facebook. At least 10 work in the Trust & Safety (Misinfo) department. Many of the LinkedIn profiles are private, so those will not be posted.

@NameRedacted247 - Name Redacted

5. Aaron Berman (He/Him) leads the Misinformation Policy team at Facebook. According to Aaron’s public LinkedIn profile, he worked for the CIA for 17 years. https://www.linkedin.com/in/aarondberman/

@NameRedacted247 - Name Redacted

6. Aaron states that his experience at the CIA included writing the President’s Daily Brief and leading briefings for Cabinet members, senior NSC officials & members of Congress.

@NameRedacted247 - Name Redacted

7. On Twitter, Aaron is followed by Yoel Roth & admits he is friends with Trust & Safety people at Twitter. Was Facebook coordinating with Twitter on info-sharing to censor posts they deem as ‘misinfo’? archive.vn/7r2vX

@NameRedacted247 - Name Redacted

8. Aaron admits to specific Facebook campaigns where he tackles “misinfo.” Re: COVID19, they allow ‘health authorities’ to guide what Facebook should label as misinformation. archive.vn/85N7v

@NameRedacted247 - Name Redacted

9. In a YouTube discussion with Stanford, Aaron admits that Facebook works with a “Global network of over 80 fact checker Organizations” who direct Facebook on which posts to reduce distribution, add warning labels & shadowban.

@NameRedacted247 - Name Redacted

10. Aaron discusses in detail the lengths Facebook goes to in censoring what they deem to be COVID19 misinfo, specifically on vaccines.

@NameRedacted247 - Name Redacted

11. Here is the entire YouTube video where Aaron and members from Twitter & Google discuss misinformation censoring https://www.youtube.com/watch?v=hB_YNbnt8x4&t=90s

@NameRedacted247 - Name Redacted

15. Aaron tweeted that the CIA backs insurgency groups archive.vn/i8KiE

@NameRedacted247 - Name Redacted

16. “As a current combatant against misinfo and former intelligence officer” archive.vn/9jAkq

@NameRedacted247 - Name Redacted

17. Climate change censorship & again, Aaron states that Facebook partners “with more than 80 independent fact-checking organizations” archive.vn/gArWb archive.vn/6ijCS

@NameRedacted247 - Name Redacted

18. Deborah B. (She/Her). Current Facebook Trust & Safety. Former CIA Analyst 15 years. https://www.linkedin.com/in/deborah-b-219225225/

@NameRedacted247 - Name Redacted

19. Scott S. (He/Him). Current Facebook Senior Manager Trust & Safety. Former CIA 7 years. https://www.linkedin.com/in/scottbstern/

@NameRedacted247 - Name Redacted

20. Bryan Weisbard. Current Facebook Trust & Safety. Formerly 9 years of “multiple senior level leadership positions in US Government Intelligence Community.” Former Twitter Online Safety & Security Analysis 4 years. Former YouTube Trust & Safety 1 year. https://www.linkedin.com/in/bryanweisbard/

@NameRedacted247 - Name Redacted

21. Hagan Barnett. Current Facebook Trust & Safety Operations Lead. Former Self Employed Contractor CIA 1 year, Booz Allen 4 years, US Department of Treasury 3 years. https://www.linkedin.com/in/haganbarnett/

@NameRedacted247 - Name Redacted

22. Jeff Lazarus. Current Facebook Trust & Safety. Former Apple Trust & Safety 1 year. Former Google Trust & Safety 4 years. Former CIA 5 years. https://www.linkedin.com/in/jeff-lazarus-b76846191/

@NameRedacted247 - Name Redacted

23. Chon Rosa. Current Facebook Trust & Safety. Former US Army Intelligence & Security Command 4 years. https://www.linkedin.com/in/chon-c-rosa/

@NameRedacted247 - Name Redacted

24. Jason Barry. Current Facebook Trust & Safety Manager. Former DHS 7 years. https://www.linkedin.com/in/jason-barry-808536a9/

@NameRedacted247 - Name Redacted

25. Rick Cavalieros. Current Facebook Trust & Safety Manager. Former FBI 21 years. https://www.linkedin.com/in/rick-cavalieros-17a1198/

@NameRedacted247 - Name Redacted

26. Sandeep A. (He/Him). Current Senior Investigator Trust & Safety. Former NSA SIGINT Lead Analyst 4 years. https://www.linkedin.com/in/sandeep-abraham/

@NameRedacted247 - Name Redacted

27. Amarpreet G. (She/Her). Current Facebook Product Integrity, Elections. Former FBI 6 years. https://www.linkedin.com/in/amarpreet-ghuman/

@NameRedacted247 - Name Redacted

28. Brian Kelley. Current Facebook Law Enforcement Outreach Manager. Former FBI 7 years. https://www.linkedin.com/in/briankelley0717/

@NameRedacted247 - Name Redacted

29. Aleah Houze. Current Facebook Product Policy Manager. Former NSA 7 years. https://www.linkedin.com/in/aleah-houze/

@NameRedacted247 - Name Redacted

30. Shawn Turskey. Current Facebook Global Director Security Investigations. Former NSA 19 years. Former US Cyber Command 4 years. https://www.linkedin.com/in/shawnturskey/

@NameRedacted247 - Name Redacted

31. Mike Torrey. Current Facebook Security Engineer Investigator. Former NSA 3 years. Former CIA 9 years. https://www.linkedin.com/in/mike-torrey-01658b14a/

@NameRedacted247 - Name Redacted

32. Corey Ponder. Current Facebook Senior Strategist. Former Policy Consultant DHS 7 months. Former CIA 6 years. Former Policy Advisor Google 2 years. https://www.linkedin.com/in/coreytponder/

@NameRedacted247 - Name Redacted

33. John Papp (He/Him). Current Facebook Infrastructure ASIC Sourcer. Former DIA 4 years. Former CIA 12 years. https://www.linkedin.com/in/johnpapp/

@NameRedacted247 - Name Redacted

34. Nick Lovrien (He/Him). Current Facebook Chief Global Security. Current Board Director US State Department. Former CIA 5 years. https://www.linkedin.com/in/nick-lovrien-cpp-a5a98392/

Nick is a recognized expert in global security strategy, risk management, geopolitics, and national security, with a unique background in both the public (CIA) and private sectors. He serves on the Board of Directors for the US Department of State Overseas Security Advisory Council, the International Security Foundation, and the Silicon Valley Leadership Group. He has received multiple awards and honors, including the Don Walker Chief Security Officer Of The Year Award in 2020. Nick is also a passionate advocate for diversity, equity, and inclusion, and a proud executive sponsor for Meta's LGBTQ+ resource group. He is fluent in English, Portuguese, and Spanish.

@NameRedacted247 - Name Redacted

35. Cameron H. Current Facebook Workflow Risk Project Manager. Former CIA 4 years. https://www.linkedin.com/in/cameron-h-759a9b191/

@NameRedacted247 - Name Redacted

36. Andi Allen (She/Her). Current Facebook Senior Technical Recruiter. Current “Talent Partner” for https://helpukraine22.org/. Former CIA 4 years. https://www.linkedin.com/in/andi-allen-634a9a89/

@NameRedacted247 - Name Redacted

37. Travis M. Current Facebook Technical Investigator. Former NSA 10 years. https://www.linkedin.com/in/travis-m/

@NameRedacted247 - Name Redacted

38. Keith Pridgen. Current Facebook Program Manager. Former NSA 2 years. Former US Navy Information Warfare Officer 7 years. https://www.linkedin.com/in/keithpridgen/

@NameRedacted247 - Name Redacted

39. Daniel Kaiser. Current Facebook Research Data Scientist. Former NSA 2 years. https://www.linkedin.com/in/daniel-kaiser-a397a419b/

• Exceptionally skilled in deep learning with neural networks, machine learning, applied mathematics, and algorithmic design.

• Expert knowledge of Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP) cloud environments.

• Proven experience in big data analytics, including analyzing data on a Hadoop cluster.

• Presented to key stakeholders and corporate executives.

• Experienced in model development, testing using Anaconda/pip, and deployment using Docker images running on Kubernetes cluster and on custom cloud architectures.

• Deep knowledge of recommender systems, computer vision, natural language processing (NLP), time series analysis, and Bayesian optimization.

• Used wide array of Python packages including XGBoost, PyTorch, TensorFlow, Keras, gensim, scikit-learn, GPyOpt, pandas, NumPy, and PyMongo.

• Fluent in Python/Jupyter Notebooks, Spark (PySpark), Java, C++, R, Pig, SQL, MongoDB, and Matlab.

@NameRedacted247 - Name Redacted

40. Jerrod Lowmaster. Current Facebook/Instagram Data Scientist. Former NSA 5 years. https://www.linkedin.com/in/jerrodlowmaster/

Big Data

Hadoop - MapReduce - Apache Pig - Hive

Scripting and Scientific Computing in Python - IPython -Numpy -Pandas - Matplotlib - Bokeh - scikit-learn - flask

Databases - Data Warehousing - MySql - ETL

Statistics - Econometrics - Machine Learning

Linux - Bash Scripting

Funnel Analysis

@NameRedacted247 - Name Redacted

41. Gabrielle Johnson (She/Her). Current Facebook Platform Investigator. Former NSA Deputy Office Chief 2 years. https://www.linkedin.com/in/gabrielle-t-johnson/

@NameRedacted247 - Name Redacted

42. Michael Khbeis (He/Him). Current Facebook Director of Operations. Former NSA 10 years. https://www.linkedin.com/in/michael-khbeis-3201778/

@NameRedacted247 - Name Redacted

43. Josh Bulluck. Current Facebook SPARQ Manager. Former NSA Signals Intelligence Analyst 2 years. Former US Army Intelligence Analyst 7 years. https://www.linkedin.com/in/jsbulluck/

@NameRedacted247 - Name Redacted

44. Eric Gonzalez. Current Facebook Systems Project Manager. Former NSA 3 years. Former US Navy Cryptologic Warfare Officer 2 years. https://www.linkedin.com/in/erictgonzalez/

- Leading Technical Teams in Developing & Deploying Software Products for 50k+ End Users

- Large Scale (1m+) Data Labeling support for AI/ML model training.

- Driving Department of Defense Information Security Compliance Standards for multiple organizations

- Implementing Privacy & Technical Security Controls (i.e. SOC2)

- Developing Threat Intelligence Analysis Tradecraft

- Information & Influence Operations Analysis

- Big Data Collection & Analysis

- Building cross-functional teams

- Advising senior executive decision makers.

- Effectively defining customer product security requirements

- Data Privacy & Digital Rights advocate ✊🏾

- Podcast host | The Tech Amendment 🎙

- DJ | https://soundcloud.com/doyouwepa 🤘🏾 🎧

Honored to have sailed ships, flown planes, and served in the Intelligence Community in support of U.S. National Security. 🇺🇸

Credentials:

M, Eng., Cybersecurity Policy, PMP, CSM, AWS-CCP

@NameRedacted247 - Name Redacted

45. Seth Summersett. Current Facebook Head of Security Partners. Former NSA 8 years. https://www.linkedin.com/in/seth-summersett-057b081a0/

@NameRedacted247 - Name Redacted

46. Brian McFarland (He/Him). Current Facebook Security Partner. Former NSA Cryptographer 3 years. https://www.linkedin.com/in/brian-mcfarland-3a64126/

@NameRedacted247 - Name Redacted

47. Mike D. Current Facebook Threat Intelligence Manager. Former FBI 13 years. https://www.linkedin.com/in/mike-d-48b149b3/

@NameRedacted247 - Name Redacted

48. Steve Goldman. Current Facebook Acute Issue Management. Former FBI 26 years. https://www.linkedin.com/in/steve-goldman-1b656943/

@NameRedacted247 - Name Redacted

49. Jennifer A. Current Facebook Global Intelligence Lead. Former Foreign Affairs Officer US Department of State. Former FBI 6 years. https://www.linkedin.com/in/jennifer-a-4b7632/

@NameRedacted247 - Name Redacted

50. Steven S. Current Facebook Director & Associate General Counsel. Former DOJ Trial Attorney 5 years. Former FBI Deputy General Counsel 8 years. https://www.linkedin.com/in/steven-s-36819437/

@NameRedacted247 - Name Redacted

51. Cynthia Deitle (She/Her). Current Facebook Director, Associate General Counsel. Former FBI 19 years. https://www.linkedin.com/in/cynthia-deitle-jd-ll-m-3a9991141/

@NameRedacted247 - Name Redacted

52. Tromila Maile. Current Facebook FIU Investigator. Former FBI Intelligence Analyst 3 years. https://www.linkedin.com/in/tromila-maile/

@NameRedacted247 - Name Redacted

53. Tim Hadley. Current Facebook Data Center Manager. Former FBI 17 years. https://www.linkedin.com/in/tim-hadley-3958888/

@NameRedacted247 - Name Redacted

54. Jeffrey K. Van Nest. Current Facebook In-House Counsel. Former FBI 20 years. https://www.linkedin.com/in/jeffrey-k-van-nest-0812b81a/

@NameRedacted247 - Name Redacted

55. Meredith Burkett. Current Facebook Anti-Scraping Investigator. Former FBI 6 years. https://www.linkedin.com/in/meredith-burkett-57650bb4/

@NameRedacted247 - Name Redacted

56. Leo M. Current Facebook Threat Investigator. Former FBI 7 years. Former DOD 4 years. https://www.linkedin.com/in/leo-m-359567169/

@NameRedacted247 - Name Redacted

57. Keith Allan. Current Facebook Corporate Strategy & Global Operations. Former FBI 5 years. https://www.linkedin.com/in/keitheallan/

@NameRedacted247 - Name Redacted

58. Anthony S. Current Facebook Business Integrity Specialist. Former FBI 8 years. https://www.linkedin.com/in/kysmith99/

@NameRedacted247 - Name Redacted

59. Christina F. Current Facebook Security Engineer Investigator. Former FBI 5 years. https://www.linkedin.com/in/christinafowler1/

@NameRedacted247 - Name Redacted

60. Reminder: All of these Facebook employees publicly list their work experience. I’ve labeled their names “as listed” on their LinkedIn profiles. Anyone can do a simple LinkedIn search & find the same. Current Company: Facebook/META. Past Company: FBI/CIA/DHS/NSA. 115 results.