TruthArchive.ai - Tweets Saved By @srishticodes

@srishticodes - Srishti

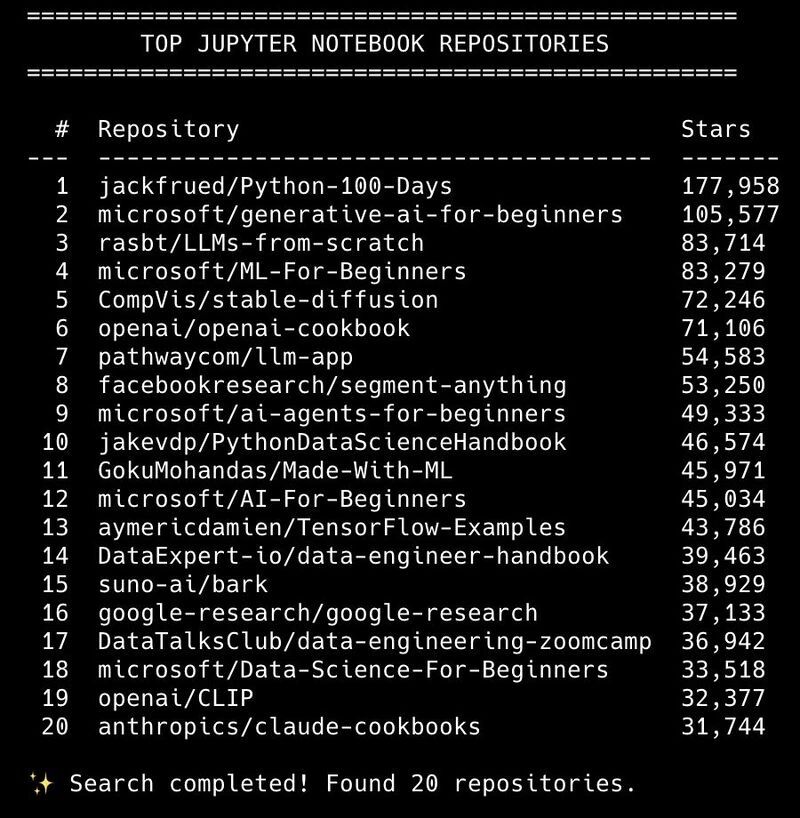

The 10 Most Valuable AI Learning Repositories on GitHub

I analyzed the top GitHub repositories where Jupyter Notebooks (.ipynb) are the primary format and filtered out pure hype, keeping only the most practical, structured learning resources. Here are the 10 repositories that will actually make you better at AI 👇

1. microsoft/generative-ai-for-beginners ⭐ ~105k
   Full repo for Microsoft’s Generative AI course, with Jupyter notebooks and lessons on building GenAI apps.
   🔗 https://github.com/microsoft/generative-ai-for-beginners
2. rasbt/LLMs-from-scratch ⭐ ~83k
   Educational implementation of GPT-style LLMs from scratch (code + notebooks).
   🔗 https://github.com/rasbt/LLMs-from-scratch
3. microsoft/ai-agents-for-beginners ⭐ ~49k
   Course on building agentic AI systems: tools, memory, planning, and workflows.
   🔗 https://github.com/microsoft/ai-agents-for-beginners
4. microsoft/ML-For-Beginners ⭐ ~83k
   Classic machine learning fundamentals curriculum (26 lessons).
   🔗 https://github.com/microsoft/ML-For-Beginners
5. openai/openai-cookbook ⭐ ~71k
   Official OpenAI API examples, production-ready patterns, recipes, and demos in notebooks.
   🔗 https://github.com/openai/openai-cookbook
6. jackfrued/Python-100-Days ⭐ ~177k
   Intensive Python learning roadmap with 100 days of exercises/notebooks.
   🔗 https://github.com/jackfrued/Python-100-Days
7. pathwaycom/llm-app ⭐ ~54k
   RAG templates and real-world, deployable LLM apps (production-ready pipelines).
   🔗 https://github.com/pathwaycom/llm-app
8. jakevdp/PythonDataScienceHandbook ⭐ ~46k
   Foundational data science notebook collection (NumPy, Pandas, Matplotlib, Scikit-Learn).
   🔗 https://github.com/jakevdp/PythonDataScienceHandbook
9. CompVis/stable-diffusion ⭐ ~72k
   Original Stable Diffusion text-to-image model code (excellent learning material).
   🔗 https://github.com/CompVis/stable-diffusion
10. facebookresearch/segment-anything ⭐ ~53k
    Meta’s Segment Anything Model (SAM) for interactive image segmentation.
    🔗 https://github.com/facebookresearch/segment-anything

@srishticodes - Srishti

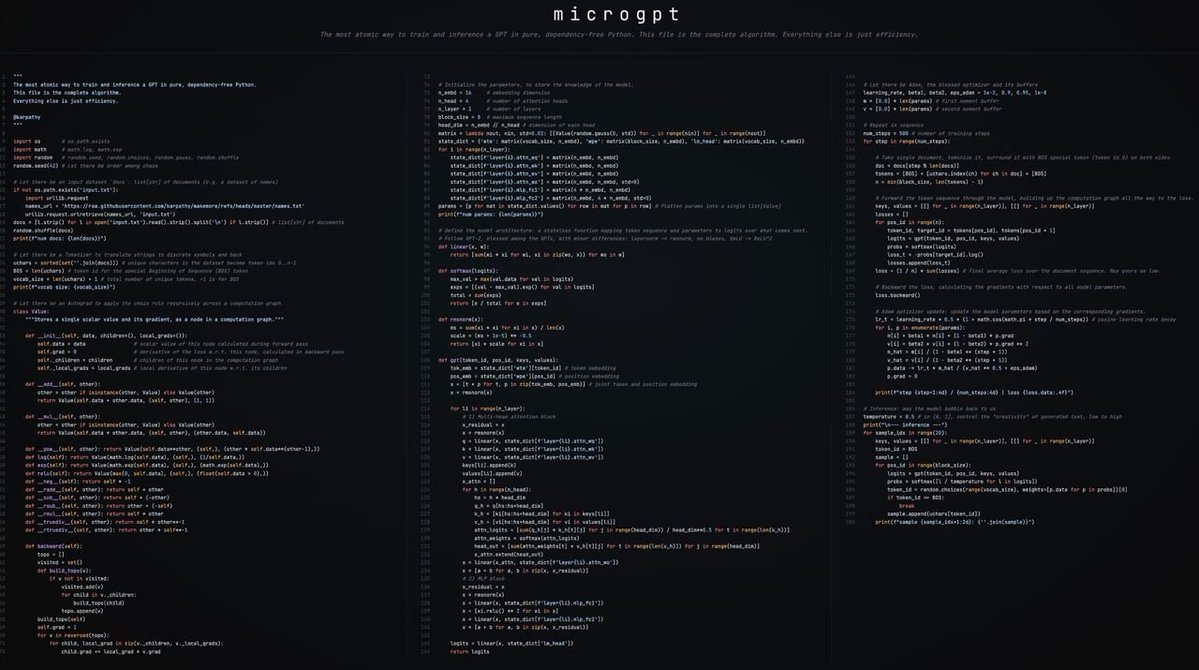

Most beautiful code I have seen shared in public recently. Built by Andrej Karpathy: a single file of ~200 lines of pure Python, with no dependencies, that trains a GPT and runs inference with it. This is how LLMs should be taught to everyone getting into them.

This might be the cleanest, most elegant public code drop in AI this year.

Karpathy's new "art project": microgpt (https://karpathy.github.io/2026/02/12/microgpt/)
→ Single Python file (~200 lines)
→ No PyTorch, no NumPy, no external libraries at all
→ Full working GPT: data loading → character tokenizer → tiny autograd engine → GPT-2-style transformer → Adam optimizer → training loop → inference/sampling

It's the bare-metal essence of what makes large language models tick. Everything else (CUDA kernels, distributed training, mixed precision, flash attention, massive datasets…) is optimization and engineering around this core.

Perfect starting point for anyone trying to truly understand LLMs instead of just calling APIs. Highly recommend reading and running it. It turns "AI is just matrix multiplies + softmax" from abstract into concrete.
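To make a few of those pipeline stages concrete, here is a hypothetical toy sketch in the same dependency-free spirit. It is not the microgpt source. It is a small illustration, loosely modelled on Karpathy's earlier micrograd project, of three listed stages: a character tokenizer, a tiny scalar autograd engine, and an Adam training loop. To stay short it assumes a bigram table of next-character logits in place of the GPT-2-style transformer and omits sampling. All names (`Value`, `build_vocab`, `encode`, `decode`, `cross_entropy`, `train_bigram`) and the hyperparameters are illustrative assumptions.

```python
import math

# Hypothetical toy sketch, NOT the microgpt source: a bigram logit table stands
# in for the transformer so the tokenizer/autograd/Adam stages stay visible.


class Value:
    """A scalar node in a computation graph that can backpropagate gradients."""

    def __init__(self, data, children=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._children = children        # Values this node was computed from
        self._local_grads = local_grads  # d(self)/d(child), one per child

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        return Value(self.data * other.data, (self, other), (other.data, self.data))

    def __neg__(self):
        return self * -1.0

    def __sub__(self, other):
        return self + (-other)

    def exp(self):
        e = math.exp(self.data)
        return Value(e, (self,), (e,))

    def log(self):
        return Value(math.log(self.data), (self,), (1.0 / self.data,))

    def backward(self):
        # Topologically sort the graph, then apply the chain rule output -> leaves.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for child in node._children:
                    visit(child)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for child, local in zip(node._children, node._local_grads):
                child.grad += local * node.grad


def build_vocab(text):
    # Character-level tokenizer: every distinct character gets an integer id.
    chars = sorted(set(text))
    stoi = {ch: i for i, ch in enumerate(chars)}
    return stoi, {i: ch for ch, i in stoi.items()}


def encode(text, stoi):
    return [stoi[ch] for ch in text]


def decode(ids, itos):
    return "".join(itos[i] for i in ids)


def cross_entropy(logits, target):
    # -log softmax(logits)[target] = log(sum_j exp(z_j)) - z_target (naive, no max-shift)
    total = sum((z.exp() for z in logits), Value(0.0))
    return total.log() - logits[target]


def train_bigram(text, steps=100, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """Fit next-character logits W[a][b] with Adam; returns (W, losses, stoi, itos)."""
    stoi, itos = build_vocab(text)
    ids = encode(text, stoi)
    pairs = list(zip(ids, ids[1:]))
    W = [[Value(0.0) for _ in stoi] for _ in stoi]
    params = [p for row in W for p in row]
    m = [0.0] * len(params)  # Adam first-moment (mean) estimates
    v = [0.0] * len(params)  # Adam second-moment (uncentered variance) estimates
    losses = []
    for t in range(1, steps + 1):
        loss = sum((cross_entropy(W[a], b) for a, b in pairs), Value(0.0)) * (1.0 / len(pairs))
        for p in params:
            p.grad = 0.0
        loss.backward()
        losses.append(loss.data)
        for i, p in enumerate(params):
            m[i] = beta1 * m[i] + (1 - beta1) * p.grad
            v[i] = beta2 * v[i] + (1 - beta2) * p.grad ** 2
            m_hat = m[i] / (1 - beta1 ** t)  # bias-corrected moments
            v_hat = v[i] / (1 - beta2 ** t)
            p.data -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return W, losses, stoi, itos
```

For example, `train_bigram("hello world")` records a first loss of log 8 (all logits start at zero, so the prediction is uniform over the 8 distinct characters) and the loss falls from there. A GPT-style model reuses the same ingredients but needs more operations (matrix products, a nonlinearity, attention) and a transformer in place of the lookup table.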

@srishticodes - Srishti

Stanford just made a $200,000 AI degree free.

No application. No tuition. No “elite access”.

Stanford released its actual AI/ML curriculum on YouTube. Not a PR-friendly intro. Not “AI for the public”. This is the real thing: the same lectures shaping the people working on frontier models.

What just became public:
→ Deep Learning (CS230): https://youtube.com/playlist?list=PLoROMvodv4rNRRGdS0rBbXOUGA0wjdh1X&si=H3R8bDhWd1h8yaw7
→ Transformers & LLMs (CME295): https://youtube.com/playlist?list=PLoROMvodv4rPZxxeUFvQHCkZJsaEBdDZj&si=5iQjZJNl-YvJ6EaS
→ Language Models from Scratch (CS336): https://youtube.com/playlist?list=PLoROMvodv4rOY23Y0BoGoBGgQ1zmU_MT_&si=ABog2scp9v9a73Pk
→ ML from Human Feedback (CS329H): https://youtube.com/playlist?list=PLoROMvodv4rNm525zyAObP4al43WAifZz&si=2ZwNABa-KjG5w_hz
→ Computer Vision (CS231N): https://youtube.com/playlist?list=PLoROMvodv4rOmsNzYBMe0gJY2XS8AQg16&si=tw-3BAW5HdXOoYgn
→ LLM Evaluation & Scaling: https://youtube.com/playlist?list=PLoROMvodv4rObv1FMizXqumgVVdzX4_05&si=r6eOC9Hq2Wilz1yA

The uncomfortable truth: the degree isn’t the scarce asset anymore. Execution speed is. Top schools know this. That’s why they’re publishing the playbook.

👉 Bookmark this. Comment the first lecture you’ll actually watch.